# Description

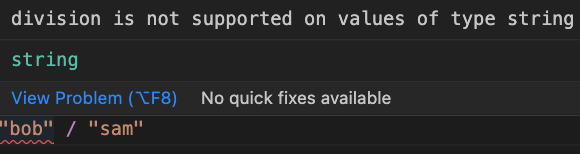

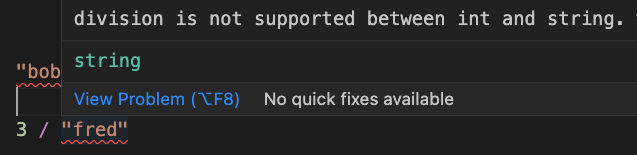

This improves the operation mismatch error in a few ways:

* We now detect if the left-hand side of the operation is at fault, and show a simpler error message if it is

* Removed the unhelpful hint

* Updated the error text to make it clear which types are causing the issue
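To illustrate the first bullet, here is a minimal sketch of the idea, not the code from this PR. The `Ty`, `Op`, and `TypeError` types, the type rules, and the message wording are all hypothetical stand-ins rather than Nushell's real definitions. The check first asks whether the operator can ever apply to the left-hand type; only if it can does it fall back to the more detailed two-sided mismatch.

```rust
/// Hypothetical stand-in for a value type; not Nushell's real `Type`.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Ty {
    Int,
    Float,
    String,
    Bool,
}

/// Hypothetical stand-in for a binary operator.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Op {
    Add,
    Mul,
    And,
}

#[derive(Debug, PartialEq, Eq)]
enum TypeError {
    /// The left-hand side can never be used with this operator.
    UnsupportedLhs { op: Op, lhs: Ty },
    /// The left-hand side is acceptable, but not with this right-hand side.
    Mismatch { op: Op, lhs: Ty, rhs: Ty },
}

impl TypeError {
    /// Illustrative wording only; the real error text differs.
    fn message(&self) -> String {
        match self {
            TypeError::UnsupportedLhs { op, lhs } => {
                format!("{:?} is not supported on values of type {:?}", op, lhs)
            }
            TypeError::Mismatch { op, lhs, rhs } => {
                format!("{:?} is not supported between {:?} and {:?}", op, lhs, rhs)
            }
        }
    }
}

/// Can `op` ever accept `lhs` on its left, regardless of the right-hand side?
fn lhs_supports(op: Op, lhs: Ty) -> bool {
    match op {
        Op::Add => matches!(lhs, Ty::Int | Ty::Float | Ty::String),
        Op::Mul => matches!(lhs, Ty::Int | Ty::Float),
        Op::And => matches!(lhs, Ty::Bool),
    }
}

/// Result type of `lhs op rhs`, or `None` if the combination is invalid.
fn result_type(op: Op, lhs: Ty, rhs: Ty) -> Option<Ty> {
    match (op, lhs, rhs) {
        (Op::Add | Op::Mul, Ty::Int, Ty::Int) => Some(Ty::Int),
        (Op::Add | Op::Mul, Ty::Int | Ty::Float, Ty::Int | Ty::Float) => Some(Ty::Float),
        (Op::Add, Ty::String, Ty::String) => Some(Ty::String),
        (Op::And, Ty::Bool, Ty::Bool) => Some(Ty::Bool),
        _ => None,
    }
}

/// Type-check a binary operation, blaming the left-hand side alone when possible.
fn check(op: Op, lhs: Ty, rhs: Ty) -> Result<Ty, TypeError> {
    if let Some(ty) = result_type(op, lhs, rhs) {
        return Ok(ty);
    }
    if !lhs_supports(op, lhs) {
        // Simpler error: the right-hand side is irrelevant here.
        Err(TypeError::UnsupportedLhs { op, lhs })
    } else {
        Err(TypeError::Mismatch { op, lhs, rhs })
    }
}
```

With these made-up rules, `check(Op::Mul, Ty::String, Ty::Int)` blames only the left-hand side, while `check(Op::Add, Ty::String, Ty::Int)` reports both types, since a string is a valid left operand for `Add` in general.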

# User-Facing Changes

Error messages and spans for operation type mismatches will change.

# Tests + Formatting

Don't forget to add tests that cover your changes.

Make sure you've run and fixed any issues with these commands:

- `cargo fmt --all -- --check` to check standard code formatting (`cargo fmt --all` applies these changes)

- `cargo clippy --workspace -- -D warnings -D clippy::unwrap_used -A clippy::needless_collect` to check that you're using the standard code style

- `cargo test --workspace` to check that all tests pass

- `cargo run -- crates/nu-utils/standard_library/tests.nu` to run the tests for the standard library

> **Note**
> from `nushell` you can also use the `toolkit` as follows
> ```bash
> use toolkit.nu # or use an `env_change` hook to activate it automatically
> toolkit check pr
> ```

# After Submitting

If your PR had any user-facing changes, update [the documentation](https://github.com/nushell/nushell.github.io) after the PR is merged, if necessary. This will help us keep the docs up to date.

# Description

Broken after #7415

CI does not currently build the benchmarks, so this slipped through the cracks.

# User-Facing Changes

None

# Tests + Formatting

A compile check for the benchmarks is currently missing, but I'm working towards that.

I've been using the new Criterion benchmarks and I noticed that they take a _long_ time to build before the benchmark can run. Turns out `cargo build` was building 3 separate benchmarking binaries with most of Nu's functionality in each one.

As a simple temporary fix, I've moved all the benchmarks into a single file so that we only build 1 binary.

### Future work

It would be nice to split the unrelated benchmarks out into modules, but when I did that a separate binary still got built for each one. I suspect Criterion's macros are doing something funny with module or file names. I've left a FIXME in the code to investigate this further.

A quick follow-up to https://github.com/nushell/nushell/pull/7686. This adds benchmarks for evaluating `default_env.nu` and `default_config.nu`, because evaluating config takes up the lion's share of Nushell's startup time. The benchmarks will help us speed up Nu's startup and test execution.

```
eval default_env.nu time: [4.2417 ms 4.2596 ms 4.2780 ms]
...
eval default_config.nu time: [1.9362 ms 1.9439 ms 1.9523 ms]
```
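For intuition about what each `time:` line summarises, here is a rough std-only sketch of timing a closure. It is not how Criterion works internally: Criterion adds warm-up, many samples, and statistical analysis, and its three bracketed figures are the lower bound, estimate, and upper bound of a confidence interval rather than a single mean. The helper name `mean_time_per_iter` is made up for this example.

```rust
use std::time::{Duration, Instant};

/// Runs `work` `iters` times and returns the mean wall-clock time per call.
/// Hypothetical helper for illustration; Criterion does much more than this.
fn mean_time_per_iter<F: FnMut()>(iters: u32, mut work: F) -> Duration {
    assert!(iters > 0, "need at least one iteration");
    let start = Instant::now();
    for _ in 0..iters {
        work();
    }
    // `Duration` implements `Div<u32>`, so this yields the per-call mean.
    start.elapsed() / iters
}
```

A single mean like this is sensitive to noise from the rest of the system, which is one reason to prefer a proper benchmarking harness for numbers that will be compared across commits.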

This PR sets up [Criterion](https://github.com/bheisler/criterion.rs) for benchmarking in the main `nu` crate, and adds some simple parser benchmarks.

To run the benchmarks, run `cargo bench` or `cargo bench -- <regex matching benchmark names>` in the repo root:

```bash
〉cargo bench -- parse
...
Running benches/parser_benchmark.rs (target/release/deps/parser_benchmark-75d224bac82d5b0b)
parse_default_env_file time: [221.17 µs 222.34 µs 223.61 µs]
Found 8 outliers among 100 measurements (8.00%)
  5 (5.00%) high mild
  3 (3.00%) high severe
parse_default_config_file
    time: [1.4935 ms 1.4993 ms 1.5059 ms]
Found 11 outliers among 100 measurements (11.00%)
  7 (7.00%) high mild
  4 (4.00%) high severe
```

Existing benchmarks from `nu-plugin` have been moved into the main `nu` crate to keep all our benchmarks in one place.