Compare commits

1 Commits

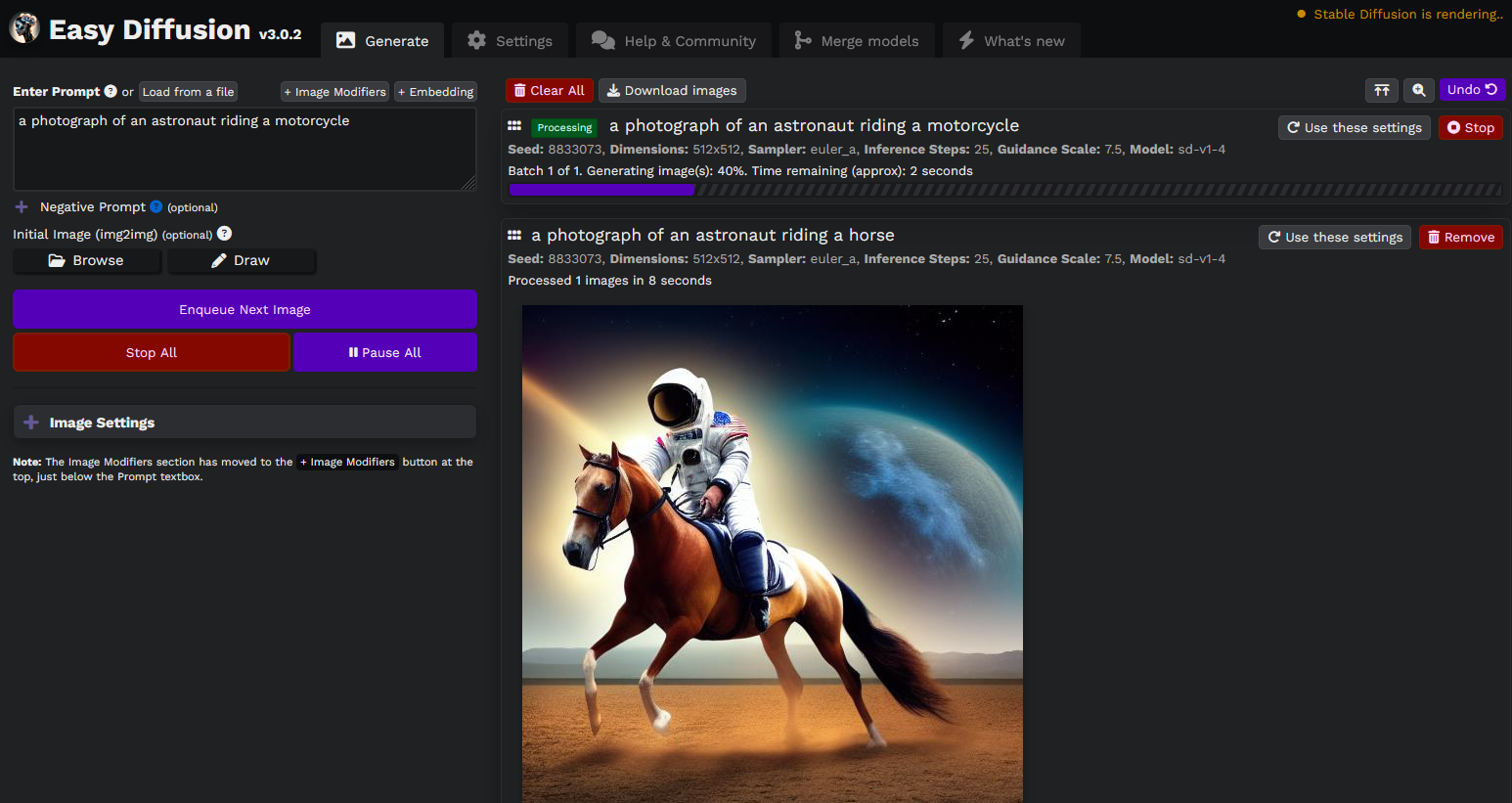

v3.0.2

...

revert-734

| Author | SHA1 | Date | |

|---|---|---|---|

| 645b596eb0 |

2

.github/FUNDING.yml

vendored

@ -1,3 +1,3 @@

# These are supported funding model platforms

ko_fi: easydiffusion

ko_fi: cmdr2_stablediffusion_ui

1

.gitignore

vendored

@ -3,4 +3,3 @@ installer

installer.tar

dist

.idea/*

node_modules/*

@ -1,9 +0,0 @@

*.min.*

*.py

*.json

*.html

/*

!/ui

/ui/easydiffusion

!/ui/plugins

!/ui/media

@ -1,7 +0,0 @@

{

"printWidth": 120,

"tabWidth": 4,

"semi": false,

"arrowParens": "always",

"trailingComma": "es5"

}

1137

3rd-PARTY-LICENSES

152

CHANGES.md

@ -1,160 +1,24 @@

# What's new?

## v3.0

### Major Changes

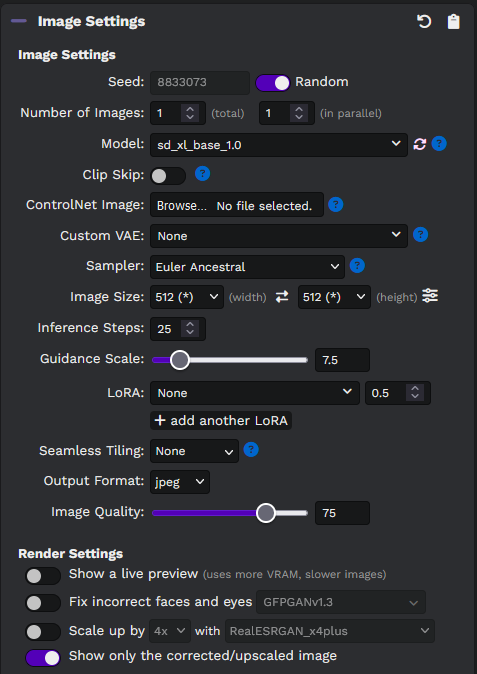

- **ControlNet** - Full support for ControlNet, with native integration of the common ControlNet models. Just select a control image, then choose the ControlNet filter/model and run. No additional configuration or download necessary. Supports custom ControlNets as well.

- **SDXL** - Full support for SDXL. No configuration necessary, just put the SDXL model in the `models/stable-diffusion` folder.

- **Multiple LoRAs** - Use multiple LoRAs, including SDXL and SD2-compatible LoRAs. Put them in the `models/lora` folder.

- **Embeddings** - Use textual inversion embeddings easily, by putting them in the `models/embeddings` folder and using their names in the prompt (or by clicking the `+ Embeddings` button to select embeddings visually). Thanks @JeLuf.

- **Seamless Tiling** - Generate repeating textures that can be useful for games and other art projects. Works best in 512x512 resolution. Thanks @JeLuf.

- **Inpainting Models** - Full support for inpainting models, including custom inpainting models. No configuration (or yaml files) necessary.

- **Faster than v2.5** - Nearly 40% faster than Easy Diffusion v2.5, and can be even faster if you enable xFormers.

- **Even less VRAM usage** - Less than 2 GB for 512x512 images on 'low' VRAM usage setting (SD 1.5). Can generate large images with SDXL.

- **WebP images** - Supports saving images in the lossless webp format.

- **Undo/Redo in the UI** - Remove tasks or images from the queue easily, and undo the action if you removed anything accidentally. Thanks @JeLuf.

- **Three new samplers, and latent upscaler** - Added `DEIS`, `DDPM` and `DPM++ 2m SDE` as additional samplers. Thanks @ogmaresca and @rbertus2000.

- **Significantly faster 'Upscale' and 'Fix Faces' buttons on the images**

- **Major rewrite of the code** - We've switched to using diffusers under-the-hood, which allows us to release new features faster, and focus on making the UI and installer even easier to use.
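Several of the v3.0 items above work by dropping files into a named folder (`models/stable-diffusion`, `models/lora`, `models/embeddings`). As a rough illustration only, the sketch below lists what sits in those folders. The function name `list_models` and the extension filter are assumptions made for this example; they are not taken from the Easy Diffusion code.

```python
from pathlib import Path

# Folder names come from the changelog entries above.
MODEL_FOLDERS = ("stable-diffusion", "lora", "embeddings")
# Assumption: only these two extensions are considered in this sketch.
ASSUMED_EXTENSIONS = {".ckpt", ".safetensors"}


def list_models(models_dir: Path) -> dict[str, list[str]]:
    """Return the model file names found directly inside each known folder."""
    found: dict[str, list[str]] = {}
    for folder in MODEL_FOLDERS:
        folder_path = models_dir / folder
        if not folder_path.is_dir():
            # A missing folder simply means no models of that kind.
            found[folder] = []
            continue
        found[folder] = sorted(
            p.name
            for p in folder_path.iterdir()
            if p.is_file() and p.suffix.lower() in ASSUMED_EXTENSIONS
        )
    return found
```

Calling `list_models(Path("models"))` from a hypothetical installation directory would return one list of file names per folder.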

### Detailed changelog

* 3.0.2 - 29 Aug 2023 - Fixed incorrect matching of embeddings from prompts.

* 3.0.2 - 24 Aug 2023 - Fix broken seamless tiling.

* 3.0.2 - 23 Aug 2023 - Fix styling on mobile devices.

* 3.0.2 - 22 Aug 2023 - Full support for inpainting models, including custom models. Support SD 1.x and SD 2.x inpainting models. Does not require you to specify a yaml config file.

* 3.0.2 - 22 Aug 2023 - Reduce VRAM consumption of controlnet in 'low' VRAM mode, and allow accelerating controlnets using xformers.

* 3.0.2 - 22 Aug 2023 - Improve auto-detection of SD 2.0 and 2.1 models, removing the need for custom yaml files for SD 2.x models. Improve the model load time by speeding-up the black image test.

* 3.0.1 - 18 Aug 2023 - Rotate an image if EXIF rotation is present. For e.g. this is common in images taken with a smartphone.

* 3.0.1 - 18 Aug 2023 - Resize control images to the task dimensions, to avoid memory errors with high-res control images.

* 3.0.1 - 18 Aug 2023 - Show controlnet filter preview in the task entry.

* 3.0.1 - 18 Aug 2023 - Fix drag-and-drop and 'Use these Settings' for LoRA and ControlNet.

* 3.0.1 - 18 Aug 2023 - Auto-save LoRA models and strengths.

* 3.0.1 - 17 Aug 2023 - Automatically use the correct yaml config file for custom SDXL models, even if a yaml file isn't present in the folder.

* 3.0.1 - 17 Aug 2023 - Fix broken embeddings with SDXL.

* 3.0.1 - 16 Aug 2023 - Fix broken LoRA with SDXL.

* 3.0.1 - 15 Aug 2023 - Fix broken seamless tiling.

* 3.0.1 - 15 Aug 2023 - Fix textual inversion embeddings not working in `low` VRAM usage mode.

* 3.0.1 - 15 Aug 2023 - Fix for custom VAEs not working in `low` VRAM usage mode.

* 3.0.1 - 14 Aug 2023 - Slider to change the image dimensions proportionally (in Image Settings). Thanks @JeLuf.

* 3.0.1 - 14 Aug 2023 - Show an error to the user if an embedding isn't compatible with the model, instead of failing silently without informing the user. Thanks @JeLuf.

* 3.0.1 - 14 Aug 2023 - Disable watermarking for SDXL img2img. Thanks @AvidGameFan.

* 3.0.0 - 3 Aug 2023 - Enabled diffusers for everyone by default. The old v2 engine can be used by disabling the "Use v3 engine" option in the Settings tab.

## v2.5

### Major Changes

- **Nearly twice as fast** - significantly faster speed of image generation. Code contributions are welcome to make our project even faster: https://github.com/easydiffusion/sdkit/#is-it-fast

- **Mac M1/M2 support** - Experimental support for Mac M1/M2. Thanks @michaelgallacher, @JeLuf and vishae.

- **AMD support for Linux** - Experimental support for AMD GPUs on Linux. Thanks @DianaNites and @JeLuf.

- **Nearly twice as fast** - significantly faster speed of image generation. We're now pretty close to automatic1111's speed. Code contributions are welcome to make our project even faster: https://github.com/easydiffusion/sdkit/#is-it-fast

- **Full support for Stable Diffusion 2.1 (including CPU)** - supports loading v1.4 or v2.0 or v2.1 models seamlessly. No need to enable "Test SD2", and no need to add `sd2_` to your SD 2.0 model file names. Works on CPU as well.

- **Memory optimized Stable Diffusion 2.1** - you can now use Stable Diffusion 2.1 models, with the same low VRAM optimizations that we've always had for SD 1.4. Please note, the SD 2.0 and 2.1 models require more GPU and System RAM, as compared to the SD 1.4 and 1.5 models.

- **11 new samplers!** - explore the new samplers, some of which can generate great images in less than 10 inference steps! We've added the Karras and UniPC samplers. Thanks @Schorny for the UniPC samplers.

- **Model Merging** - You can now merge two models (`.ckpt` or `.safetensors`) and output `.ckpt` or `.safetensors` models, optionally in `fp16` precision. Details: https://github.com/easydiffusion/easydiffusion/wiki/Model-Merging . Thanks @JeLuf.

- **Memory optimized Stable Diffusion 2.1** - you can now use 768x768 models for SD 2.1, with the same low VRAM optimizations that we've always had for SD 1.4. Please note, 4 GB graphics cards can still only support images upto 512x512 resolution.

- **6 new samplers!** - explore the new samplers, some of which can generate great images in less than 10 inference steps!

- **Model Merging** - You can now merge two models (`.ckpt` or `.safetensors`) and output `.ckpt` or `.safetensors` models, optionally in `fp16` precision. Details: https://github.com/cmdr2/stable-diffusion-ui/wiki/Model-Merging

- **Fast loading/unloading of VAEs** - No longer needs to reload the entire Stable Diffusion model, each time you change the VAE

- **Database of known models** - automatically picks the right configuration for known models. E.g. we automatically detect and apply "v" parameterization (required for some SD 2.0 models), and "fp32" attention precision (required for some SD 2.1 models).

- **Color correction for img2img** - an option to preserve the color profile (histogram) of the initial image. This is especially useful if you're getting red-tinted images after inpainting/masking.

- **Three GPU Memory Usage Settings** - `High` (fastest, maximum VRAM usage), `Balanced` (default - almost as fast, significantly lower VRAM usage), `Low` (slowest, very low VRAM usage). The `Low` setting is applied automatically for GPUs with less than 4 GB of VRAM.

- **Find models in sub-folders** - This allows you to organize your models into sub-folders inside `models/stable-diffusion`, instead of keeping them all in a single folder. Thanks @patriceac and @ogmaresca.

- **Custom Modifier Categories** - Ability to create custom modifiers with thumbnails, and custom categories (and hierarchy of categories). Details: https://github.com/easydiffusion/easydiffusion/wiki/Custom-Modifiers . Thanks @ogmaresca.

- **Embed metadata, or save as TXT/JSON** - You can now embed the metadata directly into the images, or save them as text or json files (choose in the Settings tab). Thanks @patriceac.

- **Find models in sub-folders** - This allows you to organize your models into sub-folders inside `models/stable-diffusion`, instead of keeping them all in a single folder.

- **Save metadata as JSON** - You can now save the metadata files as either text or json files (choose in the Settings tab).

- **Major rewrite of the code** - Most of the codebase has been reorganized and rewritten, to make it more manageable and easier for new developers to contribute features. We've separated our core engine into a new project called `sdkit`, which allows anyone to easily integrate Stable Diffusion (and related modules like GFPGAN etc) into their programming projects (via a simple `pip install sdkit`): https://github.com/easydiffusion/sdkit/

- **Name change** - Last, and probably the least, the UI is now called "Easy Diffusion". It indicates the focus of this project - an easy way for people to play with Stable Diffusion.
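The "Three GPU Memory Usage Settings" item above states two rules: `Balanced` is the default, and `Low` is applied automatically for GPUs with less than 4 GB of VRAM. A minimal sketch of that selection follows. The function name `pick_vram_usage_level` is hypothetical, and letting an explicit user choice take priority is an assumption of this example rather than documented behaviour.

```python
from typing import Optional

VRAM_LEVELS = ("high", "balanced", "low")
LOW_VRAM_THRESHOLD_GB = 4.0  # from the changelog: "less than 4 GB of VRAM"


def pick_vram_usage_level(total_vram_gb: float, requested: Optional[str] = None) -> str:
    """Pick a VRAM usage level from the GPU size and an optional user choice."""
    if requested is not None:
        # Assumption: an explicit choice is honoured as long as it is a known level.
        if requested not in VRAM_LEVELS:
            raise ValueError(f"unknown VRAM usage level: {requested!r}")
        return requested
    if total_vram_gb < LOW_VRAM_THRESHOLD_GB:
        return "low"  # applied automatically on small GPUs
    return "balanced"  # the documented default
```

With no explicit choice, a 3.5 GB card gets `low` and an 8 GB card gets `balanced`.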

Our focus continues to remain on an easy installation experience, and an easy user-interface. While still remaining pretty powerful, in terms of features and speed.

### Detailed changelog

* 2.5.48 - 1 Aug 2023 - (beta-only) Full support for ControlNets. You can select a control image to guide the AI. You can pick a filter to pre-process the image, and one of the known (or custom) controlnet models. Supports `OpenPose`, `Canny`, `Straight Lines`, `Depth`, `Line Art`, `Scribble`, `Soft Edge`, `Shuffle` and `Segment`.

* 2.5.47 - 30 Jul 2023 - An option to use `Strict Mask Border` while inpainting, to avoid touching areas outside the mask. But this might show a slight outline of the mask, which you will have to touch up separately.

* 2.5.47 - 29 Jul 2023 - (beta-only) Fix long prompts with SDXL.

* 2.5.47 - 29 Jul 2023 - (beta-only) Fix red dots in some SDXL images.

* 2.5.47 - 29 Jul 2023 - Significantly faster `Fix Faces` and `Upscale` buttons (on the image). They no longer need to generate the image from scratch, instead they just upscale/fix the generated image in-place.

* 2.5.47 - 28 Jul 2023 - Lots of internal code reorganization, in preparation for supporting Controlnets. No user-facing changes.

* 2.5.46 - 27 Jul 2023 - (beta-only) Full support for SD-XL models (base and refiner)!

* 2.5.45 - 24 Jul 2023 - (beta-only) Hide the samplers that won't be supported in the new diffusers version.

* 2.5.45 - 22 Jul 2023 - (beta-only) Fix the recently-broken inpainting models.

* 2.5.45 - 16 Jul 2023 - (beta-only) Fix the image quality of LoRAs, which had degraded in v2.5.44.

* 2.5.44 - 15 Jul 2023 - (beta-only) Support for multiple LoRA files.

* 2.5.43 - 9 Jul 2023 - (beta-only) Support for loading Textual Inversion embeddings. You can find the option in the Image Settings panel. Thanks @JeLuf.

* 2.5.43 - 9 Jul 2023 - Improve the startup time of the UI.

* 2.5.42 - 4 Jul 2023 - Keyboard shortcuts for the Image Editor. Thanks @JeLuf.

* 2.5.42 - 28 Jun 2023 - Allow dropping images from folders to use as an Initial Image.

* 2.5.42 - 26 Jun 2023 - Show a popup for Image Modifiers, allowing a larger screen space, better UX on mobile screens, and more room for us to develop and improve the Image Modifiers panel. Thanks @Hakorr.

* 2.5.42 - 26 Jun 2023 - (beta-only) Show a welcome screen for users of the diffusers beta, with instructions on how to use the new prompt syntax, and known bugs. Thanks @JeLuf.

* 2.5.42 - 26 Jun 2023 - Use YAML files for config. You can now edit the `config.yaml` file (using a text editor, like Notepad). This file is present inside the Easy Diffusion folder, and is easier to read and edit (for humans) than JSON. Thanks @JeLuf.

* 2.5.41 - 24 Jun 2023 - (beta-only) Fix broken inpainting in low VRAM usage mode.

* 2.5.41 - 24 Jun 2023 - (beta-only) Fix a recent regression where the LoRA would not get applied when changing SD models.

* 2.5.41 - 23 Jun 2023 - Fix a regression where latent upscaler stopped working on PCs without a graphics card.

* 2.5.41 - 20 Jun 2023 - Automatically fix black images if fp32 attention precision is required in diffusers.

* 2.5.41 - 19 Jun 2023 - Another fix for multi-gpu rendering (in all VRAM usage modes).

* 2.5.41 - 13 Jun 2023 - Fix multi-gpu bug with "low" VRAM usage mode while generating images.

* 2.5.41 - 12 Jun 2023 - Fix multi-gpu bug with CodeFormer.

* 2.5.41 - 6 Jun 2023 - Allow changing the strength of CodeFormer, and slightly improved styling of the CodeFormer options.

* 2.5.41 - 5 Jun 2023 - Allow sharing an Easy Diffusion instance via https://try.cloudflare.com/ . You can find this option at the bottom of the Settings tab. Thanks @JeLuf.

* 2.5.41 - 5 Jun 2023 - Show an option to download for tiled images. Shows a button on the generated image. Creates larger images by tiling them with the image generated by Easy Diffusion. Thanks @JeLuf.

* 2.5.41 - 5 Jun 2023 - (beta-only) Allow LoRA strengths between -2 and 2. Thanks @ogmaresca.

* 2.5.40 - 5 Jun 2023 - Reduce the VRAM usage of Latent Upscaling when using "balanced" VRAM usage mode.

* 2.5.40 - 5 Jun 2023 - Fix the "realesrgan" key error when using CodeFormer with more than 1 image in a batch.

* 2.5.40 - 3 Jun 2023 - Added CodeFormer as another option for fixing faces and eyes. CodeFormer tends to perform better than GFPGAN for many images. Thanks @patriceac for the implementation, and for contacting the CodeFormer team (who were supportive of it being integrated into Easy Diffusion).

* 2.5.39 - 25 May 2023 - (beta-only) Seamless Tiling - make seamlessly tiled images, e.g. rock and grass textures. Thanks @JeLuf.

* 2.5.38 - 24 May 2023 - Better reporting of errors, and show an explanation if the user cannot disable the "Use CPU" setting.

* 2.5.38 - 23 May 2023 - Add Latent Upscaler as another option for upscaling images. Thanks @JeLuf for the implementation of the Latent Upscaler model.

* 2.5.37 - 19 May 2023 - (beta-only) Two more samplers: DDPM and DEIS. Also disables the samplers that aren't working yet in the Diffusers version. Thanks @ogmaresca.

* 2.5.37 - 19 May 2023 - (beta-only) Support CLIP-Skip. You can set this option under the models dropdown. Thanks @JeLuf.

* 2.5.37 - 19 May 2023 - (beta-only) More VRAM optimizations for all modes in diffusers. The VRAM usage for diffusers in "low" and "balanced" should now be equal or less than the non-diffusers version. Performs softmax in half precision, like sdkit does.

* 2.5.36 - 16 May 2023 - (beta-only) More VRAM optimizations for "balanced" VRAM usage mode.

* 2.5.36 - 11 May 2023 - (beta-only) More VRAM optimizations for "low" VRAM usage mode.

* 2.5.36 - 10 May 2023 - (beta-only) Bug fix for "meta" error when using a LoRA in 'low' VRAM usage mode.

* 2.5.35 - 8 May 2023 - Allow dragging a zoomed-in image (after opening an image with the "expand" button). Thanks @ogmaresca.

* 2.5.35 - 3 May 2023 - (beta-only) First round of VRAM Optimizations for the "Test Diffusers" version. This change significantly reduces the amount of VRAM used by the diffusers version during image generation. The VRAM usage is still not equal to the "non-diffusers" version, but more optimizations are coming soon.

* 2.5.34 - 22 Apr 2023 - Don't start the browser in an incognito new profile (on Windows). Thanks @JeLuf.

* 2.5.33 - 21 Apr 2023 - Install PyTorch 2.0 on new installations (on Windows and Linux).

* 2.5.32 - 19 Apr 2023 - Automatically check for black images, and set full-precision if necessary (for attn). This means custom models based on Stable Diffusion v2.1 will just work, without needing special command-line arguments or editing of yaml config files.

* 2.5.32 - 18 Apr 2023 - Automatic support for AMD graphics cards on Linux. Thanks @DianaNites and @JeLuf.

* 2.5.31 - 10 Apr 2023 - Reduce VRAM usage while upscaling.

* 2.5.31 - 6 Apr 2023 - Allow seeds upto `4,294,967,295`. Thanks @ogmaresca.

* 2.5.31 - 6 Apr 2023 - Buttons to show the previous/next image in the image popup. Thanks @ogmaresca.

* 2.5.30 - 5 Apr 2023 - Fix a bug where the JPEG image quality wasn't being respected when embedding the metadata into it. Thanks @JeLuf.

* 2.5.30 - 1 Apr 2023 - (beta-only) Slider to control the strength of the LoRA model.

* 2.5.30 - 28 Mar 2023 - Refactor task entry config to use a generating method. Added ability for plugins to easily add to this. Removed confusing sentence from `contributing.md`

* 2.5.30 - 28 Mar 2023 - Allow the user to undo the deletion of tasks or images, instead of showing a pop-up each time. The new `Undo` button will be present at the top of the UI. Thanks @JeLuf.

* 2.5.30 - 28 Mar 2023 - Support saving lossless WEBP images. Thanks @ogmaresca.

* 2.5.30 - 28 Mar 2023 - Lots of bug fixes for the UI (Read LoRA flag in metadata files, new prompt weight format with scrollwheel, fix overflow with lots of tabs, clear button in image editor, shorter filenames in download). Thanks @patriceac, @JeLuf and @ogmaresca.

* 2.5.29 - 27 Mar 2023 - (beta-only) Fix a bug where some non-square images would fail while inpainting with a `The size of tensor a must match size of tensor b` error.

* 2.5.29 - 27 Mar 2023 - (beta-only) Fix the `incorrect number of channels` error, when given a PNG image with an alpha channel in `Test Diffusers`.

* 2.5.29 - 27 Mar 2023 - (beta-only) Fix broken inpainting in `Test Diffusers`.

* 2.5.28 - 24 Mar 2023 - (beta-only) Support for weighted prompts and long prompt lengths (not limited to 77 tokens). This change requires enabling the `Test Diffusers` setting in beta (in the Settings tab), and restarting the program.

* 2.5.27 - 21 Mar 2023 - (beta-only) LoRA support, accessible by enabling the `Test Diffusers` setting (in the Settings tab in the UI). This change switches the internal engine to diffusers (if the `Test Diffusers` setting is enabled). If the `Test Diffusers` flag is disabled, it'll have no impact for the user.

* 2.5.26 - 15 Mar 2023 - Allow styling the buttons displayed on an image. Update the API to allow multiple buttons and text labels in a single row. Thanks @ogmaresca.

* 2.5.26 - 15 Mar 2023 - View images in full-screen, by either clicking on the image, or clicking the "Full screen" icon next to the Seed number on the image. Thanks @ogmaresca for the internal API.

* 2.5.25 - 14 Mar 2023 - Button to download all the images, and all the metadata as a zip file. This is available at the top of the UI, as well as on each image. Thanks @JeLuf.

* 2.5.25 - 14 Mar 2023 - Lots of UI tweaks and bug fixes. Thanks @patriceac and @JeLuf.

* 2.5.24 - 11 Mar 2023 - Button to load an image mask from a file.

* 2.5.24 - 10 Mar 2023 - Logo change. Image credit: @lazlo_vii.

* 2.5.23 - 8 Mar 2023 - Experimental support for Mac M1/M2. Thanks @michaelgallacher, @JeLuf and vishae!

* 2.5.23 - 8 Mar 2023 - Ability to create custom modifiers with thumbnails, and custom categories (and hierarchy of categories). More details - https://github.com/easydiffusion/easydiffusion/wiki/Custom-Modifiers . Thanks @ogmaresca.

* 2.5.22 - 28 Feb 2023 - Minor styling changes to UI buttons, and the models dropdown.

* 2.5.22 - 28 Feb 2023 - Lots of UI-related bug fixes. Thanks @patriceac.

* 2.5.21 - 22 Feb 2023 - An option to control the size of the image thumbnails. You can use the `Display options` in the top-right corner to change this. Thanks @JeLuf.

* 2.5.20 - 20 Feb 2023 - Support saving images in WEBP format (which consumes less disk space, with similar quality). Thanks @ogmaresca.

* 2.5.20 - 18 Feb 2023 - A setting to block NSFW images from being generated. You can enable this setting in the Settings tab.

* 2.5.19 - 17 Feb 2023 - Initial support for server-side plugins. Currently supports overriding the `get_cond_and_uncond()` function.

* 2.5.18 - 17 Feb 2023 - 5 new samplers! UniPC samplers, some of which produce images in less than 15 steps. Thanks @Schorny.

* 2.5.16 - 13 Feb 2023 - Searchable dropdown for models. This is useful if you have a LOT of models. You can type part of the model name, to auto-search through your models. Thanks @patriceac for the feature, and @AssassinJN for help in UI tweaks!

* 2.5.16 - 13 Feb 2023 - Lots of fixes and improvements to the installer. First round of changes to add Mac support. Thanks @JeLuf.

* 2.5.16 - 13 Feb 2023 - UI bug fixes for the inpainter editor. Thanks @patriceac.

* 2.5.16 - 13 Feb 2023 - Fix broken task reorder. Thanks @JeLuf.

* 2.5.16 - 13 Feb 2023 - Remove a task if all the images inside it have been removed. Thanks @AssassinJN.

* 2.5.16 - 10 Feb 2023 - Embed metadata into the JPG/PNG images, if selected in the "Settings" tab (under "Metadata format"). Thanks @patriceac.

* 2.5.16 - 10 Feb 2023 - Sort models alphabetically in the models dropdown. Thanks @ogmaresca.

* 2.5.16 - 10 Feb 2023 - Support multiple GFPGAN models. Download new GFPGAN models into the `models/gfpgan` folder, and refresh the UI to use it. Thanks @JeLuf.

* 2.5.16 - 10 Feb 2023 - Allow a server to enforce a fixed directory path to save images. This is useful if the server is exposed to a lot of users. This can be set in the `config.json` file as `force_save_path: "/path/to/fixed/save/dir"`. E.g. `force_save_path: "D:/user_images"`. Thanks @JeLuf.

* 2.5.16 - 10 Feb 2023 - The "Make Images" button now shows the correct amount of images it'll create when using operators like `{}` or `|`. For e.g. if the prompt is `Photo of a {woman, man}`, then the button will say `Make 2 Images`. Thanks @JeLuf.

* 2.5.16 - 10 Feb 2023 - A bunch of UI-related bug fixes. Thanks @patriceac.

* 2.5.15 - 8 Feb 2023 - Allow using 'balanced' VRAM usage mode on GPUs with 4 GB or less of VRAM. This mode used to be called 'Turbo' in the previous version.

* 2.5.14 - 8 Feb 2023 - Fix broken auto-save settings. We renamed `sampler` to `sampler_name`, which caused old settings to fail.

* 2.5.14 - 6 Feb 2023 - Simplify the UI for merging models, and some other minor UI tweaks. Better error reporting if a model failed to load.

* 2.5.14 - 3 Feb 2023 - Fix the 'Make Similar Images' button, which was producing incorrect images (weren't very similar).

* 2.5.13 - 1 Feb 2023 - Fix the remaining GPU memory leaks, including a better fix (more comprehensive) for the change in 2.5.12 (27 Jan).

* 2.5.12 - 27 Jan 2023 - Fix a memory leak, which made the UI unresponsive after an out-of-memory error. The allocated memory is now freed-up after an error.

* 2.5.11 - 25 Jan 2023 - UI for Merging Models. Thanks @JeLuf. More info: https://github.com/easydiffusion/easydiffusion/wiki/Model-Merging

* 2.5.10 - 24 Jan 2023 - Reduce the VRAM usage for img2img in 'balanced' mode (without reducing the rendering speed), to make it similar to v2.4 of this UI.

* 2.5.9 - 23 Jan 2023 - Fix a bug where img2img would produce poorer-quality images for the same settings, as compared to version 2.4 of this UI.

* 2.5.9 - 23 Jan 2023 - Reduce the VRAM usage for 'balanced' mode (without reducing the rendering speed), to make it similar to v2.4 of the UI.
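The 2.5.16 entry about the "Make Images" button gives a concrete rule: the prompt `Photo of a {woman, man}` yields `Make 2 Images`. The sketch below shows one way to expand `{...}` groups and count the resulting prompts. It is an illustration only: the helper name `expand_braces` is hypothetical, nested braces are not handled, and the `|` operator mentioned in the same entry is not modelled because the changelog does not describe its semantics.

```python
import re
from itertools import product

# Matches one non-nested {option, option, ...} group.
BRACE_GROUP = re.compile(r"\{([^{}]*)\}")


def expand_braces(prompt: str) -> list[str]:
    """Expand every {a, b} group into one prompt per combination of options."""
    # With one capturing group, split() alternates literal text and group contents.
    parts = BRACE_GROUP.split(prompt)
    literals = parts[0::2]
    choices = [[opt.strip() for opt in group.split(",")] for group in parts[1::2]]

    prompts = []
    for combo in product(*choices):
        pieces = [literals[0]]
        for option, literal in zip(combo, literals[1:]):
            pieces.append(option)
            pieces.append(literal)
        prompts.append("".join(pieces))
    return prompts


def image_count(prompt: str) -> int:
    """Number of prompts (and so images per batch entry) the expansion produces."""
    return len(expand_braces(prompt))
```

For the changelog's own example, `image_count("Photo of a {woman, man}")` gives 2, and a prompt without braces gives 1.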

@ -183,8 +47,8 @@ Our focus continues to remain on an easy installation experience, and an easy us

- **Automatic scanning for malicious model files** - using `picklescan`, and support for `safetensor` model format. Thanks @JeLuf

- **Image Editor** - for drawing simple images for guiding the AI. Thanks @mdiller

- **Use pre-trained hypernetworks** - for improving the quality of images. Thanks @C0bra5

- **Support for custom VAE models**. You can place your VAE files in the `models/vae` folder, and refresh the browser page to use them. More info: https://github.com/easydiffusion/easydiffusion/wiki/VAE-Variational-Auto-Encoder

- **Experimental support for multiple GPUs!** It should work automatically. Just open one browser tab per GPU, and spread your tasks across your GPUs. For e.g. open our UI in two browser tabs if you have two GPUs. You can customize which GPUs it should use in the "Settings" tab, otherwise let it automatically pick the best GPUs. Thanks @madrang . More info: https://github.com/easydiffusion/easydiffusion/wiki/Run-on-Multiple-GPUs

- **Support for custom VAE models**. You can place your VAE files in the `models/vae` folder, and refresh the browser page to use them. More info: https://github.com/cmdr2/stable-diffusion-ui/wiki/VAE-Variational-Auto-Encoder

- **Experimental support for multiple GPUs!** It should work automatically. Just open one browser tab per GPU, and spread your tasks across your GPUs. For e.g. open our UI in two browser tabs if you have two GPUs. You can customize which GPUs it should use in the "Settings" tab, otherwise let it automatically pick the best GPUs. Thanks @madrang . More info: https://github.com/cmdr2/stable-diffusion-ui/wiki/Run-on-Multiple-GPUs

- **Cleaner UI design** - Show settings and help in new tabs, instead of dropdown popups (which were buggy). Thanks @mdiller

- **Progress bar.** Thanks @mdiller

- **Custom Image Modifiers** - You can now save your custom image modifiers! Your saved modifiers can include special characters like `{}, (), [], |`

@ -1,6 +1,6 @@

Hi there, these instructions are meant for the developers of this project.

If you only want to use the Stable Diffusion UI, you've downloaded the wrong file. In that case, please download and follow the instructions at https://github.com/easydiffusion/easydiffusion#installation

If you only want to use the Stable Diffusion UI, you've downloaded the wrong file. In that case, please download and follow the instructions at https://github.com/cmdr2/stable-diffusion-ui#installation

Thanks

@ -13,7 +13,7 @@ If you would like to contribute to this project, there is a discord for discussi

This is in-flux, but one way to get a development environment running for editing the UI of this project is:

(swap `.sh` or `.bat` in instructions depending on your environment, and be sure to adjust any paths to match where you're working)

1) Install the project to a new location using the [usual installation process](https://github.com/easydiffusion/easydiffusion#installation), e.g. to `/projects/stable-diffusion-ui-archive`

1) Install the project to a new location using the [usual installation process](https://github.com/cmdr2/stable-diffusion-ui#installation), e.g. to `/projects/stable-diffusion-ui-archive`

2) Start the newly installed project, and check that you can view and generate images on `localhost:9000`

3) Next, please clone the project repository using `git clone` (e.g. to `/projects/stable-diffusion-ui-repo`)

4) Close the server (started in step 2), and edit `/projects/stable-diffusion-ui-archive/scripts/on_env_start.sh` (or `on_env_start.bat`)

@ -42,6 +42,8 @@ or for Windows

10) Congrats, now any changes you make in your repo `ui` folder are linked to this running archive of the app and can be previewed in the browser.

11) Please update CHANGES.md in your pull requests.

Check the `ui/frontend/build/README.md` for instructions on running and building the React code.

## Development environment for Installer changes

Build the Windows installer using Windows, and the Linux installer using Linux. Don't mix the two, and don't use WSL. An Ubuntu VM is fine for building the Linux installer on a Windows host.

@ -1,24 +1,24 @@

Congrats on downloading Easy Diffusion, version 3!

Congrats on downloading Stable Diffusion UI, version 2!

If you haven't downloaded Easy Diffusion yet, please download from https://github.com/easydiffusion/easydiffusion#installation

If you haven't downloaded Stable Diffusion UI yet, please download from https://github.com/cmdr2/stable-diffusion-ui#installation

After downloading, to install please follow these instructions:

For Windows:

- Please double-click the "Easy-Diffusion-Windows.exe" file and follow the instructions.

- Please double-click the "Start Stable Diffusion UI.cmd" file inside the "stable-diffusion-ui" folder.

For Linux and Mac:

- Please open a terminal, and go to the "easy-diffusion" directory. Then run ./start.sh

For Linux:

- Please open a terminal, and go to the "stable-diffusion-ui" directory. Then run ./start.sh

That file will automatically install everything. After that it will start the Easy Diffusion interface in a web browser.

That file will automatically install everything. After that it will start the Stable Diffusion interface in a web browser.

To start Easy Diffusion in the future, please run the same command mentioned above.

To start the UI in the future, please run the same command mentioned above.

If you have any problems, please:

1. Try the troubleshooting steps at https://github.com/easydiffusion/easydiffusion/wiki/Troubleshooting

1. Try the troubleshooting steps at https://github.com/cmdr2/stable-diffusion-ui/wiki/Troubleshooting

2. Or, seek help from the community at https://discord.com/invite/u9yhsFmEkB

3. Or, file an issue at https://github.com/easydiffusion/easydiffusion/issues

3. Or, file an issue at https://github.com/cmdr2/stable-diffusion-ui/issues

Thanks

cmdr2 (and contributors to the project)

|

||||

cmdr2 (and contributors to the project)

|

||||

1

NSIS/.gitignore

vendored

@ -1 +0,0 @@

|

||||

*.exe

|

||||

|

Binary image files (4); sizes before the change: 565 KiB, 223 KiB, 454 KiB, 46 KiB.

@ -1 +0,0 @@

|

||||

!define EXISTING_INSTALLATION_DIR "D:\path\to\installed\easy-diffusion"

|

||||

@ -1,24 +1,20 @@

|

||||

; Script generated by the HM NIS Edit Script Wizard.

|

||||

|

||||

Target amd64-unicode

|

||||

Target x86-unicode

|

||||

Unicode True

|

||||

SetCompressor /FINAL lzma

|

||||

RequestExecutionLevel user

|

||||

!AddPluginDir /amd64-unicode "."

|

||||

!AddPluginDir /x86-unicode "."

|

||||

; HM NIS Edit Wizard helper defines

|

||||

!define PRODUCT_NAME "Easy Diffusion"

|

||||

!define PRODUCT_VERSION "2.5"

|

||||

!define PRODUCT_NAME "Stable Diffusion UI"

|

||||

!define PRODUCT_VERSION "Installer 2.35"

|

||||

!define PRODUCT_PUBLISHER "cmdr2 and contributors"

|

||||

!define PRODUCT_WEB_SITE "https://stable-diffusion-ui.github.io"

|

||||

!define PRODUCT_DIR_REGKEY "Software\Microsoft\Easy Diffusion\App Paths\installer.exe"

|

||||

!define PRODUCT_DIR_REGKEY "Software\Microsoft\Cmdr2\App Paths\installer.exe"

|

||||

|

||||

; MUI 1.67 compatible ------

|

||||

!include "MUI.nsh"

|

||||

!include "LogicLib.nsh"

|

||||

!include "nsDialogs.nsh"

|

||||

|

||||

!include "nsisconf.nsh"

|

||||

|

||||

Var Dialog

|

||||

Var Label

|

||||

Var Button

|

||||

@ -110,7 +106,7 @@ Function DirectoryLeave

|

||||

StrCpy $5 $INSTDIR 3

|

||||

System::Call 'Kernel32::GetVolumeInformation(t "$5",t,i ${NSIS_MAX_STRLEN},*i,*i,*i,t.r1,i ${NSIS_MAX_STRLEN})i.r0'

|

||||

${If} $0 <> 0

|

||||

${AndIf} $1 != "NTFS"

|

||||

${AndIf} $1 == "NTFS"

|

||||

MessageBox mb_ok "$5 has filesystem type '$1'.$\nOnly NTFS filesystems are supported.$\nPlease choose a different drive."

|

||||

Abort

|

||||

${EndIf}

|

||||

@ -144,7 +140,7 @@ Function MediaPackDialog

|

||||

Abort

|

||||

${EndIf}

|

||||

|

||||

${NSD_CreateLabel} 0 0 100% 48u "The Windows Media Feature Pack is missing on this computer. It is required for Easy Diffusion.$\nYou can continue the installation after installing the Windows Media Feature Pack."

|

||||

${NSD_CreateLabel} 0 0 100% 48u "The Windows Media Feature Pack is missing on this computer. It is required for the Stable Diffusion UI.$\nYou can continue the installation after installing the Windows Media Feature Pack."

|

||||

Pop $Label

|

||||

|

||||

${NSD_CreateButton} 10% 49u 80% 12u "Download Meda Feature Pack from Microsoft"

|

||||

@ -157,20 +153,16 @@ Function MediaPackDialog

|

||||

nsDialogs::Show

|

||||

FunctionEnd

|

||||

|

||||

Function FinishPageAction

|

||||

CreateShortCut "$DESKTOP\Easy Diffusion.lnk" "$INSTDIR\Start Stable Diffusion UI.cmd" "" "$INSTDIR\installer_files\cyborg_flower_girl.ico"

|

||||

FunctionEnd

|

||||

|

||||

;---------------------------------------------------------------------------------------------------------

|

||||

; MUI Settings

|

||||

;---------------------------------------------------------------------------------------------------------

|

||||

!define MUI_ABORTWARNING

|

||||

!define MUI_ICON "cyborg_flower_girl.ico"

|

||||

!define MUI_ICON "sd.ico"

|

||||

|

||||

!define MUI_WELCOMEFINISHPAGE_BITMAP "cyborg_flower_girl.bmp"

|

||||

!define MUI_WELCOMEFINISHPAGE_BITMAP "astro.bmp"

|

||||

|

||||

; Welcome page

|

||||

!define MUI_WELCOMEPAGE_TEXT "This installer will guide you through the installation of Easy Diffusion.$\n$\n\

|

||||

!define MUI_WELCOMEPAGE_TEXT "This installer will guide you through the installation of Stable Diffusion UI.$\n$\n\

|

||||

Click Next to continue."

|

||||

!insertmacro MUI_PAGE_WELCOME

|

||||

Page custom MediaPackDialog

|

||||

@ -186,11 +178,6 @@ Page custom MediaPackDialog

|

||||

!insertmacro MUI_PAGE_INSTFILES

|

||||

|

||||

; Finish page

|

||||

!define MUI_FINISHPAGE_SHOWREADME ""

|

||||

!define MUI_FINISHPAGE_SHOWREADME_NOTCHECKED

|

||||

!define MUI_FINISHPAGE_SHOWREADME_TEXT "Create Desktop Shortcut"

|

||||

!define MUI_FINISHPAGE_SHOWREADME_FUNCTION FinishPageAction

|

||||

|

||||

!define MUI_FINISHPAGE_RUN "$INSTDIR\Start Stable Diffusion UI.cmd"

|

||||

!insertmacro MUI_PAGE_FINISH

|

||||

|

||||

@ -201,8 +188,8 @@ Page custom MediaPackDialog

|

||||

;---------------------------------------------------------------------------------------------------------

|

||||

|

||||

Name "${PRODUCT_NAME} ${PRODUCT_VERSION}"

|

||||

OutFile "Install Easy Diffusion.exe"

|

||||

InstallDir "C:\EasyDiffusion\"

|

||||

OutFile "Install Stable Diffusion UI.exe"

|

||||

InstallDir "C:\Stable-Diffusion-UI\"

|

||||

InstallDirRegKey HKLM "${PRODUCT_DIR_REGKEY}" ""

|

||||

ShowInstDetails show

|

||||

|

||||

@ -213,42 +200,15 @@ Section "MainSection" SEC01

|

||||

File "..\CreativeML Open RAIL-M License"

|

||||

File "..\How to install and run.txt"

|

||||

File "..\LICENSE"

|

||||

File "..\scripts\Start Stable Diffusion UI.cmd"

|

||||

File /r "${EXISTING_INSTALLATION_DIR}\installer_files"

|

||||

File /r "${EXISTING_INSTALLATION_DIR}\profile"

|

||||

File /r "${EXISTING_INSTALLATION_DIR}\sd-ui-files"

|

||||

SetOutPath "$INSTDIR\installer_files"

|

||||

File "cyborg_flower_girl.ico"

|

||||

File "..\Start Stable Diffusion UI.cmd"

|

||||

SetOutPath "$INSTDIR\scripts"

|

||||

File "${EXISTING_INSTALLATION_DIR}\scripts\install_status.txt"

|

||||

File "..\scripts\bootstrap.bat"

|

||||

File "..\scripts\install_status.txt"

|

||||

File "..\scripts\on_env_start.bat"

|

||||

File "C:\windows\system32\curl.exe"

|

||||

CreateDirectory "$INSTDIR\models"

|

||||

CreateDirectory "$INSTDIR\models\stable-diffusion"

|

||||

CreateDirectory "$INSTDIR\models\gfpgan"

|

||||

CreateDirectory "$INSTDIR\models\realesrgan"

|

||||

CreateDirectory "$INSTDIR\models\vae"

|

||||

CreateDirectory "$SMPROGRAMS\Easy Diffusion"

|

||||

CreateShortCut "$SMPROGRAMS\Easy Diffusion\Easy Diffusion.lnk" "$INSTDIR\Start Stable Diffusion UI.cmd" "" "$INSTDIR\installer_files\cyborg_flower_girl.ico"

|

||||

|

||||

DetailPrint 'Downloading the Stable Diffusion 1.4 model...'

|

||||

NScurl::http get "https://huggingface.co/CompVis/stable-diffusion-v-1-4-original/resolve/main/sd-v1-4.ckpt" "$INSTDIR\models\stable-diffusion\sd-v1-4.ckpt" /CANCEL /INSIST /END

|

||||

|

||||

DetailPrint 'Downloading the GFPGAN model...'

|

||||

NScurl::http get "https://github.com/TencentARC/GFPGAN/releases/download/v1.3.4/GFPGANv1.4.pth" "$INSTDIR\models\gfpgan\GFPGANv1.4.pth" /CANCEL /INSIST /END

|

||||

|

||||

DetailPrint 'Downloading the RealESRGAN_x4plus model...'

|

||||

NScurl::http get "https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth" "$INSTDIR\models\realesrgan\RealESRGAN_x4plus.pth" /CANCEL /INSIST /END

|

||||

|

||||

DetailPrint 'Downloading the RealESRGAN_x4plus_anime model...'

|

||||

NScurl::http get "https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth" "$INSTDIR\models\realesrgan\RealESRGAN_x4plus_anime_6B.pth" /CANCEL /INSIST /END

|

||||

|

||||

DetailPrint 'Downloading the default VAE (sd-vae-ft-mse-original) model...'

|

||||

NScurl::http get "https://huggingface.co/stabilityai/sd-vae-ft-mse-original/resolve/main/vae-ft-mse-840000-ema-pruned.ckpt" "$INSTDIR\models\vae\vae-ft-mse-840000-ema-pruned.ckpt" /CANCEL /INSIST /END

|

||||

|

||||

DetailPrint 'Downloading the CLIP model (clip-vit-large-patch14)...'

|

||||

NScurl::http get "https://huggingface.co/openai/clip-vit-large-patch14/resolve/8d052a0f05efbaefbc9e8786ba291cfdf93e5bff/pytorch_model.bin" "$INSTDIR\profile\.cache\huggingface\hub\models--openai--clip-vit-large-patch14\snapshots\8d052a0f05efbaefbc9e8786ba291cfdf93e5bff\pytorch_model.bin" /CANCEL /INSIST /END

|

||||

|

||||

CreateDirectory "$INSTDIR\profile"

|

||||

CreateDirectory "$SMPROGRAMS\Stable Diffusion UI"

|

||||

CreateShortCut "$SMPROGRAMS\Stable Diffusion UI\Start Stable Diffusion UI.lnk" "$INSTDIR\Start Stable Diffusion UI.cmd"

|

||||

SectionEnd

|

||||

|

||||

;---------------------------------------------------------------------------------------------------------

|

||||

@ -294,7 +254,7 @@ Function .onInit

|

||||

|

||||

${If} $4 < "8000"

|

||||

MessageBox MB_OK|MB_ICONEXCLAMATION "Warning!$\n$\nYour system has less than 8GB of memory (RAM).$\n$\n\

|

||||

You can still try to install Easy Diffusion,$\nbut it might have problems to start, or run$\nvery slowly."

|

||||

You can still try to install Stable Diffusion UI,$\nbut it might have problems to start, or run$\nvery slowly."

|

||||

${EndIf}

|

||||

|

||||

FunctionEnd

|

||||

|

||||

@ -1,9 +0,0 @@

|

||||

// placeholder until a more formal and legal-sounding privacy policy document is written. but the information below is true.

|

||||

|

||||

This is a summary of whether Easy Diffusion uses your data or tracks you:
* The short answer is - Easy Diffusion does *not* use your data, and does *not* track you.
* Easy Diffusion does not send your prompts or usage or analytics to anyone. There is no tracking. We don't even know how many people use Easy Diffusion, let alone their prompts.
* Easy Diffusion fetches updates to the code whenever it starts up. It does this by contacting GitHub directly, via SSL (secure connection). Only your computer and GitHub and [this repository](https://github.com/easydiffusion/easydiffusion) are involved, and no third party is involved. Some countries intercept SSL connections; that's not something we can do much about. GitHub does *not* share statistics (even with me) about how many people fetched code updates.
* Easy Diffusion fetches the models from huggingface.co and github.com, if they don't exist on your PC. For example, if the safety checker (NSFW) model doesn't exist, it'll try to download it.
* Easy Diffusion fetches code packages from pypi.org, which is the standard hosting service for all Python projects. That's where packages installed via `pip install` are stored.
* Occasionally, antivirus software is known to *incorrectly* flag and delete some model files, which will result in Easy Diffusion re-downloading `pytorch_model.bin`. This *incorrect deletion* affects other Stable Diffusion UIs as well, like Invoke AI - https://itch.io/post/7509488
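
The "fetch only if it doesn't exist on your PC" behavior described in the list above can be sketched roughly as follows. This is a minimal illustration and not the project's actual code: the `ensure_model` function and the `fetch` callback are hypothetical names introduced here, and the real downloader may work differently.

```python
from pathlib import Path
from typing import Callable


def ensure_model(path: Path, url: str, fetch: Callable[[str], bytes]) -> bool:
    """Download `url` to `path` only when the file is not already on disk.

    Returns True if a download happened, False if the existing file was reused.
    `fetch` is a hypothetical callback that returns the file contents for a URL.
    """
    if path.exists():
        return False  # file already present: no network request is made
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_bytes(fetch(url))
    return True
```

Under this sketch, a model file deleted by antivirus software would simply be downloaded again on the next call, which matches the re-download of `pytorch_model.bin` mentioned above.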

|

||||

@ -3,6 +3,6 @@ Hi there,

|

||||

What you have downloaded is meant for the developers of this project, not for users.

|

||||

|

||||

If you only want to use the Stable Diffusion UI, you've downloaded the wrong file.

|

||||

Please download and follow the instructions at https://github.com/easydiffusion/easydiffusion#installation

|

||||

Please download and follow the instructions at https://github.com/cmdr2/stable-diffusion-ui#installation

|

||||

|

||||

Thanks

|

||||

143

README.md

@ -1,51 +1,40 @@

|

||||

# Easy Diffusion 3.0

|

||||

### The easiest way to install and use [Stable Diffusion](https://github.com/CompVis/stable-diffusion) on your computer.

|

||||

# Stable Diffusion UI

|

||||

### The easiest way to install and use [Stable Diffusion](https://github.com/CompVis/stable-diffusion) on your own computer. Does not require technical knowledge, does not require pre-installed software. 1-click install, powerful features, friendly community.

|

||||

|

||||

Does not require technical knowledge, does not require pre-installed software. 1-click install, powerful features, friendly community.

|

||||

[Discord server](https://discord.com/invite/u9yhsFmEkB) (for support, and development discussion) | [Troubleshooting guide for common problems](https://github.com/cmdr2/stable-diffusion-ui/wiki/Troubleshooting)

|

||||

|

||||

[Installation guide](#installation) | [Troubleshooting guide](https://github.com/easydiffusion/easydiffusion/wiki/Troubleshooting) | <sub>[Discord server](https://discord.com/invite/u9yhsFmEkB)</sub> <sup>(for support queries, and development discussions)</sup>

|

||||

### New:

|

||||

Experimental support for Stable Diffusion 2.0 is available in beta!

|

||||

|

||||

---

|

||||

|

||||

----

|

||||

|

||||

|

||||

# Installation

|

||||

# Step 1: Download and prepare the installer

|

||||

Click the download button for your operating system:

|

||||

|

||||

<p float="left">

|

||||

<a href="https://github.com/cmdr2/stable-diffusion-ui/releases/latest/download/Easy-Diffusion-Linux.zip"><img src="https://github.com/cmdr2/stable-diffusion-ui/raw/main/media/download-linux.png" width="200" /></a>

|

||||

<a href="https://github.com/cmdr2/stable-diffusion-ui/releases/latest/download/Easy-Diffusion-Mac.zip"><img src="https://github.com/cmdr2/stable-diffusion-ui/raw/main/media/download-mac.png" width="200" /></a>

|

||||

<a href="https://github.com/cmdr2/stable-diffusion-ui/releases/latest/download/Easy-Diffusion-Windows.exe"><img src="https://github.com/cmdr2/stable-diffusion-ui/raw/main/media/download-win.png" width="200" /></a>

|

||||

<a href="https://github.com/cmdr2/stable-diffusion-ui/releases/download/v2.4.13/stable-diffusion-ui-windows.zip"><img src="https://github.com/cmdr2/stable-diffusion-ui/raw/main/media/download-win.png" width="200" /></a>

|

||||

<a href="https://github.com/cmdr2/stable-diffusion-ui/releases/download/v2.4.13/stable-diffusion-ui-linux.zip"><img src="https://github.com/cmdr2/stable-diffusion-ui/raw/main/media/download-linux.png" width="200" /></a>

|

||||

</p>

|

||||

|

||||

**Hardware requirements:**
- **Windows:** NVIDIA graphics card¹ (minimum 2 GB RAM), or run on your CPU.
- **Linux:** NVIDIA¹ or AMD² graphics card (minimum 2 GB RAM), or run on your CPU.
- **Mac:** M1 or M2, or run on your CPU.
- Minimum 8 GB of system RAM.
- At least 25 GB of space on the hard disk.
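
As a quick way to check the disk space requirement before installing, something like the following could be used. This is only a sketch: the function name is made up for illustration, and treating "25 GB" as 25 × 2³⁰ bytes is an assumption, since the list above does not say which unit convention is meant.

```python
import shutil


def has_enough_disk_space(path: str, required_gb: float = 25.0) -> bool:
    """Return True if the drive containing `path` has at least `required_gb` GB free.

    Assumption: 1 GB is counted as 2**30 bytes.
    """
    free_bytes = shutil.disk_usage(path).free
    return free_bytes >= required_gb * 1024**3
```

For example, `has_enough_disk_space(".")` checks the drive that holds the current directory.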

|

||||

## On Windows:

|

||||

1. Unzip/extract the folder `stable-diffusion-ui` which should be in your downloads folder, unless you changed your default downloads destination.

|

||||

2. Move the `stable-diffusion-ui` folder to your `C:` drive (or any other drive like `D:`, at the top root level). `C:\stable-diffusion-ui` or `D:\stable-diffusion-ui` as examples. This will avoid a common problem with Windows (file path length limits).

|

||||

## On Linux:

|

||||

1. Unzip/extract the folder `stable-diffusion-ui` which should be in your downloads folder, unless you changed your default downloads destination.

|

||||

2. Open a terminal window, and navigate to the `stable-diffusion-ui` directory.

|

||||

|

||||

¹) [CUDA Compute capability](https://en.wikipedia.org/wiki/CUDA#GPUs_supported) level of 3.7 or higher required.

|

||||

|

||||

²) ROCm 5.2 support required.

|

||||

# Step 2: Run the program

|

||||

## On Windows:

|

||||

Double-click `Start Stable Diffusion UI.cmd`.

|

||||

If Windows SmartScreen prevents you from running the program click `More info` and then `Run anyway`.

|

||||

## On Linux:

|

||||

Run `./start.sh` (or `bash start.sh`) in a terminal.

|

||||

|

||||

The installer will take care of whatever is needed. If you face any problems, you can join the friendly [Discord community](https://discord.com/invite/u9yhsFmEkB) and ask for assistance.

|

||||

|

||||

## On Windows:

|

||||

1. Run the downloaded `Easy-Diffusion-Windows.exe` file.

|

||||

2. Run `Easy Diffusion` once the installation finishes. You can also start from your Start Menu, or from your desktop (if you created a shortcut).

|

||||

# Step 3: There is no Step 3. It's that simple!

|

||||

|

||||

If Windows SmartScreen prevents you from running the program click `More info` and then `Run anyway`.

|

||||

|

||||

**Tip:** On Windows 10, please install at the top level in your drive, e.g. `C:\EasyDiffusion` or `D:\EasyDiffusion`. This will avoid a common problem with Windows 10 (file path length limits).
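
To see why a top-level folder helps, the sketch below compares a full path against the traditional Windows limit of 260 characters (`MAX_PATH`, which includes the terminating null character). The function name and the example paths are hypothetical, and systems with long-path support enabled may not be subject to this limit.

```python
from pathlib import PureWindowsPath

# Traditional Windows path limit; the count includes the terminating null character.
MAX_PATH = 260


def fits_in_max_path(install_dir: str, relative_path: str) -> bool:
    """Return True if install_dir joined with relative_path stays under MAX_PATH."""
    full_path = PureWindowsPath(install_dir) / relative_path
    return len(str(full_path)) < MAX_PATH
```

A short install directory such as `C:\EasyDiffusion` leaves far more room for deeply nested package files than a folder buried inside a user profile.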

|

||||

|

||||

## On Linux/Mac:

|

||||

1. Unzip/extract the folder `easy-diffusion` which should be in your downloads folder, unless you changed your default downloads destination.

|

||||

2. Open a terminal window, and navigate to the `easy-diffusion` directory.

|

||||

3. Run `./start.sh` (or `bash start.sh`) in a terminal.

|

||||

|

||||

# To remove/uninstall:

|

||||

Just delete the `EasyDiffusion` folder to uninstall all the downloaded packages.

|

||||

**To Uninstall:** Just delete the `stable-diffusion-ui` folder to uninstall all the downloaded packages.

|

||||

|

||||

----

|

||||

|

||||

@ -55,48 +44,33 @@ Just delete the `EasyDiffusion` folder to uninstall all the downloaded packages.

|

||||

### User experience

|

||||

- **Hassle-free installation**: Does not require technical knowledge, does not require pre-installed software. Just download and run!

|

||||

- **Clutter-free UI**: A friendly and simple UI, while providing a lot of powerful features.

|

||||

- **Task Queue**: Queue up all your ideas, without waiting for the current task to finish.

|

||||

- **Intelligent Model Detection**: Automatically figures out the YAML config file to use for the chosen model (via a models database).

|

||||

- **Live Preview**: See the image as the AI is drawing it.

|

||||

- **Image Modifiers**: A library of *modifier tags* like *"Realistic"*, *"Pencil Sketch"*, *"ArtStation"* etc. Experiment with various styles quickly.

|

||||

- **Multiple Prompts File**: Queue multiple prompts by entering one prompt per line, or by running a text file.

|

||||

- **Save generated images to disk**: Save your images to your PC!

|

||||

- **UI Themes**: Customize the program to your liking.

|

||||

- **Searchable models dropdown**: organize your models into sub-folders, and search through them in the UI.

|

||||

|

||||

### Powerful image generation

|

||||

- **Supports**: "*Text to Image*", "*Image to Image*" and "*InPainting*"

|

||||

- **ControlNet**: For advanced control over the image, e.g. by setting the pose or drawing the outline for the AI to fill in.

|

||||

- **16 Samplers**: `PLMS`, `DDIM`, `DEIS`, `Heun`, `Euler`, `Euler Ancestral`, `DPM2`, `DPM2 Ancestral`, `LMS`, `DPM Solver`, `DPM++ 2s Ancestral`, `DPM++ 2m`, `DPM++ 2m SDE`, `DPM++ SDE`, `DDPM`, `UniPC`.

|

||||

- **Stable Diffusion XL and 2.1**: Generate higher-quality images using the latest Stable Diffusion XL models.

|

||||

- **Textual Inversion Embeddings**: For guiding the AI strongly towards a particular concept.

|

||||

### Image generation

|

||||

- **Supports**: "*Text to Image*" and "*Image to Image*".

|

||||

- **In-Painting**: Specify areas of your image to paint into.

|

||||

- **Simple Drawing Tool**: Draw basic images to guide the AI, without needing an external drawing program.

|

||||

- **Face Correction (GFPGAN)**

|

||||

- **Upscaling (RealESRGAN)**

|

||||

- **Loopback**: Use the output image as the input image for the next image task.

|

||||

- **Loopback**: Use the output image as the input image for the next img2img task.

|

||||

- **Negative Prompt**: Specify aspects of the image to *remove*.

|

||||

- **Attention/Emphasis**: `+` in the prompt increases the model's attention to enclosed words, and `-` decreases it. E.g. `apple++ falling from a tree`.

|

||||

- **Weighted Prompts**: Use weights for specific words in your prompt to change their importance, e.g. `(red)2.4 (dragon)1.2`.

|

||||

- **Attention/Emphasis**: () in the prompt increases the model's attention to enclosed words, and [] decreases it.

|

||||

- **Weighted Prompts**: Use weights for specific words in your prompt to change their importance, e.g. `red:2.4 dragon:1.2`.

|

||||

- **Prompt Matrix**: Quickly create multiple variations of your prompt, e.g. `a photograph of an astronaut riding a horse | illustration | cinematic lighting`.

|

||||

- **Prompt Set**: Quickly create multiple variations of your prompt, e.g. `a photograph of an astronaut on the {moon,earth}`

|

||||

- **Lots of Samplers**: ddim, plms, heun, euler, euler_a, dpm2, dpm2_a, lms.

|

||||

- **1-click Upscale/Face Correction**: Upscale or correct an image after it has been generated.

|

||||

- **Make Similar Images**: Click to generate multiple variations of a generated image.

|

||||

- **NSFW Setting**: A setting in the UI to control *NSFW content*.

|

||||

- **JPEG/PNG/WEBP output**: Multiple file formats.

|

||||

- **JPEG/PNG output**: Multiple file formats.
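
The "Prompt Set" syntax in the list above (`{moon,earth}`) can be understood as expanding one prompt into several. The sketch below shows one plausible reading, in which every `{a,b,...}` group is replaced by each of its options and all combinations are produced. It is an assumption for illustration only: `expand_prompt_set` is a hypothetical name, and the UI's actual parser may handle nesting, whitespace, or escaping differently.

```python
import itertools
import re

# Matches a single, non-nested {option1,option2,...} group.
_SET_PATTERN = re.compile(r"\{([^{}]*)\}")


def expand_prompt_set(prompt: str) -> list[str]:
    """Expand every {a,b,...} group into all combinations of its options."""
    # With one capture group, re.split alternates literal text (even indices)
    # and group contents (odd indices).
    parts = _SET_PATTERN.split(prompt)
    choices = []
    for index, part in enumerate(parts):
        if index % 2 == 0:
            choices.append([part])
        else:
            choices.append([option.strip() for option in part.split(",")])
    return ["".join(combo) for combo in itertools.product(*choices)]
```

With this reading, `a photograph of an astronaut on the {moon,earth}` yields two prompts, one ending in `moon` and one ending in `earth`.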

|

||||

|

||||

### Advanced features

|

||||

- **Custom Models**: Use your own `.ckpt` or `.safetensors` file, by placing it inside the `models/stable-diffusion` folder!

|

||||

- **Stable Diffusion XL and 2.1 support**

|

||||

- **Merge Models**

|

||||

- **Stable Diffusion 2.0 support (experimental)**: available in beta channel.

|

||||

- **Use custom VAE models**

|

||||

- **Textual Inversion Embeddings**

|

||||

- **ControlNet**

|

||||

- **Use custom GFPGAN models**

|

||||

- **UI Plugins**: Choose from a growing list of [community-generated UI plugins](https://github.com/easydiffusion/easydiffusion/wiki/UI-Plugins), or write your own plugin to add features to the project!

|

||||

- **Use pre-trained Hypernetworks**

|

||||

- **UI Plugins**: Choose from a growing list of [community-generated UI plugins](https://github.com/cmdr2/stable-diffusion-ui/wiki/UI-Plugins), or write your own plugin to add features to the project!

|

||||

|

||||

### Performance and security

|

||||

- **Fast**: Creates a 512x512 image with euler_a in 5 seconds, on an NVIDIA 3060 12GB.

|

||||

- **Low Memory Usage**: Create 512x512 images with less than 2 GB of GPU RAM, and 768x768 images with less than 3 GB of GPU RAM!

|

||||

- **Low Memory Usage**: Creates 512x512 images with less than 4GB of GPU RAM!

|

||||

- **Use CPU setting**: If you don't have a compatible graphics card, but still want to run it on your CPU.

|

||||

- **Multi-GPU support**: Automatically spreads your tasks across multiple GPUs (if available), for faster performance!

|

||||

- **Auto scan for malicious models**: Uses picklescan to prevent malicious models.

|

||||

@ -104,36 +78,55 @@ Just delete the `EasyDiffusion` folder to uninstall all the downloaded packages.

|

||||

- **Auto-updater**: Gets you the latest improvements and bug-fixes to a rapidly evolving project.

|

||||

- **Developer Console**: A developer-mode for those who want to modify their Stable Diffusion code, and edit the conda environment.

|

||||

|

||||

### Usability:

|

||||

- **Live Preview**: See the image as the AI is drawing it.

|

||||

- **Task Queue**: Queue up all your ideas, without waiting for the current task to finish.

|

||||

- **Image Modifiers**: A library of *modifier tags* like *"Realistic"*, *"Pencil Sketch"*, *"ArtStation"* etc. Experiment with various styles quickly.

|

||||

- **Multiple Prompts File**: Queue multiple prompts by entering one prompt per line, or by running a text file.

|

||||

- **Save generated images to disk**: Save your images to your PC!

|

||||

- **UI Themes**: Customize the program to your liking.
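
The "one prompt per line" behavior of the Multiple Prompts File feature, combined with the Task Queue, might look roughly like this. This is a hedged sketch: `queue_prompts` is a hypothetical helper, and skipping blank lines is an assumption rather than documented behavior.

```python
from collections import deque


def queue_prompts(text: str) -> deque:
    """Build a first-in, first-out queue with one task per non-empty line."""
    return deque(line.strip() for line in text.splitlines() if line.strip())
```

Tasks would then be taken from the front of the queue with `popleft()`, so prompts run in the order they were entered.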

|

||||

|

||||

**(and a lot more)**

|

||||

|

||||

----

|

||||

|

||||

## Easy for new users, powerful features for advanced users:

|

||||

|

||||

## Easy for new users:

|

||||

|

||||

|

||||

## Powerful features for advanced users:

|

||||

|

||||

|

||||

## Live Preview

|

||||

Useful for judging (and stopping) an image quickly, without waiting for it to finish rendering.

|

||||

|

||||

|

||||

|

||||

## Task Queue

|

||||

|

||||

|

||||

|

||||

# System Requirements

|

||||

1. Windows 10/11, or Linux. Experimental support for Mac is coming soon.

|

||||

2. An NVIDIA graphics card, preferably with 4GB or more of VRAM. If you don't have a compatible graphics card, it'll automatically run in the slower "CPU Mode".

|

||||

3. Minimum 8 GB of RAM and 25GB of disk space.

|

||||

|

||||

You don't need to install or struggle with Python, Anaconda, Docker etc. The installer will take care of whatever is needed.

|

||||

|

||||

----

|

||||

|

||||

# How to use?

|

||||

Please refer to our [guide](https://github.com/easydiffusion/easydiffusion/wiki/How-to-Use) to understand how to use the features in this UI.

|

||||

Please refer to our [guide](https://github.com/cmdr2/stable-diffusion-ui/wiki/How-to-Use) to understand how to use the features in this UI.

|

||||

|

||||

# Bug reports and code contributions welcome

|

||||

If there are any problems or suggestions, please feel free to ask on the [discord server](https://discord.com/invite/u9yhsFmEkB) or [file an issue](https://github.com/easydiffusion/easydiffusion/issues).

|

||||

If there are any problems or suggestions, please feel free to ask on the [discord server](https://discord.com/invite/u9yhsFmEkB) or [file an issue](https://github.com/cmdr2/stable-diffusion-ui/issues).

|

||||

|

||||

We could really use help on these aspects (click to view tasks that need your help):

|

||||

* [User Interface](https://github.com/users/cmdr2/projects/1/views/1)

|

||||

* [Engine](https://github.com/users/cmdr2/projects/3/views/1)

|

||||

* [Installer](https://github.com/users/cmdr2/projects/4/views/1)

|

||||

* [Documentation](https://github.com/users/cmdr2/projects/5/views/1)

|

||||

|

||||

If you have any code contributions in mind, please feel free to say Hi to us on the [discord server](https://discord.com/invite/u9yhsFmEkB). We use the Discord server for development-related discussions, and for helping users.

|

||||

|

||||

# Credits

|

||||

* Stable Diffusion: https://github.com/Stability-AI/stablediffusion

|

||||

* CodeFormer: https://github.com/sczhou/CodeFormer (license: https://github.com/sczhou/CodeFormer/blob/master/LICENSE)

|

||||

* GFPGAN: https://github.com/TencentARC/GFPGAN

|

||||

* RealESRGAN: https://github.com/xinntao/Real-ESRGAN

|

||||

* k-diffusion: https://github.com/crowsonkb/k-diffusion

|

||||

* Code contributors and artists on the cmdr2 UI: https://github.com/cmdr2/stable-diffusion-ui and Discord (https://discord.com/invite/u9yhsFmEkB)

|

||||

* Lots of contributors on the internet

|

||||

|

||||

# Disclaimer

|

||||

The authors of this project are not responsible for any content generated using this interface.

|

||||

|

||||

|

||||

@ -2,7 +2,7 @@

|

||||

|

||||

@echo "Hi there, what you are running is meant for the developers of this project, not for users." & echo.

|

||||

@echo "If you only want to use the Stable Diffusion UI, you've downloaded the wrong file."

|

||||

@echo "Please download and follow the instructions at https://github.com/easydiffusion/easydiffusion#installation" & echo.

|

||||

@echo "Please download and follow the instructions at https://github.com/cmdr2/stable-diffusion-ui#installation" & echo.

|

||||

@echo "If you are actually a developer of this project, please type Y and press enter" & echo.

|

||||

|

||||

set /p answer=Are you a developer of this project (Y/N)?

|

||||

@ -15,7 +15,6 @@ mkdir dist\win\stable-diffusion-ui\scripts

|

||||

|

||||

copy scripts\on_env_start.bat dist\win\stable-diffusion-ui\scripts\

|

||||

copy scripts\bootstrap.bat dist\win\stable-diffusion-ui\scripts\

|

||||

copy scripts\config.yaml.sample dist\win\stable-diffusion-ui\scripts\config.yaml

|

||||

copy "scripts\Start Stable Diffusion UI.cmd" dist\win\stable-diffusion-ui\

|

||||

copy LICENSE dist\win\stable-diffusion-ui\

|

||||

copy "CreativeML Open RAIL-M License" dist\win\stable-diffusion-ui\

|

||||

|

||||

3

build.sh

@ -2,7 +2,7 @@

|

||||

|

||||

printf "Hi there, what you are running is meant for the developers of this project, not for users.\n\n"

|

||||

printf "If you only want to use the Stable Diffusion UI, you've downloaded the wrong file.\n"

|

||||

printf "Please download and follow the instructions at https://github.com/easydiffusion/easydiffusion#installation \n\n"

|

||||

printf "Please download and follow the instructions at https://github.com/cmdr2/stable-diffusion-ui#installation\n\n"

|

||||

printf "If you are actually a developer of this project, please type Y and press enter\n\n"

|

||||

|

||||

read -p "Are you a developer of this project (Y/N) " yn

|

||||

@ -29,7 +29,6 @@ mkdir -p dist/linux-mac/stable-diffusion-ui/scripts

|

||||

cp scripts/on_env_start.sh dist/linux-mac/stable-diffusion-ui/scripts/

|

||||

cp scripts/bootstrap.sh dist/linux-mac/stable-diffusion-ui/scripts/

|

||||

cp scripts/functions.sh dist/linux-mac/stable-diffusion-ui/scripts/

|

||||

cp scripts/config.yaml.sample dist/linux-mac/stable-diffusion-ui/scripts/config.yaml

|

||||

cp scripts/start.sh dist/linux-mac/stable-diffusion-ui/

|

||||

cp LICENSE dist/linux-mac/stable-diffusion-ui/

|

||||

cp "CreativeML Open RAIL-M License" dist/linux-mac/stable-diffusion-ui/

|

||||

|

||||

|

Binary image file; size before the change: 12 KiB.

@ -1,9 +0,0 @@

|

||||

{

|

||||

"scripts": {

|

||||

"prettier-fix": "npx prettier --write \"./**/*.js\"",

|

||||

"prettier-check": "npx prettier --check \"./**/*.js\""

|

||||

},

|

||||

"devDependencies": {

|

||||

"prettier": "^1.19.1"

|

||||

}

|

||||

}

|

||||

BIN

patch.patch

@ -2,8 +2,6 @@

|

||||

|

||||

echo "Opening Stable Diffusion UI - Developer Console.." & echo.

|

||||

|

||||

cd /d %~dp0

|

||||

|

||||

set PATH=C:\Windows\System32;%PATH%

|

||||

|

||||

@rem set legacy and new installer's PATH, if they exist

|

||||

@ -23,8 +21,6 @@ call git --version

|

||||

call where conda

|

||||

call conda --version

|

||||

|

||||

echo.

|

||||

echo COMSPEC=%COMSPEC%

|

||||

echo.

|

||||

|

||||

@rem activate the legacy environment (if present) and set PYTHONPATH

|

||||

@ -41,10 +37,6 @@ call python --version

|

||||

|

||||

echo PYTHONPATH=%PYTHONPATH%

|

||||

|

||||

if exist "%cd%\profile" (

|

||||

set HF_HOME=%cd%\profile\.cache\huggingface

|

||||

)

|

||||

|

||||

@rem done

|

||||

echo.

|

||||

|

||||

|

||||

@@ -1,45 +1,27 @@
@echo off

cd /d %~dp0
echo Install dir: %~dp0

set PATH=C:\Windows\System32;%PATH%
set PYTHONHOME=

if exist "on_sd_start.bat" (
echo ================================================================================
echo.
echo !!!! WARNING !!!!
echo.
echo It looks like you're trying to run the installation script from a source code
echo download. This will not work.
echo.
echo Recommended: Please close this window and download the installer from
echo https://stable-diffusion-ui.github.io/docs/installation/
echo.
echo ================================================================================
echo.
pause
exit /b
)

@rem set legacy installer's PATH, if it exists
if exist "installer" set PATH=%cd%\installer;%cd%\installer\Library\bin;%cd%\installer\Scripts;%cd%\installer\Library\usr\bin;%PATH%

@rem Setup the packages required for the installer
call scripts\bootstrap.bat

@rem set new installer's PATH, if it downloaded any packages
if exist "installer_files\env" set PATH=%cd%\installer_files\env;%cd%\installer_files\env\Library\bin;%cd%\installer_files\env\Scripts;%cd%\installer_files\Library\usr\bin;%PATH%

set PYTHONPATH=%cd%\installer;%cd%\installer_files\env

@rem Test the core requirements
@rem Test the bootstrap
call where git
call git --version

call where conda
call conda --version
echo .
echo COMSPEC=%COMSPEC%

@rem Download the rest of the installer and UI
call scripts\on_env_start.bat

@pause

@@ -1,5 +1,4 @@
@echo off
setlocal enabledelayedexpansion

@rem This script will install git and conda (if not found on the PATH variable)
@rem using micromamba (an 8mb static-linked single-file binary, conda replacement).

@@ -11,7 +10,7 @@ setlocal enabledelayedexpansion
set MAMBA_ROOT_PREFIX=%cd%\installer_files\mamba
set INSTALL_ENV_DIR=%cd%\installer_files\env
set LEGACY_INSTALL_ENV_DIR=%cd%\installer
set MICROMAMBA_DOWNLOAD_URL=https://github.com/easydiffusion/easydiffusion/releases/download/v1.1/micromamba.exe
set MICROMAMBA_DOWNLOAD_URL=https://github.com/cmdr2/stable-diffusion-ui/releases/download/v1.1/micromamba.exe
set umamba_exists=F

set OLD_APPDATA=%APPDATA%

@@ -29,10 +28,10 @@ if not exist "%LEGACY_INSTALL_ENV_DIR%\etc\profile.d\conda.sh" (
)

call git --version >.tmp1 2>.tmp2
if "!ERRORLEVEL!" NEQ "0" set PACKAGES_TO_INSTALL=%PACKAGES_TO_INSTALL% git
if "%ERRORLEVEL%" NEQ "0" set PACKAGES_TO_INSTALL=%PACKAGES_TO_INSTALL% git

call "%MAMBA_ROOT_PREFIX%\micromamba.exe" --version >.tmp1 2>.tmp2
if "!ERRORLEVEL!" EQU "0" set umamba_exists=T
if "%ERRORLEVEL%" EQU "0" set umamba_exists=T

@rem (if necessary) install git and conda into a contained environment
if "%PACKAGES_TO_INSTALL%" NEQ "" (

@@ -43,7 +42,7 @@ if "%PACKAGES_TO_INSTALL%" NEQ "" (
mkdir "%MAMBA_ROOT_PREFIX%"
call curl -Lk "%MICROMAMBA_DOWNLOAD_URL%" > "%MAMBA_ROOT_PREFIX%\micromamba.exe"

if "!ERRORLEVEL!" NEQ "0" (
if "%ERRORLEVEL%" NEQ "0" (
echo "There was a problem downloading micromamba. Cannot continue."
pause
exit /b

@@ -21,16 +21,9 @@ OS_ARCH=$(uname -m)
case "${OS_ARCH}" in
x86_64*) OS_ARCH="64";;
arm64*) OS_ARCH="arm64";;
aarch64*) OS_ARCH="arm64";;
*) echo "Unknown system architecture: $OS_ARCH! This script runs only on x86_64 or arm64" && exit
esac
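
The `case` statement in this hunk maps the raw `uname -m` string onto the short architecture tag used by the rest of the script. A minimal sketch of the same mapping as a standalone function, so it can be tried without running the installer. The function name `normalize_arch` is hypothetical and not part of the script; unlike the original, it returns a non-zero status for unknown input instead of calling a bare `exit`.

```shell
#!/bin/sh
# Hypothetical helper (not in the installer): map a `uname -m` string to the
# architecture tag the script uses ("64" or "arm64").
normalize_arch() {
    case "$1" in
        x86_64*)  echo "64" ;;
        arm64*)   echo "arm64" ;;
        aarch64*) echo "arm64" ;;
        *)
            # Same message as the script, but sent to stderr with a failure status.
            echo "Unknown system architecture: $1! This script runs only on x86_64 or arm64" >&2
            return 1
            ;;
    esac
}

# Show the mapping for the three architecture names the hunk handles.
for arch in x86_64 arm64 aarch64; do
    echo "$arch -> $(normalize_arch "$arch")"
done
```

Returning a status rather than exiting lets a caller decide how to react, for example `OS_ARCH=$(normalize_arch "$(uname -m)") || exit 1`.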

if ! which curl; then fail "'curl' not found. Please install curl."; fi
if ! which tar; then fail "'tar' not found. Please install tar."; fi
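
The two checks above stop the script early when a required tool is missing. A minimal sketch of the same idea as a reusable function, under stated assumptions: `require_tool` is a hypothetical name, the `fail` defined here is only a stand-in because the script's own `fail` helper is outside this excerpt, and `command -v` (the POSIX lookup) is swapped in for the `which` call the script uses.

```shell
#!/bin/sh
# Stand-in for the installer's `fail` helper (its real definition is not shown
# in this excerpt); this version prints the message and returns non-zero.
fail() {
    echo "ERROR: $1" >&2
    return 1
}

# Hypothetical helper: succeed only if the named command can be found on PATH.
# Uses `command -v` instead of `which`, which is an assumption of this sketch.
require_tool() {
    if ! command -v "$1" >/dev/null 2>&1; then
        fail "'$1' not found. Please install $1."
    fi
}

# Example: `sh` is expected to exist wherever this sketch runs.
require_tool sh && echo "sh found"
```

In the installer's setting the calls would be `require_tool curl` and `require_tool tar`, each followed by whatever abort behavior the real `fail` provides.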
