mirror of

https://github.com/nushell/nushell.git

synced 2025-08-09 02:14:56 +02:00

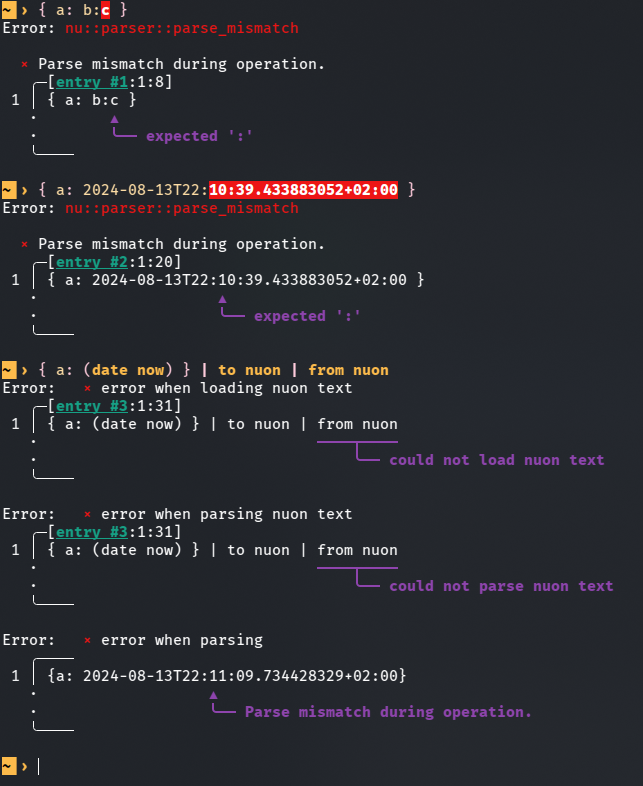

Fix parsing record values containing colons (#13413)

This PR is an attempt to fix #8257 and fix #10985 (which is duplicate-ish) # Description The parser currently doesn't know how to deal with colons appearing while lexing whitespace-terminated tokens specifying a record value. Most notably, this means you can't use datetime literals in record value position (and as a consequence, `| to nuon | from nuon` roundtrips can fail), but it also means that bare words containing colons cause a non-useful error message.  `parser::parse_record` calls `lex::lex` with the `:` colon character in the `special_tokens` argument. This allows colons to terminate record keys, but as a side effect, it also causes colons to terminate record *values*. I added a new function `lex::lex_n_tokens`, which allows the caller to drive the lexing process more explicitly, and used it in `parser::parse_record` to let colons terminate record keys while not giving them special treatment when appearing in record values. This PR description previously said: *Another approach suggested in one of the issues was to support an additional datetime literal format that doesn't require colons. I like that that wouldn't require new `lex::lex_internal` behaviour, but an advantage of my approach is that it also newly allows for string record values given as bare words containing colons. I think this eliminates another possible source of confusion.* It was determined that this is undesirable, and in the current state of this PR, bare word record values with colons are rejected explicitly. The better error message is still a win. # User-Facing Changes In addition to the above, this PR also disables the use of "special" (non-item) tokens in record key and value position, and the use of a single bare `:` as a record key. Examples of behaviour *before* this PR: ```nu { a: b } # Valid, same as { 'a': 'b' } { a: b:c } # Error: expected ':' { a: 2024-08-13T22:11:09 } # Error: expected ':' { :: 1 } # Valid, same as { ':': 1 } { ;: 1 } # Valid, same as { ';': 1 } { a: || } # Valid, same as { 'a': '||' } ``` Examples of behaviour *after* this PR: ```nu { a: b } # (Unchanged) Valid, same as { 'a': 'b' } { a: b:c } # Error: colon in bare word specifying record value { a: 2024-08-13T22:11:09 } # Valid, same as { a: (2024-08-13T22:11:09) } { :: 1 } # Error: colon in bare word specifying record key { ;: 1 } # Error: expected item in record key position { a: || } # Error: expected item in record value position ``` # Tests + Formatting I added tests, but I'm not sure if they're sufficient and in the right place. # After Submitting I don't think documentation changes are needed for this, but please let me know if you disagree.

This commit is contained in:

@ -447,14 +447,61 @@ pub fn lex_signature(

|

||||

special_tokens: &[u8],

|

||||

skip_comment: bool,

|

||||

) -> (Vec<Token>, Option<ParseError>) {

|

||||

lex_internal(

|

||||

let mut state = LexState {

|

||||

input,

|

||||

output: Vec::new(),

|

||||

error: None,

|

||||

span_offset,

|

||||

};

|

||||

lex_internal(

|

||||

&mut state,

|

||||

additional_whitespace,

|

||||

special_tokens,

|

||||

skip_comment,

|

||||

true,

|

||||

)

|

||||

None,

|

||||

);

|

||||

(state.output, state.error)

|

||||

}

|

||||

|

||||

#[derive(Debug)]

|

||||

pub struct LexState<'a> {

|

||||

pub input: &'a [u8],

|

||||

pub output: Vec<Token>,

|

||||

pub error: Option<ParseError>,

|

||||

pub span_offset: usize,

|

||||

}

|

||||

|

||||

/// Lex until the output is `max_tokens` longer than before the call, or until the input is exhausted.

|

||||

/// The return value indicates how many tokens the call added to / removed from the output.

|

||||

///

|

||||

/// The behaviour here is non-obvious when `additional_whitespace` doesn't include newline:

|

||||

/// If you pass a `state` where the last token in the output is an Eol, this might *remove* tokens.

|

||||

pub fn lex_n_tokens(

|

||||

state: &mut LexState,

|

||||

additional_whitespace: &[u8],

|

||||

special_tokens: &[u8],

|

||||

skip_comment: bool,

|

||||

max_tokens: usize,

|

||||

) -> isize {

|

||||

let n_tokens = state.output.len();

|

||||

lex_internal(

|

||||

state,

|

||||

additional_whitespace,

|

||||

special_tokens,

|

||||

skip_comment,

|

||||

false,

|

||||

Some(max_tokens),

|

||||

);

|

||||

// If this lex_internal call reached the end of the input, there may now be fewer tokens

|

||||

// in the output than before.

|

||||

let tokens_n_diff = (state.output.len() as isize) - (n_tokens as isize);

|

||||

let next_offset = state.output.last().map(|token| token.span.end);

|

||||

if let Some(next_offset) = next_offset {

|

||||

state.input = &state.input[next_offset - state.span_offset..];

|

||||

state.span_offset = next_offset;

|

||||

}

|

||||

tokens_n_diff

|

||||

}

|

||||

|

||||

pub fn lex(

|

||||

@ -464,33 +511,43 @@ pub fn lex(

|

||||

special_tokens: &[u8],

|

||||

skip_comment: bool,

|

||||

) -> (Vec<Token>, Option<ParseError>) {

|

||||

lex_internal(

|

||||

let mut state = LexState {

|

||||

input,

|

||||

output: Vec::new(),

|

||||

error: None,

|

||||

span_offset,

|

||||

};

|

||||

lex_internal(

|

||||

&mut state,

|

||||

additional_whitespace,

|

||||

special_tokens,

|

||||

skip_comment,

|

||||

false,

|

||||

)

|

||||

None,

|

||||

);

|

||||

(state.output, state.error)

|

||||

}

|

||||

|

||||

fn lex_internal(

|

||||

input: &[u8],

|

||||

span_offset: usize,

|

||||

state: &mut LexState,

|

||||

additional_whitespace: &[u8],

|

||||

special_tokens: &[u8],

|

||||

skip_comment: bool,

|

||||

// within signatures we want to treat `<` and `>` specially

|

||||

in_signature: bool,

|

||||

) -> (Vec<Token>, Option<ParseError>) {

|

||||

let mut error = None;

|

||||

max_tokens: Option<usize>,

|

||||

) {

|

||||

let initial_output_len = state.output.len();

|

||||

|

||||

let mut curr_offset = 0;

|

||||

|

||||

let mut output = vec![];

|

||||

let mut is_complete = true;

|

||||

|

||||

while let Some(c) = input.get(curr_offset) {

|

||||

while let Some(c) = state.input.get(curr_offset) {

|

||||

if max_tokens

|

||||

.is_some_and(|max_tokens| state.output.len() >= initial_output_len + max_tokens)

|

||||

{

|

||||

break;

|

||||

}

|

||||

let c = *c;

|

||||

if c == b'|' {

|

||||

// If the next character is `|`, it's either `|` or `||`.

|

||||

@ -499,13 +556,13 @@ fn lex_internal(

|

||||

curr_offset += 1;

|

||||

|

||||

// If the next character is `|`, we're looking at a `||`.

|

||||

if let Some(c) = input.get(curr_offset) {

|

||||

if let Some(c) = state.input.get(curr_offset) {

|

||||

if *c == b'|' {

|

||||

let idx = curr_offset;

|

||||

curr_offset += 1;

|

||||

output.push(Token::new(

|

||||

state.output.push(Token::new(

|

||||

TokenContents::PipePipe,

|

||||

Span::new(span_offset + prev_idx, span_offset + idx + 1),

|

||||

Span::new(state.span_offset + prev_idx, state.span_offset + idx + 1),

|

||||

));

|

||||

continue;

|

||||

}

|

||||

@ -515,12 +572,12 @@ fn lex_internal(

|

||||

|

||||

// Before we push, check to see if the previous character was a newline.

|

||||

// If so, then this is a continuation of the previous line

|

||||

if let Some(prev) = output.last_mut() {

|

||||

if let Some(prev) = state.output.last_mut() {

|

||||

match prev.contents {

|

||||

TokenContents::Eol => {

|

||||

*prev = Token::new(

|

||||

TokenContents::Pipe,

|

||||

Span::new(span_offset + idx, span_offset + idx + 1),

|

||||

Span::new(state.span_offset + idx, state.span_offset + idx + 1),

|

||||

);

|

||||

// And this is a continuation of the previous line if previous line is a

|

||||

// comment line (combined with EOL + Comment)

|

||||

@ -528,12 +585,12 @@ fn lex_internal(

|

||||

// Initially, the last one token is TokenContents::Pipe, we don't need to

|

||||

// check it, so the beginning offset is 2.

|

||||

let mut offset = 2;

|

||||

while output.len() > offset {

|

||||

let index = output.len() - offset;

|

||||

if output[index].contents == TokenContents::Comment

|

||||

&& output[index - 1].contents == TokenContents::Eol

|

||||

while state.output.len() > offset {

|

||||

let index = state.output.len() - offset;

|

||||

if state.output[index].contents == TokenContents::Comment

|

||||

&& state.output[index - 1].contents == TokenContents::Eol

|

||||

{

|

||||

output.remove(index - 1);

|

||||

state.output.remove(index - 1);

|

||||

offset += 1;

|

||||

} else {

|

||||

break;

|

||||

@ -541,16 +598,16 @@ fn lex_internal(

|

||||

}

|

||||

}

|

||||

_ => {

|

||||

output.push(Token::new(

|

||||

state.output.push(Token::new(

|

||||

TokenContents::Pipe,

|

||||

Span::new(span_offset + idx, span_offset + idx + 1),

|

||||

Span::new(state.span_offset + idx, state.span_offset + idx + 1),

|

||||

));

|

||||

}

|

||||

}

|

||||

} else {

|

||||

output.push(Token::new(

|

||||

state.output.push(Token::new(

|

||||

TokenContents::Pipe,

|

||||

Span::new(span_offset + idx, span_offset + idx + 1),

|

||||

Span::new(state.span_offset + idx, state.span_offset + idx + 1),

|

||||

));

|

||||

}

|

||||

|

||||

@ -558,17 +615,17 @@ fn lex_internal(

|

||||

} else if c == b';' {

|

||||

// If the next character is a `;`, we're looking at a semicolon token.

|

||||

|

||||

if !is_complete && error.is_none() {

|

||||

error = Some(ParseError::ExtraTokens(Span::new(

|

||||

if !is_complete && state.error.is_none() {

|

||||

state.error = Some(ParseError::ExtraTokens(Span::new(

|

||||

curr_offset,

|

||||

curr_offset + 1,

|

||||

)));

|

||||

}

|

||||

let idx = curr_offset;

|

||||

curr_offset += 1;

|

||||

output.push(Token::new(

|

||||

state.output.push(Token::new(

|

||||

TokenContents::Semicolon,

|

||||

Span::new(span_offset + idx, span_offset + idx + 1),

|

||||

Span::new(state.span_offset + idx, state.span_offset + idx + 1),

|

||||

));

|

||||

} else if c == b'\r' {

|

||||

// Ignore a stand-alone carriage return

|

||||

@ -578,9 +635,9 @@ fn lex_internal(

|

||||

let idx = curr_offset;

|

||||

curr_offset += 1;

|

||||

if !additional_whitespace.contains(&c) {

|

||||

output.push(Token::new(

|

||||

state.output.push(Token::new(

|

||||

TokenContents::Eol,

|

||||

Span::new(span_offset + idx, span_offset + idx + 1),

|

||||

Span::new(state.span_offset + idx, state.span_offset + idx + 1),

|

||||

));

|

||||

}

|

||||

} else if c == b'#' {

|

||||

@ -588,12 +645,12 @@ fn lex_internal(

|

||||

// comment. The comment continues until the next newline.

|

||||

let mut start = curr_offset;

|

||||

|

||||

while let Some(input) = input.get(curr_offset) {

|

||||

while let Some(input) = state.input.get(curr_offset) {

|

||||

if *input == b'\n' {

|

||||

if !skip_comment {

|

||||

output.push(Token::new(

|

||||

state.output.push(Token::new(

|

||||

TokenContents::Comment,

|

||||

Span::new(span_offset + start, span_offset + curr_offset),

|

||||

Span::new(state.span_offset + start, state.span_offset + curr_offset),

|

||||

));

|

||||

}

|

||||

start = curr_offset;

|

||||

@ -604,9 +661,9 @@ fn lex_internal(

|

||||

}

|

||||

}

|

||||

if start != curr_offset && !skip_comment {

|

||||

output.push(Token::new(

|

||||

state.output.push(Token::new(

|

||||

TokenContents::Comment,

|

||||

Span::new(span_offset + start, span_offset + curr_offset),

|

||||

Span::new(state.span_offset + start, state.span_offset + curr_offset),

|

||||

));

|

||||

}

|

||||

} else if c == b' ' || c == b'\t' || additional_whitespace.contains(&c) {

|

||||

@ -614,21 +671,20 @@ fn lex_internal(

|

||||

curr_offset += 1;

|

||||

} else {

|

||||

let (token, err) = lex_item(

|

||||

input,

|

||||

state.input,

|

||||

&mut curr_offset,

|

||||

span_offset,

|

||||

state.span_offset,

|

||||

additional_whitespace,

|

||||

special_tokens,

|

||||

in_signature,

|

||||

);

|

||||

if error.is_none() {

|

||||

error = err;

|

||||

if state.error.is_none() {

|

||||

state.error = err;

|

||||

}

|

||||

is_complete = true;

|

||||

output.push(token);

|

||||

state.output.push(token);

|

||||

}

|

||||

}

|

||||

(output, error)

|

||||

}

|

||||

|

||||

/// True if this the start of a redirection. Does not match `>>` or `>|` forms.

|

||||

|

||||

@ -16,7 +16,7 @@ pub use flatten::{

|

||||

flatten_block, flatten_expression, flatten_pipeline, flatten_pipeline_element, FlatShape,

|

||||

};

|

||||

pub use known_external::KnownExternal;

|

||||

pub use lex::{lex, lex_signature, Token, TokenContents};

|

||||

pub use lex::{lex, lex_n_tokens, lex_signature, LexState, Token, TokenContents};

|

||||

pub use lite_parser::{lite_parse, LiteBlock, LiteCommand};

|

||||

pub use nu_protocol::parser_path::*;

|

||||

pub use parse_keywords::*;

|

||||

|

||||

@ -1,5 +1,5 @@

|

||||

use crate::{

|

||||

lex::{is_assignment_operator, lex, lex_signature},

|

||||

lex::{is_assignment_operator, lex, lex_n_tokens, lex_signature, LexState},

|

||||

lite_parser::{lite_parse, LiteCommand, LitePipeline, LiteRedirection, LiteRedirectionTarget},

|

||||

parse_keywords::*,

|

||||

parse_patterns::parse_pattern,

|

||||

@ -5650,6 +5650,49 @@ pub fn parse_builtin_commands(

|

||||

}

|

||||

}

|

||||

|

||||

fn check_record_key_or_value(

|

||||

working_set: &StateWorkingSet,

|

||||

expr: &Expression,

|

||||

position: &str,

|

||||

) -> Option<ParseError> {

|

||||

let bareword_error = |string_value: &Expression| {

|

||||

working_set

|

||||

.get_span_contents(string_value.span)

|

||||

.iter()

|

||||

.find_position(|b| **b == b':')

|

||||

.map(|(i, _)| {

|

||||

let colon_position = i + string_value.span.start;

|

||||

ParseError::InvalidLiteral(

|

||||

"colon".to_string(),

|

||||

format!("bare word specifying record {}", position),

|

||||

Span::new(colon_position, colon_position + 1),

|

||||

)

|

||||

})

|

||||

};

|

||||

let value_span = working_set.get_span_contents(expr.span);

|

||||

match expr.expr {

|

||||

Expr::String(_) => {

|

||||

if ![b'"', b'\'', b'`'].contains(&value_span[0]) {

|

||||

bareword_error(expr)

|

||||

} else {

|

||||

None

|

||||

}

|

||||

}

|

||||

Expr::StringInterpolation(ref expressions) => {

|

||||

if value_span[0] != b'$' {

|

||||

expressions

|

||||

.iter()

|

||||

.filter(|expr| matches!(expr.expr, Expr::String(_)))

|

||||

.filter_map(bareword_error)

|

||||

.next()

|

||||

} else {

|

||||

None

|

||||

}

|

||||

}

|

||||

_ => None,

|

||||

}

|

||||

}

|

||||

|

||||

pub fn parse_record(working_set: &mut StateWorkingSet, span: Span) -> Expression {

|

||||

let bytes = working_set.get_span_contents(span);

|

||||

|

||||

@ -5670,9 +5713,32 @@ pub fn parse_record(working_set: &mut StateWorkingSet, span: Span) -> Expression

|

||||

}

|

||||

|

||||

let inner_span = Span::new(start, end);

|

||||

let source = working_set.get_span_contents(inner_span);

|

||||

|

||||

let (tokens, err) = lex(source, start, &[b'\n', b'\r', b','], &[b':'], true);

|

||||

let mut lex_state = LexState {

|

||||

input: working_set.get_span_contents(inner_span),

|

||||

output: Vec::new(),

|

||||

error: None,

|

||||

span_offset: start,

|

||||

};

|

||||

let mut lex_n = |additional_whitespace, special_tokens, max_tokens| {

|

||||

lex_n_tokens(

|

||||

&mut lex_state,

|

||||

additional_whitespace,

|

||||

special_tokens,

|

||||

true,

|

||||

max_tokens,

|

||||

)

|

||||

};

|

||||

loop {

|

||||

if lex_n(&[b'\n', b'\r', b','], &[b':'], 2) < 2 {

|

||||

break;

|

||||

};

|

||||

if lex_n(&[b'\n', b'\r', b','], &[], 1) < 1 {

|

||||

break;

|

||||

};

|

||||

}

|

||||

let (tokens, err) = (lex_state.output, lex_state.error);

|

||||

|

||||

if let Some(err) = err {

|

||||

working_set.error(err);

|

||||

}

|

||||

@ -5716,7 +5782,22 @@ pub fn parse_record(working_set: &mut StateWorkingSet, span: Span) -> Expression

|

||||

));

|

||||

} else {

|

||||

// Normal key-value pair

|

||||

let field = parse_value(working_set, curr_span, &SyntaxShape::Any);

|

||||

let field_token = &tokens[idx];

|

||||

let field = if field_token.contents != TokenContents::Item {

|

||||

working_set.error(ParseError::Expected(

|

||||

"item in record key position",

|

||||

Span::new(field_token.span.start, field_token.span.end),

|

||||

));

|

||||

garbage(working_set, curr_span)

|

||||

} else {

|

||||

let field = parse_value(working_set, curr_span, &SyntaxShape::Any);

|

||||

if let Some(error) = check_record_key_or_value(working_set, &field, "key") {

|

||||

working_set.error(error);

|

||||

garbage(working_set, field.span)

|

||||

} else {

|

||||

field

|

||||

}

|

||||

};

|

||||

|

||||

idx += 1;

|

||||

if idx == tokens.len() {

|

||||

@ -5761,7 +5842,26 @@ pub fn parse_record(working_set: &mut StateWorkingSet, span: Span) -> Expression

|

||||

));

|

||||

break;

|

||||

}

|

||||

let value = parse_value(working_set, tokens[idx].span, &SyntaxShape::Any);

|

||||

|

||||

let value_token = &tokens[idx];

|

||||

let value = if value_token.contents != TokenContents::Item {

|

||||

working_set.error(ParseError::Expected(

|

||||

"item in record value position",

|

||||

Span::new(value_token.span.start, value_token.span.end),

|

||||

));

|

||||

garbage(

|

||||

working_set,

|

||||

Span::new(value_token.span.start, value_token.span.end),

|

||||

)

|

||||

} else {

|

||||

let value = parse_value(working_set, tokens[idx].span, &SyntaxShape::Any);

|

||||

if let Some(parse_error) = check_record_key_or_value(working_set, &value, "value") {

|

||||

working_set.error(parse_error);

|

||||

garbage(working_set, value.span)

|

||||

} else {

|

||||

value

|

||||

}

|

||||

};

|

||||

idx += 1;

|

||||

|

||||

if let Some(field) = field.as_string() {

|

||||

|

||||

@ -1,4 +1,4 @@

|

||||

use nu_parser::{lex, lex_signature, Token, TokenContents};

|

||||

use nu_parser::{lex, lex_n_tokens, lex_signature, LexState, Token, TokenContents};

|

||||

use nu_protocol::{ParseError, Span};

|

||||

|

||||

#[test]

|

||||

@ -281,3 +281,26 @@ fn lex_comments() {

|

||||

}

|

||||

);

|

||||

}

|

||||

|

||||

#[test]

|

||||

fn lex_manually() {

|

||||

let file = b"'a'\n#comment\n#comment again\n| continue";

|

||||

let mut lex_state = LexState {

|

||||

input: file,

|

||||

output: Vec::new(),

|

||||

error: None,

|

||||

span_offset: 10,

|

||||

};

|

||||

assert_eq!(lex_n_tokens(&mut lex_state, &[], &[], false, 1), 1);

|

||||

assert_eq!(lex_state.output.len(), 1);

|

||||

assert_eq!(lex_n_tokens(&mut lex_state, &[], &[], false, 5), 5);

|

||||

assert_eq!(lex_state.output.len(), 6);

|

||||

// Next token is the pipe.

|

||||

// This shortens the output because it exhausts the input before it can

|

||||

// compensate for the EOL tokens lost to the line continuation

|

||||

assert_eq!(lex_n_tokens(&mut lex_state, &[], &[], false, 1), -1);

|

||||

assert_eq!(lex_state.output.len(), 5);

|

||||

assert_eq!(file.len(), lex_state.span_offset - 10);

|

||||

let last_span = lex_state.output.last().unwrap().span;

|

||||

assert_eq!(&file[last_span.start - 10..last_span.end - 10], b"continue");

|

||||

}

|

||||

|

||||

@ -2478,3 +2478,56 @@ mod operator {

|

||||

);

|

||||

}

|

||||

}

|

||||

|

||||

mod record {

|

||||

use super::*;

|

||||

|

||||

use nu_protocol::ast::RecordItem;

|

||||

|

||||

#[rstest]

|

||||

#[case(b"{ :: x }", "Invalid literal")] // Key is bare colon

|

||||

#[case(b"{ a: x:y }", "Invalid literal")] // Value is bare word with colon

|

||||

#[case(b"{ a: x('y'):z }", "Invalid literal")] // Value is bare string interpolation with colon

|

||||

#[case(b"{ ;: x }", "Parse mismatch during operation.")] // Key is a non-item token

|

||||

#[case(b"{ a: || }", "Parse mismatch during operation.")] // Value is a non-item token

|

||||

fn refuse_confusing_record(#[case] expr: &[u8], #[case] error: &str) {

|

||||

dbg!(String::from_utf8_lossy(expr));

|

||||

let engine_state = EngineState::new();

|

||||

let mut working_set = StateWorkingSet::new(&engine_state);

|

||||

parse(&mut working_set, None, expr, false);

|

||||

assert_eq!(

|

||||

working_set.parse_errors.first().map(|e| e.to_string()),

|

||||

Some(error.to_string())

|

||||

);

|

||||

}

|

||||

|

||||

#[rstest]

|

||||

#[case(b"{ a: 2024-07-23T22:54:54.532100627+02:00 b:xy }")]

|

||||

fn parse_datetime_in_record(#[case] expr: &[u8]) {

|

||||

dbg!(String::from_utf8_lossy(expr));

|

||||

let engine_state = EngineState::new();

|

||||

let mut working_set = StateWorkingSet::new(&engine_state);

|

||||

let block = parse(&mut working_set, None, expr, false);

|

||||

assert!(working_set.parse_errors.first().is_none());

|

||||

let pipeline_el_expr = &block

|

||||

.pipelines

|

||||

.first()

|

||||

.unwrap()

|

||||

.elements

|

||||

.first()

|

||||

.unwrap()

|

||||

.expr

|

||||

.expr;

|

||||

dbg!(pipeline_el_expr);

|

||||

match pipeline_el_expr {

|

||||

Expr::FullCellPath(v) => match &v.head.expr {

|

||||

Expr::Record(fields) => assert!(matches!(

|

||||

fields[0],

|

||||

RecordItem::Pair(_, Expression { ty: Type::Date, .. })

|

||||

)),

|

||||

_ => panic!("Expected record head"),

|

||||

},

|

||||

_ => panic!("Expected full cell path"),

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

Reference in New Issue

Block a user