Go from the ill-defined `enable/disable` pairs to `.use_...` builders

This removes the ambiguity about when the underlying enhancements are
enabled. They are now enabled when entering `Reedline::read_line` and
disabled when exiting it.
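To illustrate the builder idea, here is a minimal sketch. `LineEditor`, its `log` field, and the placeholder read loop are hypothetical stand-ins and not the real `reedline` types; the point is only that the builder stores a preference, while the enhancement is switched on and off inside `read_line`.

```rust
/// Hypothetical stand-in for a line editor; not the real `Reedline` type.
#[derive(Default)]
struct LineEditor {
    bracketed_paste: bool,
    // Records what would be sent to the terminal, so the sketch is observable.
    log: Vec<&'static str>,
}

impl LineEditor {
    /// Builder-style setter: only stores the preference, touches nothing yet.
    fn use_bracketed_paste(mut self, enable: bool) -> Self {
        self.bracketed_paste = enable;
        self
    }

    /// The enhancement is active only for the duration of this call.
    fn read_line(&mut self) -> String {
        if self.bracketed_paste {
            self.log.push("enable bracketed paste");
        }
        // Placeholder for the real read loop.
        let line = String::from("input");
        if self.bracketed_paste {
            self.log.push("disable bracketed paste");
        }
        line
    }
}
```

With this shape there is no separate `enable`/`disable` pair that a caller could forget to balance.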

Furthermore allow setting `$env.config.use_kitty_protocol` to have an

effect when toggling during runtime. Previously it was only enabled when

receiving a value from `config.nu`. I kept the warning code there to not

pollute the log. We could move it into the REPL loop if desired.

Not sure if we should actively block the enabling of `bracketed_paste`
on Windows. We need to test what happens; if it just doesn't do anything,
we could remove the `cfg!` switch. At least under WSL2, Windows Terminal
already supports bracketed paste. `target_os = windows` is a bad
predictor for `conhost.exe`.

Depends on https://github.com/nushell/reedline/pull/659

(pointing to personal fork)

Closes https://github.com/nushell/nushell/issues/10982

Supersedes https://github.com/nushell/nushell/pull/10998

# Description

Fixes: #11033

Sorry for the issue; it's a regression introduced by #10456, and this PR
fixes it.

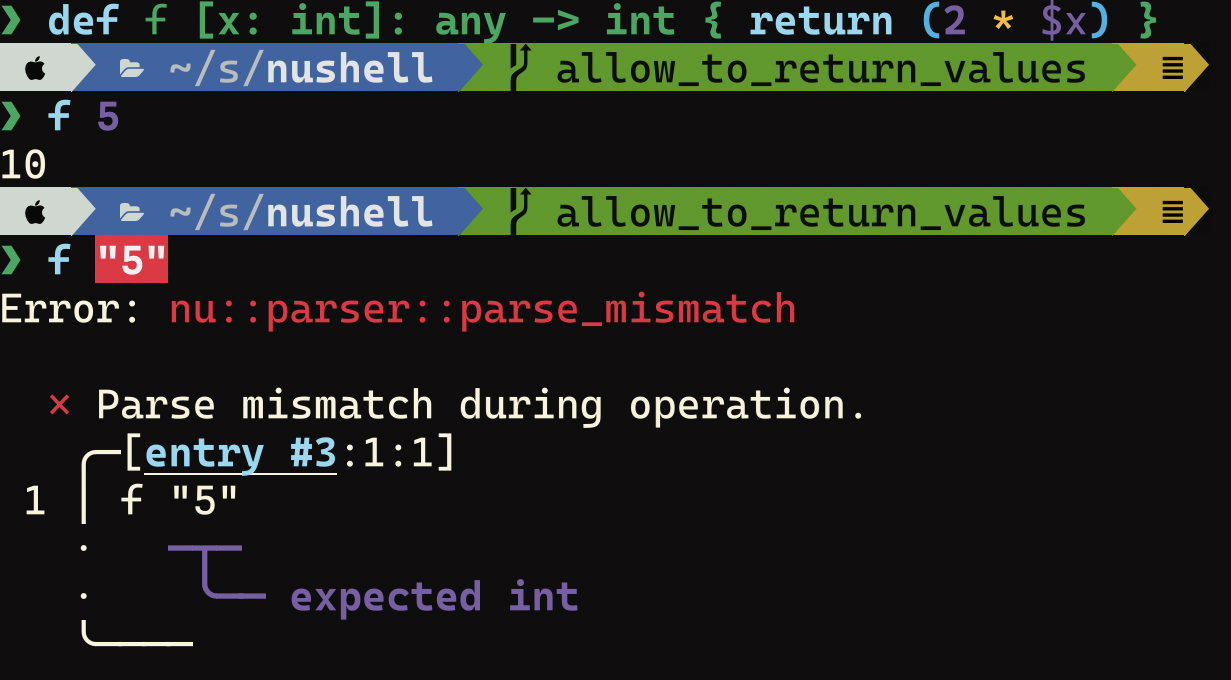

About the change: create a new field named `type_annotated` for
`Arg::Flag` and `Arg::Signature` instead of the `arg_explicit_type`
variable.

When we meet a type in `TypeMode`, we set the `type_annotated` field of
the argument to true, so we can easily tell whether the argument has an
annotated type.

We have three different `rustix` minor versions. For two of them with

their previous patch versions a security advisory has been released:

https://github.com/advisories/GHSA-c827-hfw6-qwvm

Bump the patch versions, to resolve the potential vulnerability.

At the moment we haven't assessed the potential impact on nushell,

whether we are exposed to the underlying issue.

The `color-backtrace` crate either does not seem to handle the terminal
modes well, or it operates at a point where unwinding has not yet
reached the backup disabling of the terminal raw mode in
`reedline::Reedline`'s `Drop` implementation.

This reverts commit d838871063.

Fixes #11029

This pull request fixes the tests for the `cargo.exe check` command. The

tests were failing due to `cargo check -h` sometimes reporting `cargo.exe`

as the binary and thus not containing `cargo check` in the output.

The fix involves using the `Command` module from the `std::process`

library to run the command and comparing its output to the expected

output. No changes were made to the codebase itself.

# Description

Refactors the `flatten` command to remove a bunch of cloning. This was

done by passing ownership of the `Value` to `flat_value`, removing the

lifetime on `TableInside`, and using `Vec<Record>` in `FlattenedRows`

instead of a pair of `Vec` of columns and values.

For the quick benchmark below, it seems to be twice as fast now:

```nushell

let data = ls crates | where type == dir | each { ls $'($in.name)/**/*' }

timeit { for x in 0..1000 { $data | flatten } }

```

This took 550ms on v0.86.0 and only 230ms on this PR.

But considering that

```nushell

timeit { for x in 0..1000 { $data } }

```

takes 200ms on both versions, then the difference for `flatten` itself

is really 250ms vs 30ms -- 8x faster.

Provides support for reading Polars structs. This allows opening of

supported files (jsonl, parquet, etc) that contain rows with structured

data.

The following attached json lines

file ([receipts.jsonl.gz](https://github.com/nushell/nushell/files/13311476/receipts.jsonl.gz))

contains a customer column with structured data. This json lines file

can now be loaded via `dfr open` and will render as follows:

<img width="525" alt="Screenshot 2023-11-09 at 10 09 18"

src="https://github.com/nushell/nushell/assets/56345/4b26ccdc-c230-43ae-a8d5-8af88a1b72de">

This also addresses some cleanup of date handling and utilizing

timezones where provided.

This pull request only addresses reading data from polars structs. I

will address converting nushell data to polars structs in a future

request as this change is large enough as it is.

---------

Co-authored-by: Jack Wright <jack.wright@disqo.com>

# Description

Generally elide a bunch of unnecessary clones. This covers both no longer
cloning the whole input data in places where we only need to read it, and
some minor places where we previously cloned.

As part of that, we can make the overwriting with `keep-all` and

`keep-last` in place so the items don't need to be removed and repushed

to the record.

# Benchmarking

```nu

timeit { scope commands | transpose -r }

```

Before: ~24 ms, now just ~5 ms.

# User-Facing Changes

This can change the order of appearance in the transposed record with
`--keep-last`/`--keep-all`. The order is now determined by the first
appearance, not the last appearance, in the incoming columns.

This mirrors the behavior when not passed `keep-all` or `keep-last`.

# Tests + Formatting

Sadly the `transpose` command is so far undertested for more complex

operations.

# Description

I've had a few PRs fail clippy in CI after they pass `toolkit check pr`

because the clippy settings are different. This brings `toolkit.nu` into

alignment with CI and leaves notes to prompt future synchronization.

# User-Facing Changes

N/A

# Tests + Formatting

`cargo` output elided:

```

❯ toolkit check pr

running `toolkit fmt`

running `toolkit clippy`

running `toolkit clippy` on tests

running `toolkit clippy` on plugins

running `toolkit test`

running `toolkit test stdlib`

- 🟢 `toolkit fmt`

- 🟢 `toolkit clippy`

- 🟢 `toolkit test`

- 🟢 `toolkit test stdlib`

```

# After Submitting

N/A

# Description

This PR refactors `drop columns` and fixes issues #10902 and #6846.

Tables with "holes" are now handled consistently, although still

somewhat awkwardly. That is, the columns in the first row are used to

determine which columns to drop, meaning that the columns displayed all

the way to the right by `table` may not be the columns actually being

dropped. For example, `[{a: 1}, {b: 2}] | drop column` will drop column

`a` instead of `b`. Before, this would give a list of empty records.
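The "first row decides" rule can be sketched as follows. This is an illustration only, not the actual `drop column` implementation: `Row` and `drop_last_columns` are hypothetical names, and records are modelled as plain ordered pairs with integer values.

```rust
/// A record is modelled here as an ordered list of (column, value) pairs.
type Row = Vec<(String, i64)>;

/// Drops the last `n` columns, where "last" is decided by the first row only.
fn drop_last_columns(rows: Vec<Row>, n: usize) -> Vec<Row> {
    if rows.is_empty() {
        return rows;
    }
    // Column names to drop are taken from the tail of the first row.
    let first = &rows[0];
    let keep = first.len().saturating_sub(n);
    let dropped: Vec<String> = first[keep..].iter().map(|(c, _)| c.clone()).collect();

    // Remove those names from every row; rows lacking them are left untouched.
    rows.into_iter()
        .map(|row| {
            row.into_iter()
                .filter(|(c, _)| !dropped.contains(c))
                .collect::<Row>()
        })
        .collect()
}
```

For the two-row table from the example, the first row only has column `a`, so `a` is the name that gets removed, and the second row keeps `b`.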

# User-Facing Changes

`drop columns` can now take records as input.

# Description

Compatible with `Vec::truncate` and `indexmap::IndexMap::truncate`

Found useful in #10903 for `drop column`

# Tests + Formatting

Doctest with the relevant edge-cases

# Description

Add an extension trait `IgnoreCaseExt` to nu_utils which adds some case

insensitivity helpers, and use them throughout nu to improve the

handling of case insensitivity. Proper case folding is done via unicase,

which is already a dependency via mime_guess from nu-command.

In actuality a lot of code still does `to_lowercase`, because unicase

only provides immediate comparison and doesn't expose a `to_folded_case`

yet. And since we do a lot of `contains`/`starts_with`/`ends_with`, it's

not sufficient to just have `eq_ignore_case`. But if we get access in

the future, this makes us ready to use it with a change in one place.

Plus, it's clearer what the purpose is at the call site to call

`to_folded_case` instead of `to_lowercase` if it's exclusively for the

purpose of case insensitive comparison, even if it just does

`to_lowercase` still.
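A rough sketch of what such an extension trait can look like, using only the standard library. The trait and method names follow the description above, but the bodies are an assumption: plain `to_lowercase` stands in for proper case folding, and `contains_ignore_case` is a hypothetical helper added for illustration.

```rust
/// Sketch of an extension trait in the spirit of `IgnoreCaseExt`.
trait IgnoreCaseExt {
    /// Returns a form of the string suitable for case-insensitive matching.
    fn to_folded_case(&self) -> String;
    /// Compares two strings while ignoring case.
    fn eq_ignore_case(&self, other: &str) -> bool;
}

impl IgnoreCaseExt for str {
    fn to_folded_case(&self) -> String {
        // Assumption: lowercasing stands in for real Unicode case folding.
        self.to_lowercase()
    }

    fn eq_ignore_case(&self, other: &str) -> bool {
        self.to_folded_case() == other.to_folded_case()
    }
}

/// Hypothetical helper: substring search that ignores case.
fn contains_ignore_case(haystack: &str, needle: &str) -> bool {
    haystack
        .to_folded_case()
        .contains(needle.to_folded_case().as_str())
}
```

Because every call site goes through `to_folded_case`, swapping in a real folding routine later only requires changing that one method body.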

# User-Facing Changes

- Some commands that were supposed to be case insensitive remained only

insensitive to ASCII case (a-z), and now are case insensitive w.r.t.

non-ASCII characters as well.

# Tests + Formatting

- 🟢 `toolkit fmt`

- 🟢 `toolkit clippy`

- 🟢 `toolkit test`

- 🟢 `toolkit test stdlib`

---------

Co-authored-by: Stefan Holderbach <sholderbach@users.noreply.github.com>

# Description

Replaces the only usage of `Value::follow_cell_path_not_from_user_input`

with some `Record::get`s.

# User-Facing Changes

Breaking change for `nu-protocol`, since

`Value::follow_cell_path_not_from_user_input` was deleted.

Nushell now reports errors when environment conversions are not

closures.

# Description

This is pretty complementary/orthogonal to @IanManske's changes to

`Value` cellpath accessors in:

- #10925

- to a lesser extent #10926

## Steps

- Use `R.remove` in `Value.remove_data_at_cell_path`

- Pretty sound after #10875 (tests mentioned in commit message have been

removed by that)

- Update `did_you_mean` helper to use iterator

- Change `Value::columns` to return iterator

- This is not a place of honor

- Use `Record::get` in `Value::get_data_by_key`

# User-Facing Changes

None intentional, potential edge cases on duplicated columns could

change (considered undefined behavior)

# Tests + Formatting

(-)

Matches the general behavior of `Vec::drain` or

`indexmap::IndexMap::drain`:

- Drop the remaining elements (implementing the unstable `keep_rest()`

would not be compatible with something like `indexmap`)

- No `AsRef<[T]>` or `Drain::as_slice()` behavior as this would make

layout assumptions.

- `Drain: DoubleEndedIterator`

Found useful in #10903
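For reference, the `Vec::drain` behavior being mirrored can be shown with the standard library alone. `drain_demo` is just an illustrative name; it does not touch the new `Record` method.

```rust
/// Shows two properties of `Vec::drain`: it is double-ended, and dropping
/// the iterator early still removes the whole drained range.
fn drain_demo() -> (Option<i32>, Vec<i32>) {
    let mut items = vec![1, 2, 3, 4, 5];

    // Drain the range 1..4 but only look at its last element.
    let mut drain = items.drain(1..4);
    let last = drain.next_back();

    // The remaining drained elements (2 and 3) are dropped here.
    drop(drain);

    (last, items)
}
```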

# Description

Based on the work and discussion in #10844, this PR adds the `exec`

command for Windows. This is done by simply spawning a

`std::process::Command` and then immediately exiting via

`std::process::exit` once the child process is finished. The child

process's exit code is passed to `exit`.
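The approach can be sketched with the standard library as below. The function names are hypothetical, and falling back to exit code 1 when the child reports no code is an assumption of this sketch, not something stated in the PR.

```rust
use std::process::Command;

/// Maps the child's optional exit code to the code this process exits with.
/// Assumption: a missing code is reported as 1.
fn forwarded_exit_code(code: Option<i32>) -> i32 {
    code.unwrap_or(1)
}

/// Runs `program`, waits for it to finish, then exits with its exit code.
/// Only returns (with the error) if the child could not be started.
fn exec_by_spawning(program: &str, args: &[&str]) -> std::io::Error {
    match Command::new(program).args(args).status() {
        Ok(status) => std::process::exit(forwarded_exit_code(status.code())),
        Err(err) => err,
    }
}
```

Unlike a real Unix `exec`, the parent process stays alive while the child runs; it just never does anything else afterwards.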

# User-Facing Changes

The `exec` command is now available on Windows, and there should be no

change in behaviour for Unix systems.

# Description

Where appropriate, this PR replaces instances of

`Value::get_data_by_key` and `Value::follow_cell_path` with

`Record::get`. This avoids some unnecessary clones and simplifies the

code in some places.

Adds a special error, which is triggered by `alias foo=bar` style

commands. It adds a help string which recommends adding spaces.

Resolves #10958

---------

Co-authored-by: Jakub Žádník <kubouch@gmail.com>

# Description

Our config exists both as a `Config` struct for internal consumption and

as a `Value`. The latter is exposed through `$env.config` and can be

both set and read.

Thus we have a complex bug-prone mechanism, that reads a `Value` and

then tries to plug anything where the value is unrepresentable in

`Config` with the correct state from `Config`.

The parsing involves therefore mutation of the `Value` in a nested

`Record` structure. Previously this was wholly done manually, with

indices.

To enable deletion for example, things had to be iterated over from the

back. Also things were indexed in a bunch of places. This was hard to

read and an invitation for bugs.

With #10876 we can now use `Record::retain_mut` to traverse the records,

modify anything that needs fixing, and drop invalid fields.
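The traversal pattern can be sketched with `Vec::retain_mut` from the standard library, which has the same shape. The `Fields` alias, the key names, and the repair rule are all hypothetical and only serve to show "fix in place or drop" in a single pass.

```rust
/// Stand-in for a config record: ordered (key, value) pairs.
type Fields = Vec<(String, i64)>;

/// Walks the fields once: repairs what can be repaired, drops unknown keys.
fn sanitize(fields: &mut Fields) {
    fields.retain_mut(|(key, value)| match key.as_str() {
        "limit" => {
            // Hypothetical repair rule: clamp negative values to 0.
            if *value < 0 {
                *value = 0;
            }
            true
        }
        "retries" => true,
        // Unknown key: returning false drops the field.
        _ => false,
    });
}
```

There is no index bookkeeping and no need to iterate from the back to make deletion safe.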

# Parts:

- Error messages now consistently use the correct spans pointing to the

problematic value and the paths displayed in some messages are also

aligned with the keys used for lookup.

- Reconstruction of values has been fixed for:

- `table.padding`

- `buffer_editor`

- `hooks.command_not_found`

- `datetime_format` (partial solution)

- Fix validation of `table.padding` input so that an invalid value is not
set (a negative value previously underflowed `usize`, causing `table` to
run forever)

- New proper types for settings. Fully validated enums instead of

strings:

- `config.edit_mode` -> `EditMode`

- Don't fall back to vi-mode on invalid string

- `config.table.mode` -> `TableMode`

- there is still a fall back to `rounded` if given an invalid

`TableMode` as argument to the `nu` binary

- `config.completions.algorithm` -> `CompletionAlgorithm`

- `config.error_style` -> `ErrorStyle`

- don't implicitly fall back to `fancy` when given an invalid value.

- This should also shrink the size of `Config` as instead of 4x24 bytes

those fields now need only 4x1 bytes in `Config`

- Completely removed macros relying on the scope of `Value::into_config`

so we can break it up into smaller parts in the future.

- Factored everything into smaller files with the types and helpers for

particular topics.

- `NuCursorShape` now explicitly expresses the `Inherit` setting.

Conversion to `Option` only happens at the interface to `reedline`.
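To make the "validated enum instead of string" point concrete, here is a small sketch. The variant names and accepted strings are assumptions for illustration and this is not the actual `EditMode` definition; the size comparison relies only on `std::mem::size_of`.

```rust
use std::mem::size_of;
use std::str::FromStr;

/// Illustrative enum; the variants are assumed for this sketch.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum EditMode {
    Emacs,
    Vi,
}

impl FromStr for EditMode {
    type Err = String;

    /// Invalid input is an error instead of a silent fallback.
    fn from_str(s: &str) -> Result<Self, Self::Err> {
        match s {
            "emacs" => Ok(EditMode::Emacs),
            "vi" => Ok(EditMode::Vi),
            other => Err(format!("unknown edit mode: {other}")),
        }
    }
}

/// Returns (size of a `String` field, size of the enum field) in bytes.
fn field_sizes() -> (usize, usize) {
    (size_of::<String>(), size_of::<EditMode>())
}
```

A fieldless enum with a handful of variants fits in one byte, while a `String` stores pointer, length and capacity, which is where the 24 bytes on 64-bit targets come from.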

# Description

Since #10841 the goal is to remove the implementation details of

`Record` outside of core operations.

To this end use Record iterators and map-like accessors in a bunch of

places. In this PR I try to collect the boring cases where I don't

expect any dramatic performance impacts or don't have doubts about the

correctness afterwards

- Use checked record construction in `nu_plugin_example`

- Use `Record::into_iter` in `columns`

- Use `Record` iterators in `headers` cmd

- Use explicit record iterators in `split-by`

- Use `Record::into_iter` in variable completions

- Use `Record::values` iterator in `into sqlite`

- Use `Record::iter_mut` for-loop in `default`

- Change `nu_engine::nonexistent_column` to use iterator

- Use `Record::columns` iter in `nu-cmd-base`

- Use `Record::get_index` in `nu-command/network/http`

- Use `Record.insert()` in `merge`

- Refactor `move` to use encapsulated record API

- Use `Record.insert()` in `explore`

- Use proper `Record` API in `explore`

- Remove defensiveness around record in `explore`

- Use encapsulated record API in more `nu-command`s

# User-Facing Changes

None intentional

# Tests + Formatting

(-)

# Description

This change emits the VS Code-specific ANSI escape sequence
`633;P;Cwd=` when nushell detects that it is running inside VS Code's
terminal. Otherwise the standard OSC 7 is emitted. This also helps
with ctrl+g inside the VS Code terminal.
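A sketch of the selection logic, under stated assumptions: detection via `TERM_PROGRAM=vscode` is my assumption about how the terminal is recognized, the function names are hypothetical, and the path is inserted as-is without the percent-encoding a real implementation would likely need.

```rust
/// Builds the escape sequence that reports the current directory.
fn cwd_sequence(in_vscode: bool, host: &str, path: &str) -> String {
    if in_vscode {
        // VS Code specific: OSC 633 ; P ; Cwd=<path>, terminated by ST.
        format!("\x1b]633;P;Cwd={path}\x1b\\")
    } else {
        // Standard OSC 7 with a file URL, terminated by ST.
        format!("\x1b]7;file://{host}{path}\x1b\\")
    }
}

/// Assumption: VS Code's terminal is detected through `TERM_PROGRAM`.
fn running_in_vscode() -> bool {
    std::env::var("TERM_PROGRAM")
        .map(|v| v == "vscode")
        .unwrap_or(false)
}
```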

closes #10989

/cc @CAD97

# User-Facing Changes

<!-- List of all changes that impact the user experience here. This

helps us keep track of breaking changes. -->

# Tests + Formatting

<!--

Don't forget to add tests that cover your changes.

Make sure you've run and fixed any issues with these commands:

- `cargo fmt --all -- --check` to check standard code formatting (`cargo

fmt --all` applies these changes)

- `cargo clippy --workspace -- -D warnings -D clippy::unwrap_used` to

check that you're using the standard code style

- `cargo test --workspace` to check that all tests pass (on Windows make

sure to [enable developer

mode](https://learn.microsoft.com/en-us/windows/apps/get-started/developer-mode-features-and-debugging))

- `cargo run -- -c "use std testing; testing run-tests --path

crates/nu-std"` to run the tests for the standard library

> **Note**

> from `nushell` you can also use the `toolkit` as follows

> ```bash

> use toolkit.nu # or use an `env_change` hook to activate it

automatically

> toolkit check pr

> ```

-->

# After Submitting

<!-- If your PR had any user-facing changes, update [the

documentation](https://github.com/nushell/nushell.github.io) after the

PR is merged, if necessary. This will help us keep the docs up to date.

-->

# Description

- Simplify `table` record highlight with `.get_mut`

- pretty straightforward

- Use record iterators in `table` abbreviation logic

- This required some rework if we go from guaranteed contiguous arrays to

iterators

- Refactor `nu-table` internals to new record API

# User-Facing Changes

None intended

# Tests + Formatting

(-)

# Description

Rewrite `find` internals with the same principles as in #10927.

Here we can remove an unnecessary lookup across all columns when not
narrowing find to particular columns.

- Change `find` internal fns to use iterators

- Remove unnecessary quadratic lookup in `find`

- Refactor `find` record highlight logic

# User-Facing Changes

Should provide a small speedup when not providing `find --columns`

# Tests + Formatting

(-)

# Description

Changes the `captures` field in `Closure` from a `HashMap` to a `Vec`

and makes `Stack::captures_to_stack` take an owned `Vec` instead of a

borrowed `HashMap`.

This eliminates the conversion to a `Vec` inside `captures_to_stack` and

makes it possible to avoid clones altogether when using an owned

`Closure` (which is the case for most commands). Additionally, using a

`Vec` reduces the size of `Value` by 8 bytes (down to 72).

# User-Facing Changes

Breaking API change for `nu-protocol`.

# Description

This is easy to do with rust-analyzer, but I didn't want to just pump

these all out without feedback.

Part of #10700

# User-Facing Changes

None

# Tests + Formatting

- 🟢 `toolkit fmt`

- 🟢 `toolkit clippy`

- 🟢 `toolkit test`

- 🟢 `toolkit test stdlib`

# After Submitting

N/A

---------

Co-authored-by: Stefan Holderbach <sholderbach@users.noreply.github.com>

# Description

`split-by` only works on a `Record`; the error type was updated to
match and now uses a more specific type. (Two type fixes for the price
of one!)

The `usage` was updated to say "record" as well.

# User-Facing Changes

* Providing the wrong type to `split-by` now gives an error message

with the correct required input type

Previously:

```

❯ ls | get name | split-by type

Error: × unsupported input

╭─[entry #267:1:1]

1 │ ls | get name | split-by type

· ─┬─

· ╰── requires a table with one row for splitting

╰────

```

With this PR:

```

❯ ls | get name | split-by type

Error: nu::shell::type_mismatch

× Type mismatch.

╭─[entry #1:1:1]

1 │ ls | get name | split-by type

· ─┬─

· ╰── requires a record to split

╰────

```

# Tests + Formatting

- 🟢 `toolkit fmt`

- 🟢 `toolkit clippy`

- 🟢 `toolkit test`

- 🟢 `toolkit test stdlib`

# After Submitting

Only generated commands need to be updated

---------

Co-authored-by: Darren Schroeder <343840+fdncred@users.noreply.github.com>

# Description

Limit the test `-p nu-command --test main commands::run_external::redirect_combine`,
which uses `sh`, to run only on `not(Windows)`, as is done for other
tests that assume Unix-like CLI tools; `sh` doesn't exist on Windows.

# User-Facing Changes

None; this is a change to tests only.

# Tests + Formatting

- 🟢 `toolkit fmt`

- 🟢 `toolkit clippy`

- 🟢 `toolkit test`

- 🟢 `toolkit test stdlib`

# Description

@jntrnr discovered that `items` wasn't properly setting the

`eval_block_with_early_return()` block settings. This change fixes that

which allows `echo` to be redirected and therefore pass data through the

pipeline.

Without `echo`

```nushell

❯ { new: york, san: francisco } | items {|key, value| $'($key) ($value)' }

╭─┬─────────────╮

│0│new york │

│1│san francisco│

╰─┴─────────────╯

```

With `echo`

```nushell

❯ { new: york, san: francisco } | items {|key, value| echo $'($key) ($value)' }

╭─┬─────────────╮

│0│new york │

│1│san francisco│

╰─┴─────────────╯

```

# User-Facing Changes

# Tests + Formatting

# After Submitting

# Description

This PR updates the `items` example so that it doesn't use `echo`.

`echo` now works like print unless it's being redirected, so it doesn't

send values through the pipeline anymore like the example showed.

# User-Facing Changes

# Tests + Formatting

# After Submitting

# Description

Just throwing up this PR because color-backtrace seemed to produce more

useful backtraces. Just curious what others think.

Did this:

1. RUST_BACKTRACE=full cargo r

2. ❯ def test01 [] {

let sorted = [storm]

$sorted | range 1.. | zip ($sorted | range ..(-2))

}

3. test01

I like how it shows the code snippet.

# User-Facing Changes

# Tests + Formatting

# After Submitting

# Description

I committed things in my nushell folder that I shouldn't have. oops.

# User-Facing Changes

# Tests + Formatting

# After Submitting

# Description

This PR updates the trash dependency from 3.1.0 to 3.1.2 for better

support on FreeBSD.

closes #10961

# User-Facing Changes

# Tests + Formatting

# After Submitting

# Description

These tests got orphaned and they would be a good place to test behavior

I want to add for #10867

# User-Facing Changes

None

# Tests + Formatting

Tests were updated to account for removed test infrastructure

- 🟢 `toolkit fmt`

- 🟢 `toolkit clippy`

- 🟢 `toolkit test`

- 🟢 `toolkit test stdlib`

# After Submitting

N/A

# Description

The `PluginSignature` type supports extra usage but this was not

available in `plugin_name --help`. It also supports search terms but

these did not appear in `help commands`

The new behavior, shown below, adds the "Extra usage for nu-example-1"
line and the "Search terms:" line.

```

❯ nu-example-1 --help

PluginSignature test 1 for plugin. Returns Value::Nothing

Extra usage for nu-example-1

Search terms: example

Usage:

> nu-example-1 {flags} <a> <b> (opt) ...(rest)

Flags:

-h, --help - Display the help message for this command

-f, --flag - a flag for the signature

-n, --named <String> - named string

Parameters:

a <int>: required integer value

b <string>: required string value

opt <int>: Optional number (optional)

...rest <string>: rest value string

Examples:

running example with an int value and string value

> nu-example-1 3 bb

```

Search terms are also available in `help commands`:

```

❯ help commands | where name == "nu-example-1" | select name search_terms

╭──────────────┬──────────────╮

│ name │ search_terms │

├──────────────┼──────────────┤

│ nu-example-1 │ example │

╰──────────────┴──────────────╯

```

# User-Facing Changes

Users can now see plugin extra usage and search terms

# Tests + Formatting

- 🟢 `toolkit fmt`

- 🟢 `toolkit clippy`

- 🟢 `toolkit test`

- 🟢 `toolkit test stdlib`

# After Submitting

N/A

Added "Use `--help` for more information." to the help of

MissingPositional error

- this PR should close

[#10946](https://github.com/nushell/nushell/issues/10946)

**Before:**

**After:**

<!--

if this PR closes one or more issues, you can automatically link the PR

with

them by using one of the [*linking

keywords*](https://docs.github.com/en/issues/tracking-your-work-with-issues/linking-a-pull-request-to-an-issue#linking-a-pull-request-to-an-issue-using-a-keyword),

e.g.

- this PR should close #xxxx

- fixes #xxxx

you can also mention related issues, PRs or discussions!

-->

# Description

<!--

Thank you for improving Nushell. Please, check our [contributing

guide](../CONTRIBUTING.md) and talk to the core team before making major

changes.

Description of your pull request goes here. **Provide examples and/or

screenshots** if your changes affect the user experience.

-->

# User-Facing Changes

# Tests + Formatting

# After Submitting

---------

Co-authored-by: Denis Zorya <denis.zorya@trafigura.com>

- Replaced `start`/`end` with span.

- Fixed standard library.

- Add `help` option.

- Add a couple more errors for invalid record types.

Resolves #10914

# Description

# User-Facing Changes

- **BREAKING CHANGE:** `error make` now takes in `span` instead of

`start`/`end`:

```Nushell

error make {

msg: "Message"

label: {

text: "Label text"

span: (metadata $var).span

}

}

```

- `error make` now has a `help` argument for custom error help.

# Description

After talking to @CAD97, I decided to change these unwraps to expects.

See the comments. The bigger question is, how did unwrap pass the CI?

# User-Facing Changes

# Tests + Formatting

# After Submitting

# Description

Replaces the `Vec::remove` in `Record::retain_mut` with some swaps which

should eliminate the `O(n^2)` complexity due to repeated shifting of

elements.
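The swap-based idea can be sketched like this. It assumes the record keeps column names and values in two parallel vectors, and `retain_mut_swap` is a hypothetical free function rather than the real method; what it shows is the two-index compaction that avoids shifting the tail on every removal.

```rust
/// Keeps only the pairs for which `keep` returns true, preserving order.
/// Each retained pair is moved at most once, so the pass is linear.
fn retain_mut_swap<F>(cols: &mut Vec<String>, vals: &mut Vec<i64>, mut keep: F)
where
    F: FnMut(&str, &mut i64) -> bool,
{
    debug_assert_eq!(cols.len(), vals.len());

    // `write` is the next slot for a retained pair; `read` scans everything.
    let mut write = 0;
    for read in 0..cols.len() {
        if keep(cols[read].as_str(), &mut vals[read]) {
            cols.swap(write, read);
            vals.swap(write, read);
            write += 1;
        }
    }

    // Everything from `write` onward was rejected.
    cols.truncate(write);
    vals.truncate(write);
}
```

With `Vec::remove`, each rejected element shifts everything after it, which is what makes the naive version quadratic in the worst case.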

Now the `input list` command, when nothing is selected, will return a

null instead of empty string or an empty list.

Resolves #10909.

# User-Facing Changes

`input list` now returns a `null` when nothing is selected.

# Description

Consequences of #10841

This does not yet assume that columns are always

deduplicated; it follows the existing logic here.

- Use saner record API in `nu-engine/src/eval.rs`

- Use checked record construction in `nu-engine/src/scope.rs`

- Use `values` iterator in `nu-engine/src/scope.rs`

- Use `columns` iterator in `nu_engine::get_columns()`

- Start using record API in `value/mod.rs`

- Use `.insert` in `eval_const.rs` Record code

- Record API for `eval_const.rs` table code

# User-Facing Changes

None

# Tests + Formatting

None

# Description

Pretty much all operations/commands in Nushell assume that the column

names/keys in a record and thus also in a table (which consists of a

list of records) are unique.

Access through a string-like cell path should refer to a single column

or key/value pair and our output through `table` will only show the last

mention of a repeated column name.

```nu

[[a a]; [1 2]]

╭─#─┬─a─╮

│ 0 │ 2 │

╰───┴───╯

```

While the record parsing already either errors with the

`ShellError::ColumnDefinedTwice` or silently overwrites the first

occurrence with the second occurrence, the table literal syntax `[[header

columns]; [val1 val2]]` currently still allowed the creation of tables

(and internally records) with more than one entry with the same name.

This is not only confusing, but also breaks some assumptions around how

we can efficiently perform operations, and has in the past led to outright

bugs (e.g. #8431 fixed by #8446).

This PR proposes to make this an error.

After this change another hole which allowed the construction of records

with non-unique column names will be plugged.

## Parts

- Fix `SE::ColumnDefinedTwice` error code

- Remove previous tests permitting duplicate columns

- Deny duplicate column in table literal eval

- Deny duplicate column in const eval

- Deny duplicate column in `from nuon`
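The check behind these parts can be sketched roughly as follows. The function name `find_duplicate_column` and its return shape are hypothetical, chosen only to mirror the "first defined here" / "redefined here" labels of the error; the real code reports spans rather than indices.

```rust
use std::collections::HashMap;

/// Returns the positions of the first repeated column name as
/// `(first_definition, redefinition)`, or `None` if all names are unique.
/// Hypothetical helper for illustration only.
pub fn find_duplicate_column(cols: &[&str]) -> Option<(usize, usize)> {
    let mut seen: HashMap<&str, usize> = HashMap::with_capacity(cols.len());
    for (idx, col) in cols.iter().enumerate() {
        // `insert` hands back the previous index if the name was already seen.
        if let Some(first) = seen.insert(*col, idx) {
            return Some((first, idx));
        }
    }
    None
}
```

A caller would turn `Some((first, second))` into an error such as `ColumnDefinedTwice` instead of building the table.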

# User-Facing Changes

`[[a a]; [1 2]]` will now return an error:

```

Error: nu::shell::column_defined_twice

× Record field or table column used twice

╭─[entry #2:1:1]

1 │ [[a a]; [1 2]]

· ┬ ┬

· │ ╰── field redefined here

· ╰── field first defined here

╰────

```

This may, under rare circumstances, block code from evaluating.

Furthermore this makes some NUON files invalid if they previously

contained tables with repeated column names.

# Tests + Formatting

Added tests for each of the different evaluation paths that materialize

tables.

# Description

This change allows `compact` to also compact things with empty strings,

empty lists, and empty records if the `--empty` switch is used. Let's

add a quality-of-life improvement here to just compact all this mess. If

this is a bad idea, please cite examples demonstrating why.

```

❯ [[name position]; [Francis Lead] [Igor TechLead] [Aya null]] | compact position

╭#┬─name──┬position╮

│0│Francis│Lead │

│1│Igor │TechLead│

╰─┴───────┴────────╯

❯ [[name position]; [Francis Lead] [Igor TechLead] [Aya ""]] | compact position --empty

╭#┬─name──┬position╮

│0│Francis│Lead │

│1│Igor │TechLead│

╰─┴───────┴────────╯

❯ [1, null, 2, "", 3, [], 4, {}, 5] | compact

╭─┬─────────────────╮

│0│ 1│

│1│ 2│

│2│ │

│3│ 3│

│4│[list 0 items] │

│5│ 4│

│6│{record 0 fields}│

│7│ 5│

╰─┴─────────────────╯

❯ [1, null, 2, "", 3, [], 4, {}, 5] | compact --empty

╭─┬─╮

│0│1│

│1│2│

│2│3│

│3│4│

│4│5│

╰─┴─╯

```
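The rule shown in the examples above can be sketched as a small predicate. The `Value` enum here is a hypothetical, pared-down stand-in for Nushell's real `Value`, and `should_drop` is an illustrative name, not the command's actual code.

```rust
/// Hypothetical, reduced value type; the real `Value` has many more cases.
#[derive(Debug, Clone, PartialEq)]
pub enum Value {
    Nothing,
    Int(i64),
    String(String),
    List(Vec<Value>),
    Record(Vec<(String, Value)>),
}

/// Decide whether `compact` should drop a value.
/// `null` is always dropped; empty strings, lists and records are dropped
/// only when the `--empty` switch is set.
pub fn should_drop(value: &Value, compact_empties: bool) -> bool {
    match value {
        Value::Nothing => true,
        Value::String(s) => compact_empties && s.is_empty(),
        Value::List(items) => compact_empties && items.is_empty(),
        Value::Record(fields) => compact_empties && fields.is_empty(),
        Value::Int(_) => false,
    }
}

/// Apply the predicate to a list of values.
pub fn compact(values: Vec<Value>, compact_empties: bool) -> Vec<Value> {
    values
        .into_iter()
        .filter(|v| !should_drop(v, compact_empties))
        .collect()
}
```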

# User-Facing Changes

# Tests + Formatting

# After Submitting

# Description

Changes `FromValue` to take owned `Value`s instead of borrowed `Value`s.

This eliminates some unnecessary clones (e.g., in `call_ext.rs`).
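To illustrate why taking ownership avoids clones, here is a minimal sketch. The two-variant `Value` and the `String` error type are simplifying assumptions; the real trait lives in `nu_protocol` and reports a `ShellError`.

```rust
/// Reduced value type for illustration only.
#[derive(Debug, Clone, PartialEq)]
pub enum Value {
    Int(i64),
    String(String),
}

/// Conversion that consumes the `Value`, so heap data can be moved out.
pub trait FromValue: Sized {
    fn from_value(v: Value) -> Result<Self, String>;
}

impl FromValue for String {
    fn from_value(v: Value) -> Result<Self, String> {
        match v {
            // The inner `String` is moved out, not cloned.
            Value::String(s) => Ok(s),
            other => Err(format!("expected string, got {other:?}")),
        }
    }
}

impl FromValue for i64 {
    fn from_value(v: Value) -> Result<Self, String> {
        match v {
            Value::Int(i) => Ok(i),
            other => Err(format!("expected int, got {other:?}")),
        }
    }
}
```

With a borrowed `&Value`, the `String` arm would have to call `s.clone()`; callers that still need the original value can clone explicitly before converting.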

# User-Facing Changes

Breaking API change for `nu_protocol`.

<!--

if this PR closes one or more issues, you can automatically link the PR

with

them by using one of the [*linking

keywords*](https://docs.github.com/en/issues/tracking-your-work-with-issues/linking-a-pull-request-to-an-issue#linking-a-pull-request-to-an-issue-using-a-keyword),

e.g.

- this PR should close #xxxx

- fixes #xxxx

you can also mention related issues, PRs or discussions!

-->

# Description

<!--

Thank you for improving Nushell. Please, check our [contributing

guide](../CONTRIBUTING.md) and talk to the core team before making major

changes.

Description of your pull request goes here. **Provide examples and/or

screenshots** if your changes affect the user experience.

-->

If an external completer is used and it returns no completions for a

filepath, we fall back to the builtin path completer.
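The fallback can be sketched as below. `complete_path` and the closure-based completers are hypothetical names used for illustration; the real completers are not plain closures, and treating `None` like an empty list is an assumption of this sketch.

```rust
/// Ask the external completer first; if it yields nothing, fall back to
/// the builtin path completer.
pub fn complete_path<E, B>(partial: &str, external: E, builtin: B) -> Vec<String>
where
    E: Fn(&str) -> Option<Vec<String>>,
    B: Fn(&str) -> Vec<String>,
{
    match external(partial) {
        // The external completer produced at least one suggestion: use it.
        Some(suggestions) if !suggestions.is_empty() => suggestions,
        // No answer or an empty answer: use the builtin completer.
        _ => builtin(partial),
    }
}
```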

# User-Facing Changes

Path completions will remain consistent with the use of an external

completer.

# Tests + Formatting

# After Submitting

- fixes #10766

# Description

If the partial supplied to the completion function is shorter than the

span, the cursor is in the middle of the path and we are trying to complete an

intermediate directory. In such a case we:

- only suggest directory names

- don't append the slash since it is already present

- only complete the path till the component the cursor is on
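A rough sketch of these three rules, under stated assumptions: the names `CompletionTarget`, `completion_target` and `apply_directory_suggestion` are hypothetical, only `/` is treated as a separator, the cursor is a byte offset on a character boundary, and the cursor counts as "intermediate" only when a separator follows it.

```rust
/// Hypothetical result of analysing a path token for completion.
#[derive(Debug, PartialEq)]
pub struct CompletionTarget<'a> {
    /// Text to complete: everything up to the end of the component under the cursor.
    pub partial: &'a str,
    /// Untouched remainder of the token (starts with the separator), if any.
    pub rest: &'a str,
    /// True when a separator follows, i.e. we complete an intermediate directory.
    pub intermediate: bool,
}

/// Split `token` at the end of the path component the cursor is on.
pub fn completion_target(token: &str, cursor: usize) -> CompletionTarget<'_> {
    let cursor = cursor.min(token.len());
    // Find the next separator at or after the cursor, if there is one.
    let end = token[cursor..]
        .find('/')
        .map_or(token.len(), |offset| cursor + offset);
    let (partial, rest) = token.split_at(end);
    CompletionTarget {
        partial,
        rest,
        intermediate: !rest.is_empty(),
    }
}

/// Replace the completed part with a directory suggestion. No slash is
/// appended because `rest` already begins with one.
pub fn apply_directory_suggestion(target: &CompletionTarget<'_>, suggestion: &str) -> String {
    format!("{suggestion}{}", target.rest)
}
```

In this sketch, completing `src/co` inside `src/comm/mod.rs` only touches `src/comm`; the trailing `/mod.rs` is carried over unchanged.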

# User-Facing Changes

Intermediate directories can be completed without erasing the rest of

the path.

# Tests + Formatting

# After Submitting

# Description

Reuses the existing `Closure` type in `Value::Closure`. This will help

with the span refactoring for `Value`. Additionally, this allows us to

more easily box or unbox the `Closure` case should we choose to do so in

the future.

# User-Facing Changes

Breaking API change for `nu_protocol`.

# Description

These macros simply took a `Span` and a shared reference to `Config` and

returned a `Value`. For better readability and easier reasoning about their

behavior, convert them to simple functions, as they don't do anything

relevant with their macro powers.

# User-Facing Changes

None

# Tests + Formatting

(-)

# Description

While we have now a few ways to add items or iterate over the

collection, we don't have a way to cleanly remove items from `Record`.

This PR fixes that:

- Add `Record.remove()` to remove by key

  - makes the assumption that keys are unique, so it cannot be used
    universally yet (see #10875 for an important example)

- Add naive `Record.retain()` for in-place removal

  - This follows the two separate `retain`/`retain_mut` in the Rust std
    library types, compared to the value-mutating `retain` in `indexmap`

- Add `Record.retain_mut()` for one-pass pruning
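How the three methods could relate to each other is sketched below. This is an illustration on a simplified `Record` with `i64` values, not the code from the PR; in particular the `retain_mut` body here is a deliberately naive rebuild.

```rust
/// Simplified stand-in for a record; `i64` values are an assumption.
#[derive(Debug, Default, PartialEq)]
pub struct Record {
    pub cols: Vec<String>,
    pub vals: Vec<i64>,
}

impl Record {
    /// Remove the first entry named `key` and return its value.
    /// Assumes keys are unique; later duplicates would be left in place.
    pub fn remove(&mut self, key: &str) -> Option<i64> {
        let idx = self.cols.iter().position(|c| c == key)?;
        self.cols.remove(idx);
        Some(self.vals.remove(idx))
    }

    /// In-place removal with read-only access to each value.
    pub fn retain<F>(&mut self, mut keep: F)
    where
        F: FnMut(&str, &i64) -> bool,
    {
        self.retain_mut(|k, v| keep(k, &*v));
    }

    /// In-place removal where the predicate may also mutate the value.
    pub fn retain_mut<F>(&mut self, mut keep: F)
    where
        F: FnMut(&str, &mut i64) -> bool,
    {
        // Naive version: rebuild both vectors, keeping the entries that pass.
        let cols = std::mem::take(&mut self.cols);
        let vals = std::mem::take(&mut self.vals);
        for (col, mut val) in cols.into_iter().zip(vals) {
            if keep(col.as_str(), &mut val) {
                self.cols.push(col);
                self.vals.push(val);
            }
        }
    }
}
```

Splitting `retain` and `retain_mut` mirrors `Vec::retain` and `Vec::retain_mut` in the standard library: the common read-only case gets the simpler closure signature.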

Continuation of #10841

# User-Facing Changes

None yet.

# Tests + Formatting

Doctests for the `retain`ing fun

# Description

This PR restores an old functionality that must have been broken with the

input_output_types() updating. It allows commands like this to work

again.

```nushell

open $nu.history-path |

get history.command_line |

split column ' ' cmd |

group-by cmd --to-table |

update items {|u| $u.items | length} |

sort-by items -r |

first 10 |

table -n 1

```

output

```

╭#─┬group─┬items╮

│1 │exit │ 3004│

│2 │ls │ 2591│

│3 │git │ 1678│

│4 │help │ 1549│

│5 │open │ 1374│

│6 │cd │ 1186│

│7 │cargo │ 944│

│8 │let │ 784│

│9 │source│ 755│

│10│z │ 486│

╰#─┴group─┴items╯

```

# User-Facing Changes

# Tests + Formatting

# After Submitting

# Description

Previously `group-by` returned a record containing each group as a

column. This data layout is hard to work with for some tasks because you

have to further manipulate the result to do things like determine the

number of items in each group, or the number of groups. `transpose` will

turn the record returned by `group-by` into a table, but this is

expensive when `group-by` is run on a large input.

In a discussion with @fdncred [several

workarounds](https://github.com/nushell/nushell/discussions/10462) to

common tasks were discussed, but they seem unsatisfying in general.

Now when `group-by --to-table` is used, a table is returned with the

columns "group" and "items", making it easier to do things like count

the number of groups (`| length`) or count the number of items in each

group (`| each {|g| $g.items | length}`)
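The difference in data layout can be sketched in Rust as a list of `(group, items)` rows instead of a map keyed by group name. The function name `group_by_to_table` is hypothetical, and keeping groups in first-seen order is an assumption of this sketch rather than a documented guarantee.

```rust
use std::collections::HashMap;

/// Group `items` by `key`, returning `(group, items)` rows in first-seen order.
pub fn group_by_to_table<T, F>(items: Vec<T>, key: F) -> Vec<(String, Vec<T>)>
where
    F: Fn(&T) -> String,
{
    // Maps a group name to its row index in `table`.
    let mut index: HashMap<String, usize> = HashMap::new();
    let mut table: Vec<(String, Vec<T>)> = Vec::new();
    for item in items {
        let group = key(&item);
        let existing = index.get(&group).copied();
        match existing {
            Some(row) => table[row].1.push(item),
            None => {
                index.insert(group.clone(), table.len());
                table.push((group, vec![item]));
            }
        }
    }
    table
}
```

With rows, counting groups is `table.len()` and counting items per group is a plain `map` over the rows, which corresponds to the `| length` and `| each {...}` pipelines mentioned above.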

# User-Facing Changes

* `group-by --to-table` returns a `table` with "group" and "items" columns instead

of a `record` with one column per group name

# Tests + Formatting

Tests for `group-by` were updated

# After Submitting

* No breaking changes were made. The new `--to-table` switch should be

added automatically to the [`group-by`

documentation](https://www.nushell.sh/commands/docs/group-by.html)

# Description

> Our `Record` looks like a map, quacks like a map, so let's treat it

with the API for a map

Implement common methods found on e.g. `std::collections::HashMap` or

the insertion-ordered [indexmap](https://docs.rs/indexmap).

This allows contributors to not have to worry about how to get to the

relevant items and not mess up the assumptions of a Nushell record.

## Record assumptions

- `cols` and `vals` are of equal length

- for all practical purposes, keys/columns should be unique

## End goal

The end goal of the upcoming series of PRs is to allow us to make

`cols` and `vals` private.

Then it would be possible to exchange the backing data structure to best

fit the expected workload.

This could be done statically (by finding the best balance) or dynamically by

using an `enum` of potential representations.

## Parts

- Add validating explicit part constructor

`Record::from_raw_cols_vals()`

- Add `Record.columns()` iterator

- Add `Record.values()` iterator

- Add consuming `Record.into_values()` iterator

- Add `Record.contains()` helper

- Add `Record.insert()` that respects existing keys

- Add key-based `.get()`/`.get_mut()` to `Record`

- Add `Record.get_index()` for index-based access

- Implement `Extend` for `Record` naively

- Use checked constructor in `record!` macro

- Add `Record.index_of()` to get index by key
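A condensed sketch of what such a map-like API over two parallel vectors might look like. The method names follow the list above, but the signatures, the `i64` value type, and the `Result` returned by the constructor are assumptions made for this illustration.

```rust
/// Simplified record with private parallel vectors.
#[derive(Debug, Default, Clone, PartialEq)]
pub struct Record {
    cols: Vec<String>,
    vals: Vec<i64>,
}

impl Record {
    /// Validating constructor: rejects vectors of different length.
    pub fn from_raw_cols_vals(cols: Vec<String>, vals: Vec<i64>) -> Result<Self, String> {
        if cols.len() == vals.len() {
            Ok(Self { cols, vals })
        } else {
            Err(format!("{} columns but {} values", cols.len(), vals.len()))
        }
    }

    /// Index of the first column named `key`.
    pub fn index_of(&self, key: &str) -> Option<usize> {
        self.cols.iter().position(|c| c == key)
    }

    pub fn contains(&self, key: &str) -> bool {
        self.index_of(key).is_some()
    }

    pub fn get(&self, key: &str) -> Option<&i64> {
        self.index_of(key).map(|idx| &self.vals[idx])
    }

    /// Insert that respects existing keys: overwrite in place and return the
    /// old value, or append a new column and return `None`.
    pub fn insert(&mut self, key: &str, val: i64) -> Option<i64> {
        match self.index_of(key) {
            Some(idx) => Some(std::mem::replace(&mut self.vals[idx], val)),
            None => {
                self.cols.push(key.to_string());
                self.vals.push(val);
                None
            }
        }
    }

    pub fn columns(&self) -> impl Iterator<Item = &str> + '_ {
        self.cols.iter().map(String::as_str)
    }

    pub fn values(&self) -> impl Iterator<Item = &i64> + '_ {
        self.vals.iter()
    }
}
```

Because callers only go through these methods, both record assumptions (equal lengths, unique keys) are maintained in one place.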

# User-Facing Changes

None directly

# Developer facing changes

You don't have to roll your own record handling and can use a familiar

API

# Tests + Formatting

No explicit unit tests yet. Wouldn't be too tricky to validate core

properties directly.

Will be exercised by the following PRs using the new

methods/traits/iterators.

# Description

Use `record!` macro instead of defining two separate `vec!` for `cols`

and `vals` when appropriate.

This visually aligns the key with the value.

Furthermore, you don't have to deal with the construction of `Record {

cols, vals }` so we can hide the implementation details in the future.
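To show how such a macro keeps each key next to its value, here is a hypothetical re-creation on a simplified `Record` with `i64` values. It is a sketch of the idea, not the macro shipped in `nu_protocol`.

```rust
/// Simplified record; the real one stores Nushell `Value`s.
#[derive(Debug, Default, PartialEq)]
pub struct Record {
    pub cols: Vec<String>,
    pub vals: Vec<i64>,
}

/// Hypothetical `record!`-style macro: each `key => value` pair is written
/// once, and the two parallel vectors are built behind the scenes.
macro_rules! record {
    ($($col:expr => $val:expr),* $(,)?) => {
        Record {
            cols: vec![$($col.to_string()),*],
            vals: vec![$($val),*],
        }
    };
}
```

A call such as `record! { "name" => 1, "size" => 2 }` then replaces two separately maintained `vec!` literals, so a key can no longer drift out of line with its value.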

## State

Not covering all possible commands yet, also some tests/examples are

better expressed by creating cols and vals separately.

# User/Developer-Facing Changes

The examples and tests should read more naturally. No relevant functional

change

# Bycatch

Where I noticed it, I replaced usages of `Value` constructors taking

`Span::test_data()` or `Span::unknown()` with the `Value::test_...`

constructors. This should make things more readable and also simplify

changes to the `Span` system in the future.

# Description

as we can see in the [documentation of

`str.to_lowercase`](https://doc.rust-lang.org/std/primitive.str.html#method.to_lowercase),

not only ASCII symbols have lower and upper variants.

- `str upcase` uses the correct method to convert the string

7ac5a01e2f/crates/nu-command/src/strings/str_/case/upcase.rs (L93)

- `str downcase` incorrectly converts only ASCII characters

7ac5a01e2f/crates/nu-command/src/strings/str_/case/downcase.rs (L124)

this PR uses `str.to_lowercase` instead of `str.to_ascii_lowercase` in

`str downcase`.
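The difference between the two standard-library methods can be seen in a small Rust sketch. The wrapper names `downcase_unicode` and `downcase_ascii` are made up for this illustration; only `str::to_lowercase` and `str::to_ascii_lowercase` are real std methods.

```rust
/// Lowercase using full Unicode case mapping (`str::to_lowercase`).
pub fn downcase_unicode(s: &str) -> String {
    s.to_lowercase()
}

/// Lowercase only the ASCII letters A-Z (`str::to_ascii_lowercase`);
/// every non-ASCII character is left untouched.
pub fn downcase_ascii(s: &str) -> String {
    s.to_ascii_lowercase()
}
```

For an input such as `"ὈΔΥΣΣΕΎΣ"`, the ASCII variant returns the string unchanged, while the Unicode variant lowercases every letter.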

# User-Facing Changes

- upcase still works fine

```nushell

~ l> "ὀδυσσεύς" | str upcase

ὈΔΥΣΣΕΎΣ

```

- downcase now works

👉 before

```nushell

~ l> "ὈΔΥΣΣΕΎΣ" | str downcase

ὈΔΥΣΣΕΎΣ

```

👉 after

```nushell

~ l> "ὈΔΥΣΣΕΎΣ" | str downcase

ὀδυσσεύς

```

# Tests + Formatting

- 🟢 `toolkit fmt`

- 🟢 `toolkit clippy`

- ⚫ `toolkit test`

- ⚫ `toolkit test stdlib`

adds two tests

- `non_ascii_upcase`

- `non_ascii_downcase`

# After Submitting

# Description

looking at the [Wax documentation about

`wax::Walk.not`](https://docs.rs/wax/latest/wax/struct.Walk.html#examples),

especially

> therefore does not read directory trees from the file system when a

directory matches an [exhaustive glob

expression](https://docs.rs/wax/latest/wax/trait.Pattern.html#tymethod.is_exhaustive)

> **Important**

> in the following of this PR description, i talk about *pruning* and a

`--prune` option, but this has been changed to *exclusion* and

`--exclude` after a discussion with @fdncred.

this looks like a *pruning* operation to me, right? 😮

i wanted to make the `glob` option `--not` clearer about that, because

> -n, --not <List(String)> - Patterns to exclude from the results

from `help glob` is not very explicit about whether the search is pruned

when entering a directory matching a pattern in `--not` or just removing

it from the output 😕

## changelog

this PR proposes to rename the `glob --not` option to `glob --prune` and

make its documentation more explicit 😋

## benchmarking

to support the *pruning* behaviour put forward above, i've run a

benchmark

1. define two closures to compare the behaviour between removing

patterns manually or using `--not`

```nushell

let where = {

[.*/\.local/.*, .*/documents/.*, .*/\.config/.*]

| reduce --fold (glob **) {|pat, acc| $acc | where $it !~ $pat}

| length

}

```

```nushell

let not = { glob ** --not [**/.local/**, **/documents/**, **/.config/**] | length }

```

2. run the two to make sure they give similar results

```nushell

> do $where

33424

```

```nushell

> do $not

33420

```

👌

3. measure the performance

```nushell

use std bench

```

```nushell

> bench --verbose --pretty --rounds 25 $not

44ms 52µs 285ns +/- 977µs 571ns

```

```nushell

> bench --verbose --pretty --rounds 5 $where

1sec 250ms 187µs 99ns +/- 8ms 538µs 57ns

```

👉 we can see that the results are (almost) the same but

`--not` is much faster, looks like pruning 😋

# User-Facing Changes

- `--not` will give a warning message but still work

- `--prune` will work just as `--not` without warning and with a more

explicit doc

- `--prune` and `--not` at the same time will give an error

# Tests + Formatting

this PR fixes the examples of `glob` using the `--not` option.

# After Submitting

prepare the removal PR and mention in release notes.

# Description

Implements `whoami` using the `whoami` command from uutils as backend.

This is a draft because it depends on

https://github.com/uutils/coreutils/pull/5310 and a new release of

uutils needs to be made (and the paths in `Cargo.toml` should be

updated). At this point, this is more of a proof of concept 😄

Additionally, this implements a (simple and naive) conversion from the

uutils `UResult` to the nushell `ShellError`, which should help with the

integration of other utils, too. I can split that off into a separate PR

if desired.

I put this command in the "platform" category. If it should go somewhere

else, let me know!

The tests will currently fail, because I've used a local path to uutils.

Once the PR on the uutils side is merged, I'll update it to a git path

so that it can be tested and runs on more machines than just mine.

# User-Facing Changes

New `whoami` command. This might break some users who expect the system

`whoami` command. However, the result of this new command should be very

close, just with a nicer help message, at least for Linux users. The

default `whoami` on Windows is quite different from this implementation:

https://learn.microsoft.com/en-us/windows-server/administration/windows-commands/whoami

# Tests + Formatting

# After Submitting

---------

Co-authored-by: Darren Schroeder <343840+fdncred@users.noreply.github.com>

related to

-

https://discord.com/channels/601130461678272522/614593951969574961/1162406310155923626

# Description

this PR

- does a bit of minor refactoring

- makes sure the input paths get expanded

- makes sure the input PATH gets split on ":"

- adds a test

- fixes the other tests

# User-Facing Changes

should give a better overall experience with `std path add`

# Tests + Formatting

adds a new test case to the `path_add` test and fixes the others.

# After Submitting

# Description

just noticed `$env.config.filesize.metric` is not the same in

`default_config.nu` and `config.rs`

# User-Facing Changes

filesizes will show in "binary" mode by default when using the default

config files, i.e. `kib` instead of `kb`.

# Tests + Formatting

# After Submitting

# Description

This PR corrects some help text by stating what the real delimiter is

for the `--include-path` command line parameter which is `char

record_sep` aka `\x1e`. Up to this point, this has really only been used

for the vscode extension to set up the `NU_LIB_DIRS` env var correctly. We

tried `:` and `;` and neither would work so we had to choose something

that wouldn't be confused so easily.
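A small sketch of splitting such a value on the record separator. The function name `split_include_path` is hypothetical, and dropping empty segments is an assumption of this sketch.

```rust
/// ASCII "record separator" (`\x1e`), the delimiter described above.
pub const RECORD_SEP: char = '\x1e';

/// Split an `--include-path` style value into individual directories.
/// Because the delimiter is a control character, `:` and `;` inside paths
/// are left alone.
pub fn split_include_path(raw: &str) -> Vec<&str> {
    raw.split(RECORD_SEP).filter(|p| !p.is_empty()).collect()
}
```

This shows why neither `:` nor `;` was suitable: both can legitimately occur inside a path on some platform, whereas `\x1e` practically never does.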

# User-Facing Changes

# Tests + Formatting

# After Submitting

# Description

Currently the following command is broken:

```nushell

echo a o+e> 1.txt

```

This is because we don't redirect the output of the `echo` command. This PR

aims to fix it.

# Description

This PR fixes an oversight from a previous PR. It now correctly returns

the details on lazy records.

# User-Facing Changes

`describe --detailed` now returns the expected result.

# Description

- this PR should close #10819

# User-Facing Changes

Behaviour matches the pre-0.86.0 behaviour of the `cp` command, so there

is no user-facing change relative to those versions; compared to the

current version, the option is re-added.

# After Submitting

I guess the documentation will be updated automatically, and as this

feature is not highlighted any further, probably no more work will be needed

here.

# Considerations

coreutils actually allows a third option:

```rust
pub enum UpdateMode {
    // --update=`all`,
    ReplaceAll,
    // --update=`none`
    ReplaceNone,
    // --update=`older`
    // -u
    ReplaceIfOlder,
}
```

namely `ReplaceNone`, which I have not added. Also I think that

specifying `--update 'abc'` is non-functional.

# Description

Fixes: #10830

The issue happened during lite-parsing: when we put a

`LiteElement` into a `LitePipeline`, we did nothing if the relevant redirection

target was empty.

So the command `echo aaa o> | ignore` was interpreted as `echo aaa |

ignore`.

This PR checks for this and returns an error if the redirection target is

empty.
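The shape of the fix can be sketched as follows. All types here (`LiteElement`, `LitePipeline`, `ParseError`) are heavily simplified, hypothetical versions that borrow the parser's names; the real parser works on spans and reports a `parse_mismatch` error.

```rust
/// Simplified pipeline element for illustration only.
#[derive(Debug, PartialEq)]
pub enum LiteElement {
    Command(Vec<String>),
    /// An `o>`-style redirection together with the words of its target.
    Redirection(Vec<String>),
}

#[derive(Debug, PartialEq)]
pub enum ParseError {
    ExpectedRedirectionTarget,
}

#[derive(Debug, Default, PartialEq)]
pub struct LitePipeline {
    pub elements: Vec<LiteElement>,
}

impl LitePipeline {
    /// Add an element, rejecting a redirection without a target instead of
    /// silently dropping it.
    pub fn push(&mut self, element: LiteElement) -> Result<(), ParseError> {
        if let LiteElement::Redirection(target) = &element {
            if target.is_empty() {
                return Err(ParseError::ExpectedRedirectionTarget);
            }
        }
        self.elements.push(element);
        Ok(())
    }
}
```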

# User-Facing Changes

## Before

```

❯ echo aaa o> | ignore # nothing happened

```

## After

```nushell

❯ echo aaa o> | ignore

Error: nu::parser::parse_mismatch

× Parse mismatch during operation.

╭─[entry #1:1:1]

1 │ echo aaa o> | ignore

· ─┬

· ╰── expected redirection target

╰────

```

# Description

Support pattern matching against the `null` literal. Fixes #10799

### Before

```nushell

> match null { null => "success", _ => "failure" }

failure

```

### After

```nushell

> match null { null => "success", _ => "failure" }

success

```

# User-Facing Changes

Users can pattern match against a `null` literal.

# Description

`from tsv` and `from csv` both support a `--flexible` flag. This flag

can be used to "allow the number of fields in records to be variable".

Previously, a record's invariant that `rec.cols.len() == rec.vals.len()`

could be broken during parsing. This can cause runtime errors as in

#10693. Other commands, like `select` were also affected.

The inconsistencies are somewhat hard to see, as most nushell code

assumes an equal number of columns and values.

# Before

### Fewer values than columns

```nushell

> let record = (echo "one,two\n1" | from csv --flexible | first)

# There are two columns

> $record | columns | to nuon

[one, two]

# But only one value

> $record | values | to nuon

[1]

# And printing the record doesn't show the second column!

> $record | to nuon

{one: 1}

```

### More values than columns

```nushell

> let record = (echo "one,two\n1,2,3" | from csv --flexible | first)

# There are two columns

> $record | columns | to nuon

[one, two]

# But three values

> $record | values | to nuon

[1, 2, 3]

# And printing the record doesn't show the third value!

> $record | to nuon

{one: 1, two: 2}

```

# After

### Fewer values than columns

```nushell

> let record = (echo "one,two\n1" | from csv --flexible | first)

# There are two columns

> $record | columns | to nuon

[one, two]

# And a matching number of values

> $record | values | to nuon

[1, null]

# And printing the record works as expected

> $record | to nuon

{one: 1, two: null}

```

### More values than columns

```nushell

> let record = (echo "one,two\n1,2,3" | from csv --flexible | first)

# There are two columns

> $record | columns | to nuon

[one, two]

# And a matching number of values

> $record | values | to nuon

[1, 2]

# And printing the record works as expected

> $record | to nuon

{one: 1, two: 2}

```
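The normalisation visible in the "After" examples (pad with `null`, drop surplus values) can be sketched like this. The function name `normalize_row` and the use of `Option<String>` for cells are assumptions made for illustration, with `None` standing in for `null`.

```rust
/// Make a parsed row match the header length.
/// Fewer values than columns: pad with `None`.
/// More values than columns: drop the surplus.
pub fn normalize_row(cols: &[String], mut vals: Vec<Option<String>>) -> Vec<Option<String>> {
    // `Vec::resize` both truncates and pads, so one call covers both cases.
    vals.resize(cols.len(), None);
    vals
}
```

After this step `cols.len() == vals.len()` holds for every row, which is the invariant the rest of the code relies on.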

# User-Facing Changes

Using the `--flexible` flag with `from csv` and `from tsv` will not

result in corrupted record state.

# Tests + Formatting

# After Submitting

<!-- If your PR had any user-facing changes, update [the

documentation](https://github.com/nushell/nushell.github.io) after the

PR is merged, if necessary. This will help us keep the docs up to date.

-->

# Description

This is just a fixup PR. There was a describe PR that passed CI but then

later didn't pass main. This PR fixes that issue.

# User-Facing Changes

# Tests + Formatting

# After Submitting


- Add `detailed` flag for `describe`

- Improve the detailed `describe` output and format it better when running examples.

# Rationale

Currently, neither `describe` nor any of the `debug` commands provides an
easy and structured way of inspecting a value's type and related details.
This flag provides a structured way of getting such information. It also
avoids the rather hacky solution

```nu

$in | describe | str replace --regex '<.*' ''

```

# User-facing changes

Adds a new `--detailed` flag to `describe`.

Reverts nushell/nushell#10812

This goes back to a version of `regex` and its dependencies that is
shared with a lot of our other dependencies. Before the reverted change,
we did not duplicate the big dependencies of `regex`, which affect binary
size and compile time.

As there is no known bug or security problem we suffer from, we can wait

on receiving the performance improvements to `regex` with the rest of

our `regex` dependents.

r? @fdncred

Last one, I hope. At least short of completely redesigning `registry

query`'s interface. (Which I wouldn't implement without asking around

first.)

# Description

User-Facing Changes has the general overview. Inline comments provide a

lot of justification on specific choices. Most of the type conversions

should be reasonably noncontroversial, but expanding `REG_EXPAND_SZ`

needs some justification. First, an example of the behavior there:

```shell

> # release nushell:

> version | select version commit_hash | to md --pretty

| version | commit_hash |

| ------- | ---------------------------------------- |

| 0.85.0 | a6f62e05ae |

> registry query --hkcu Environment TEMP | get value

%USERPROFILE%\AppData\Local\Temp

> # with this patch:

> version | select version commit_hash | to md --pretty

| version | commit_hash |

| ------- | ---------------------------------------- |

| 0.86.1 | 0c5a4c991f |

> registry query --hkcu Environment TEMP | get value

C:\Users\CAD\AppData\Local\Temp

> # Microsoft CLI tooling behavior:

> ^pwsh -c `(Get-ItemProperty HKCU:\Environment).TEMP`

C:\Users\CAD\AppData\Local\Temp

> ^reg query HKCU\Environment /v TEMP

HKEY_CURRENT_USER\Environment

TEMP REG_EXPAND_SZ %USERPROFILE%\AppData\Local\Temp

```

As noted in the inline comments, I'm arguing that it makes more sense to

eagerly expand the %EnvironmentString% placeholders, as none of

Nushell's path functionality will interpret these placeholders. This

makes the behavior of `registry query` match the behavior of pwsh's

`Get-ItemProperty` registry access, and means that paths (the most

common use of `REG_EXPAND_SZ`) are actually usable.

This does *not* break nu_scripts'

[`update-path`](https://github.com/nushell/nu_scripts/blob/main/sourced/update-path.nu);

it will just be slightly inefficient as it will not find any

`%Placeholder%`s to manually expand anymore. But also, note that

`update-path` is currently *wrong*, as a path including

`%LocalAppData%Low` is perfectly valid and sometimes used (to go to

`Appdata\LocalLow`); expansion isn't done solely on a path segment

basis, as is implemented by `update-path`.
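To illustrate why segment-based expansion falls short, here is a minimal Rust sketch of whole-string placeholder expansion. It is not the code from this patch and not the Windows `ExpandEnvironmentStringsW` implementation: `expand_placeholders` and the `vars` map are hypothetical stand-ins, the map is assumed to hold upper-cased variable names, and unknown placeholders are simply left in place.

```rust
use std::collections::HashMap;

/// Expand `%Name%` placeholders wherever they occur in `input`.
/// `vars` is a hypothetical stand-in for the environment, keyed by
/// upper-cased variable names (an assumption of this sketch).
/// Unknown names are left untouched, percent signs included.
fn expand_placeholders(input: &str, vars: &HashMap<String, String>) -> String {
    let mut out = String::new();
    let mut rest = input;
    while let Some(start) = rest.find('%') {
        // Copy everything before the opening '%'.
        out.push_str(&rest[..start]);
        let after = &rest[start + 1..];
        match after.find('%') {
            Some(end) => {
                let name = &after[..end];
                match vars.get(&name.to_ascii_uppercase()) {
                    Some(value) => {
                        // Known variable: substitute and continue after the closing '%'.
                        out.push_str(value);
                        rest = &after[end + 1..];
                    }
                    None => {
                        // Unknown variable: keep the text and rescan from the closing '%'.
                        out.push('%');
                        out.push_str(name);
                        rest = &after[end..];
                    }
                }
            }
            None => {
                // No closing '%': copy the remainder verbatim.
                out.push('%');
                out.push_str(after);
                rest = "";
            }
        }
    }
    out.push_str(rest);
    out
}
```

Because the scan works on the whole string rather than on path segments, a value such as `%LocalAppData%Low\Temp` expands even though the placeholder is only part of a segment.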

I believe that the type conversions implemented by this patch are

essentially always desired. But if we want to keep `registry query`

"pure", we could easily introduce a `registry get`[^get] which does the

more complete interpretation of registry types, and leave `registry

query` alone as doing the bare minimum. Or we could teach `path expand`

to do `ExpandEnvironmentStringsW`. But REG_EXPAND_SZ being the odd one

out of not getting its registry type semantics decoded by `registry

query` seems wrong.

[^get]: This is the potential redesign I alluded to at the top. One

potential change could be to make `registry get Environment` produce

`record<Path: string, TEMP: string, TMP: string>` instead of `registry

query`'s `table<name: string, value: string, type: string>`, the idea

being to make it feel as native as possible. We could even translate

between Nu's cell-path and registry paths -- cell paths with spaces do

actually work, if a bit awkwardly -- or even introduce lazy records so

the registry can be traversed with normal data manipulation ... but that

all seems a bit much.

# User-Facing Changes

- `registry query`'s produced `value` has changed. Specifically:
  - ❗ Rows `where type == REG_EXPAND_SZ` now expand `%EnvironmentVariable%` placeholders for you. For example, `registry query --hkcu Environment TEMP | get value` returns `C:\Users\CAD\AppData\Local\Temp` instead of `%USERPROFILE%\AppData\Local\Temp`.
    - You can restore the old behavior and preserve the placeholders by passing a new `--no-expand` switch.
  - Rows `where type == REG_MULTI_SZ` now provide a `list<string>` value. They previously had that same list, but `| str join "\n"`.
  - Rows `where type == REG_DWORD_BIG_ENDIAN` now provide the correct numeric value instead of a byte-swapped value.
  - Rows `where type == REG_QWORD` now provide the correct numeric value[^sign] instead of the value modulo 2<sup>32</sup>.
  - Rows `where type == REG_LINK` now provide a string value of the link target registry path instead of an internal debug string representation. (This should never be visible, as links should be transparently followed.)
  - Rows `where type =~ RESOURCE` now provide a binary value instead of an internal debug string representation.
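As a rough illustration of two of the conversions listed above, the following Rust sketch decodes a big-endian DWORD and splits a multi-string payload. The function names are hypothetical and this is not the code from the patch; it also assumes the `REG_MULTI_SZ` payload has already been decoded from UTF-16 into a Rust string with NUL separators.

```rust
/// Decode the four raw bytes of a REG_DWORD_BIG_ENDIAN value.
/// Reading them as little-endian instead would give a byte-swapped number.
fn decode_dword_big_endian(raw: [u8; 4]) -> i64 {
    i64::from(u32::from_be_bytes(raw))
}

/// Split a REG_MULTI_SZ payload into its strings.
/// Assumes `decoded` is NUL-separated text that may end in one or more
/// terminating NULs (a simplification made for this sketch).
fn split_multi_sz(decoded: &str) -> Vec<String> {
    let trimmed = decoded.trim_end_matches('\0');
    if trimmed.is_empty() {
        return Vec::new();
    }
    trimmed.split('\0').map(str::to_owned).collect()
}
```

For the bytes `00 00 01 00`, the big-endian reading is 256, while a little-endian reading of the same bytes would be 65536.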

[^sign]: Nu's `int` is a signed 64-bit integer. As such, values >=

2<sup>63</sup> will be reported as their negative two's complement

value. This might sometimes be the correct interpretation -- the

registry does not distinguish between signed and unsigned integer values

-- but regedit and pwsh display all values as unsigned.
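The sign behavior described in the footnote can be sketched in Rust as follows. The helper names are hypothetical and only model the arithmetic; they are not taken from the patch.

```rust
/// Reinterpret an unsigned 64-bit registry value as a signed 64-bit integer,
/// which is how values >= 2^63 end up negative.
fn qword_to_int(raw: u64) -> i64 {
    raw as i64
}

/// Model of the previous behavior described above: the value modulo 2^32.
fn qword_modulo_u32(raw: u64) -> u64 {
    raw % (1u64 << 32)
}
```

Under this model, 5,000,000,000 is now reported unchanged, whereas the modulo 2<sup>32</sup> behavior would have produced 705,032,704, and a raw value of 2<sup>63</sup> is reported as the minimum `i64`.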

# Description