<!--

if this PR closes one or more issues, you can automatically link the PR

with

them by using one of the [*linking

keywords*](https://docs.github.com/en/issues/tracking-your-work-with-issues/linking-a-pull-request-to-an-issue#linking-a-pull-request-to-an-issue-using-a-keyword),

e.g.

- this PR should close #xxxx

- fixes #xxxx

you can also mention related issues, PRs or discussions!

-->

# Description

<!--

Thank you for improving Nushell. Please, check our [contributing

guide](../CONTRIBUTING.md) and talk to the core team before making major

changes.

Description of your pull request goes here. **Provide examples and/or

screenshots** if your changes affect the user experience.

-->

Try to use nushell's fork for winget-pkgs publishing

# Description

This PR bumps reedline in nushell to the latest commit in the repo, and
also bumps thiserror because it wouldn't compile without it, so that we
can do some quick testing to ensure there are no problems.

# User-Facing Changes

<!-- List of all changes that impact the user experience here. This

helps us keep track of breaking changes. -->

# Tests + Formatting

<!--

Don't forget to add tests that cover your changes.

Make sure you've run and fixed any issues with these commands:

- `cargo fmt --all -- --check` to check standard code formatting (`cargo

fmt --all` applies these changes)

- `cargo clippy --workspace -- -D warnings -D clippy::unwrap_used` to

check that you're using the standard code style

- `cargo test --workspace` to check that all tests pass (on Windows make

sure to [enable developer

mode](https://learn.microsoft.com/en-us/windows/apps/get-started/developer-mode-features-and-debugging))

- `cargo run -- -c "use toolkit.nu; toolkit test stdlib"` to run the

tests for the standard library

> **Note**

> from `nushell` you can also use the `toolkit` as follows

> ```bash

> use toolkit.nu # or use an `env_change` hook to activate it

automatically

> toolkit check pr

> ```

-->

# After Submitting

<!-- If your PR had any user-facing changes, update [the

documentation](https://github.com/nushell/nushell.github.io) after the

PR is merged, if necessary. This will help us keep the docs up to date.

-->

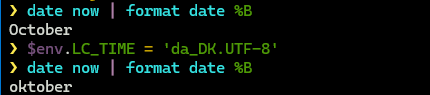

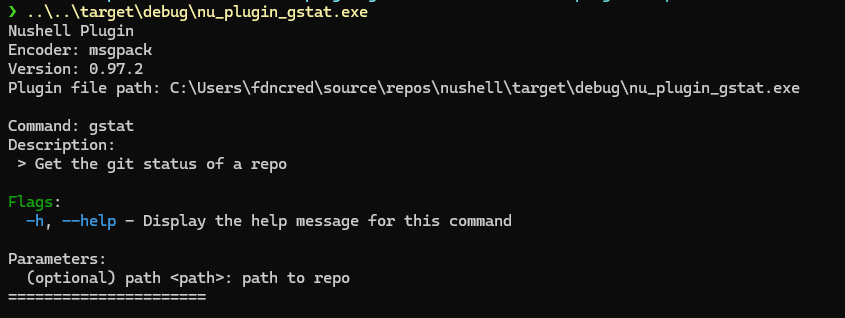

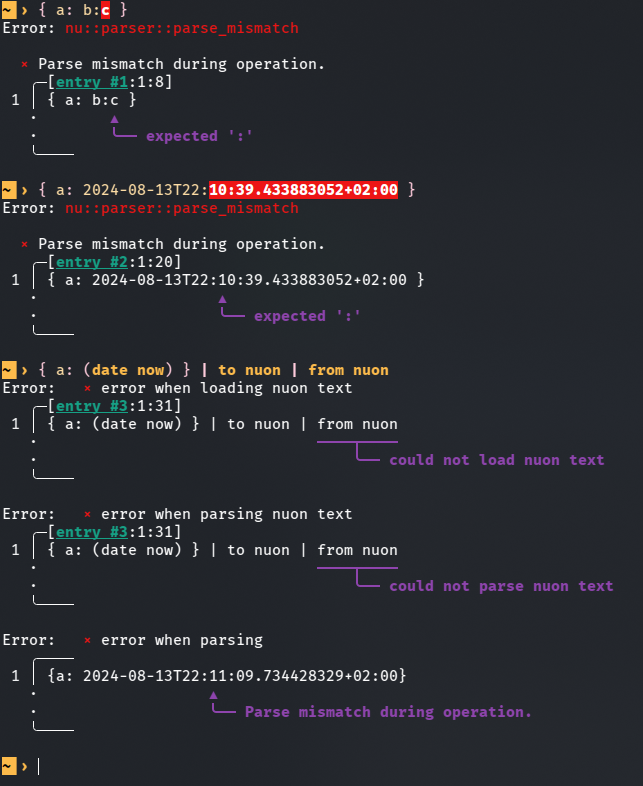

Replace example on `date now | debug` with `date now | format date "%+"`.
Add RFC3339 "%+" format string example on `format date`.

Users can now find how to format date-time to RFC3339.

FIXES: #15168


# Description


The documentation now gives users examples of how to print RFC3339
strings.

# User-Facing Changes


Corrects documentation.

# Tests + Formatting


# After Submitting


# Description


This PR adds a quality-of-life feature that enables date and datetime
parsing of strings in `polars into-df`, `polars into-lazy`, and
`polars open`, avoiding the more verbose method of casting each column
into date/datetime. Currently, setting the schema to `date` on a `str`
column fails silently and produces a null column. See the comparison of
the current and proposed implementations below.

The proposed implementation assumes a date format "%Y-%m-%d" and a
datetime format of "%Y-%m-%d %H:%M:%S" for naive datetimes and
"%Y-%m-%d %H:%M:%S%:z" for timezone-aware datetimes. Other formats must
be specified via parsing through `polars as-date` and `polars as-datetime`.

```nushell
# Current Implementations
> [[a]; ["2025-04-01"]] | polars into-df --schema {a: date}
╭───┬───╮
│ # │ a │
├───┼───┤
│ 0 │   │
╰───┴───╯
> [[a]; ["2025-04-01 01:00:00"]] | polars into-df --schema {a: "datetime<ns,*>"}
╭───┬───╮
│ # │ a │
├───┼───┤
│ 0 │   │
╰───┴───╯

# Proposed Implementation
> [[a]; ["2025-04-01"]] | polars into-df --schema {a: date}
╭───┬─────────────────────╮
│ # │          a          │
├───┼─────────────────────┤
│ 0 │ 04/01/25 12:00:00AM │
╰───┴─────────────────────╯
> [[a]; ["2025-04-01 01:00:00"]] | polars into-df --schema {a: "datetime<ns,*>"}
╭───┬─────────────────────╮
│ # │          a          │
├───┼─────────────────────┤
│ 0 │ 04/01/25 01:00:00AM │
╰───┴─────────────────────╯
> [[a]; ["2025-04-01 01:00:00-04:00"]] | polars into-df --schema {a: "datetime<ns,UTC>"}
╭───┬─────────────────────╮
│ # │          a          │
├───┼─────────────────────┤
│ 0 │ 04/01/25 05:00:00AM │
╰───┴─────────────────────╯
```

# User-Facing Changes


No breaking changes. Users have the added option to parse string columns

into date/datetimes.

# Tests + Formatting


No tests were added to any examples.

# After Submitting


# Description

This PR implements an experimental inter-job communication model,

through direct message passing, aka "mail"ing or "dm"ing:

- `job send <id>`: Sends a message to the job with the given id; the
  root job has id 0. Messages are stored in the recipient's "mailbox".
- `job recv`: Returns a stored message; blocks if the mailbox is empty.
- `job flush`: Clears all messages from the mailbox.

Additionally, messages can be sent with a numeric tag, which can then be
filtered with `job recv --tag`.

This is useful for spawning jobs and receiving messages specifically

from those jobs.
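The mailbox model described above can be sketched in a few lines of Rust. This is only an illustration under stated assumptions, not the code from this PR: `Message`, `Mailbox`, and `try_recv` are hypothetical names, the sketch is single-threaded, and `try_recv` returns `None` where the real `job recv` would block. It also assumes that an unfiltered receive returns the oldest message regardless of its tag.

```rust
use std::collections::VecDeque;

/// A message with an optional numeric tag (hypothetical type for illustration).
#[derive(Debug, Clone, PartialEq)]
struct Message {
    tag: Option<u64>,
    body: String,
}

/// A per-job mailbox (hypothetical; not Nushell's actual implementation).
#[derive(Default)]
struct Mailbox {
    queue: VecDeque<Message>,
}

impl Mailbox {
    /// Store a message in the mailbox, as `job send` does for the recipient.
    fn send(&mut self, tag: Option<u64>, body: &str) {
        self.queue.push_back(Message { tag, body: body.to_string() });
    }

    /// Take the oldest message, or the oldest one carrying `filter` when a tag is given.
    /// Returns `None` instead of blocking when nothing matches.
    fn try_recv(&mut self, filter: Option<u64>) -> Option<Message> {
        let index = match filter {
            None => (!self.queue.is_empty()).then_some(0),
            Some(tag) => self.queue.iter().position(|m| m.tag == Some(tag)),
        }?;
        self.queue.remove(index)
    }

    /// Drop every stored message, as `job flush` does.
    fn flush(&mut self) {
        self.queue.clear();
    }
}
```

With this shape, a job that spawns a worker and hands it a tag can later receive only that worker's replies by filtering on the same tag, while unrelated messages stay queued.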

This PR is mostly a proof of concept for what inter-job communication
could look like, so people can provide feedback and suggestions.

Closes #15199

May close #15220, since jobs can now access their own id.

# User-Facing Changes

Adds `job id`, `job send`, `job recv`, and `job flush` commands.

# Tests + Formatting

[X] TODO: Implement tests

[X] Consider rewriting some of the job-related tests to use this, to

make them a bit less fragile.

# After Submitting

# Description

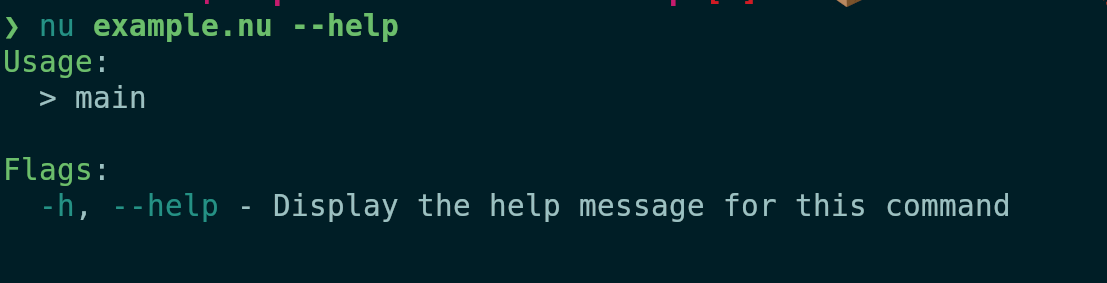

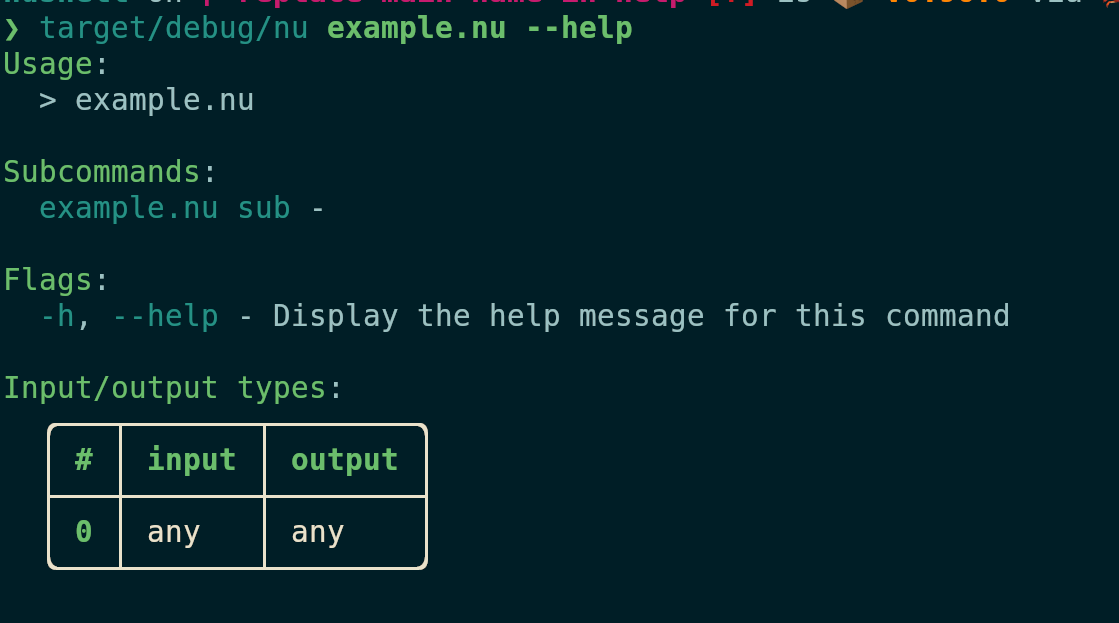

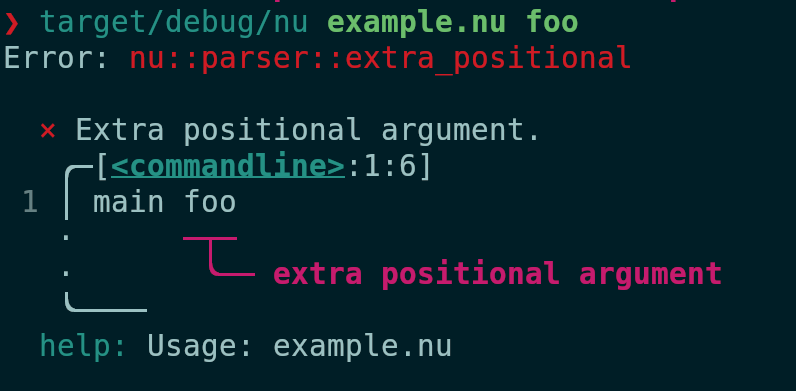

Looks like `:nu` was forgotten about when the help system was

refactored.

# User-Facing Changes

# Tests + Formatting

# After Submitting

Co-authored-by: Bahex <17417311+Bahex@users.noreply.github.com>

# Description

Fixes: #15510

I think it was introduced by #14653, which changed `and/or` to a `match`
expression.

Looking at `compile_match`, it is important to collect the value before
matching on it.

```rust
// Important to collect it first
builder.push(Instruction::Collect { src_dst: match_reg }.into_spanned(match_expr.span))?;
```

This PR applies the same logic when compiling `and/or` operations.

# User-Facing Changes

The following will raise a reasonable error:

```nushell
> (nu --testbin cococo false) and true
Error: nu::shell::operator_unsupported_type

  × The 'and' operator does not work on values of type 'string'.
   ╭─[entry #7:1:2]
 1 │ (nu --testbin cococo false) and true
   ·  ─┬                         ─┬─
   ·   │                          ╰── does not support 'string'
   ·   ╰── string
   ╰────
```

# Tests + Formatting

Added 1 test.

# After Submitting

Maybe need to update doc

https://github.com/nushell/nushell.github.io/pull/1876

---------

Co-authored-by: Stefan Holderbach <sholderbach@users.noreply.github.com>


# Description


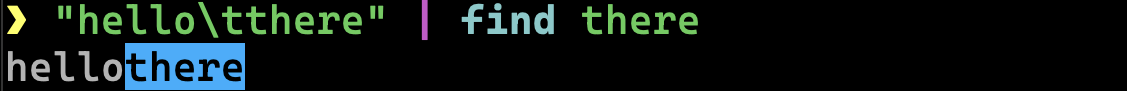

A friend of mine started using nushell on Windows and wondered why the
`cat` command wasn't available. I told him that he can use `help -f` or
F1 to find the command, but then we both realized that neither `cat` nor
`Get-Command` was part of `open`'s search terms. So I added them.

# User-Facing Changes


None.

# Tests + Formatting


- 🟢 `toolkit fmt`

- 🟢 `toolkit clippy`

- 🟢 `toolkit test`

- 🟢 `toolkit test stdlib`

# After Submitting


# Description


The current implementation improperly inverts the conversion from

nanoseconds to the specified time units, resulting in nonsensical

Datetime and Duration parsing and integer overflows when the specified

time unit is not nanoseconds. This PR seeks to correct this conversion

by changing the multiplication to an integer division. Below are

examples highlighting the current and proposed implementations.

## Current Implementation

Specifying a different time unit incorrectly changes the returned value.

```nushell
> [[a]; [2024-04-01]] | polars into-df --schema {a: "datetime<ns,UTC>"}
╭───┬───────────────────────╮
│ # │           a           │
├───┼───────────────────────┤
│ 0 │ 04/01/2024 12:00:00AM │
> [[a]; [2024-04-01]] | polars into-df --schema {a: "datetime<ms,UTC>"}
╭───┬───────────────────────╮
│ # │           a           │
├───┼───────────────────────┤
│ 0 │ 06/27/2035 11:22:33PM │ <-- changing the time unit should not change the actual value
> [[a]; [1day]] | polars into-df --schema {a: "duration<ns>"}
╭───┬────────────────╮
│ # │       a        │
├───┼────────────────┤
│ 0 │ 86400000000000 │
╰───┴────────────────╯
> [[a]; [1day]] | polars into-df --schema {a: "duration<ms>"}
╭───┬──────────────────────╮
│ # │          a           │
├───┼──────────────────────┤
│ 0 │ -5833720368547758080 │ <-- i64 overflow
╰───┴──────────────────────╯
```

## Proposed Implementation

```nushell
> [[a]; [2024-04-01]] | polars into-df --schema {a: "datetime<ns,UTC>"}
╭───┬───────────────────────╮
│ # │           a           │
├───┼───────────────────────┤
│ 0 │ 04/01/2024 12:00:00AM │
╰───┴───────────────────────╯
> [[a]; [2024-04-01]] | polars into-df --schema {a: "datetime<ms,UTC>"}
╭───┬───────────────────────╮
│ # │           a           │
├───┼───────────────────────┤
│ 0 │ 04/01/2024 12:00:00AM │
╰───┴───────────────────────╯
> [[a]; [1day]] | polars into-df --schema {a: "duration<ns>"}
╭───┬────────────────╮
│ # │       a        │
├───┼────────────────┤
│ 0 │ 86400000000000 │
╰───┴────────────────╯
> [[a]; [1day]] | polars into-df --schema {a: "duration<ms>"}
╭───┬──────────╮
│ # │    a     │
├───┼──────────┤
│ 0 │ 86400000 │
╰───┴──────────╯
```

# User-Facing Changes


No user-facing breaking change.

Developer breaking change: to mitigate the silent overflow in

nanoseconds conversion functions `nanos_from_timeunit` and

`nanos_to_timeunit` (new), the function signatures were changed from

`i64` to `Result<i64, ShellError>`.
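The direction of the two conversions, and why only one of them needs an overflow check, can be sketched as follows. This is a minimal illustration rather than the plugin's code: it borrows the function names from the description, but the `TimeUnit` enum and `nanos_per_unit` helper are hypothetical, `String` stands in for `ShellError`, and the `us` unit is an assumption (only `ns` and `ms` appear in the examples).

```rust
/// Time units used in the schema strings (`ns` and `ms` appear in the examples;
/// `us` is included here as an assumption).
#[derive(Clone, Copy)]
enum TimeUnit {
    Nanoseconds,
    Microseconds,
    Milliseconds,
}

/// Number of nanoseconds in one tick of the given unit.
fn nanos_per_unit(unit: TimeUnit) -> i64 {
    match unit {
        TimeUnit::Nanoseconds => 1,
        TimeUnit::Microseconds => 1_000,
        TimeUnit::Milliseconds => 1_000_000,
    }
}

/// Nanoseconds -> unit: integer division, which cannot overflow for these divisors.
/// The `Result` return type only mirrors the signature described above.
fn nanos_to_timeunit(nanos: i64, unit: TimeUnit) -> Result<i64, String> {
    Ok(nanos / nanos_per_unit(unit))
}

/// Unit -> nanoseconds: multiplication, checked so that overflow becomes an error.
fn nanos_from_timeunit(value: i64, unit: TimeUnit) -> Result<i64, String> {
    value
        .checked_mul(nanos_per_unit(unit))
        .ok_or_else(|| format!("{value} overflows i64 when converted to nanoseconds"))
}
```

For one day, `nanos_to_timeunit(86_400_000_000_000, TimeUnit::Milliseconds)` gives `Ok(86_400_000)`, matching the proposed `duration<ms>` output. Multiplying the same input by 1,000,000 with wrapping `i64` arithmetic instead gives -5833720368547758080, the overflowed value shown under "Current Implementation". Note that Rust's `/` truncates toward zero for negative inputs; whether the real code rounds differently is not stated here.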

# Tests + Formatting


No additional examples were added, but I'd be happy to add a few if

needed. The covering tests just didn't fit well into any examples.

# After Submitting


# Description


This PR enables the option to set a column type to `decimal` in the

`--schema` parameter of `polars into-df` and `polars into-lazy`

commands. This option was already available in `polars open`, which used

the underlying polars io commands that already accounted for decimal

types when specified in the schema.

See below for a comparison of the current and proposed implementation.

```nushell
# Current Implementation
> [[a b]; [1 1.618]]| polars into-df -s {a: u8, b: 'decimal<4,3>'}
Error: × Error creating dataframe: Unsupported type: Decimal(Some(4), Some(3))

# Proposed Implementation
> [[a b]; [1 1.618]]| polars into-df -s {a: u8, b: 'decimal<4,3>'} | polars schema
╭───┬──────────────╮
│ a │ u8           │
│ b │ decimal<4,3> │
╰───┴──────────────╯
```

# User-Facing Changes


No breaking change. Users have the new option to specify decimal in
`--schema` in `polars into-df` and `polars into-lazy`.

# Tests + Formatting


An example in `polars into-df` was modified to showcase the decimal

type.

# After Submitting


# Description

On Windows, I would like to be able to call a script directly in nushell

and have that script be found in the PATH and run based on filetype

associations and PATHEXT.

There have been previous discussions related to this feature, see

https://github.com/nushell/nushell/issues/6440 and

https://github.com/nushell/nushell/issues/15476. The latter issue is

only a few weeks old, and after taking a look at it and the resultant PR

I found that currently nushell is hardcoded to support only running

nushell (.nu) scripts in this way.

This PR seeks to make this functionality more generic. Instead of

checking that the file extension is explicitly `NU`, it instead checks

that it **is not** one of `COM`, `EXE`, `BAT`, `CMD`, or `PS1`. The

first four of these are extensions that Windows can figure out how to

run on its own. This is implied by the output of `ftype` for any of

these extensions, which shows that files are just run without a calling

command anyway.

```
>ftype batfile
batfile="%1" %*
```

PS1 files are ignored because they are handled as a special case in
later logic.

In implementing this I initially tried to fetch the value of PATHEXT and

confirm that the file extension was indeed in PATHEXT. But I determined

that because `which()` respects PATHEXT, this would be redundant; any

executable that is found by `which` is already going to have an

extension in PATHEXT. It is thus only necessary to check that it isn't

one of the few extensions that should be called directly, without the

use of `cmd.exe`.
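The extension check described above might look roughly like the sketch below. It is an illustration under assumptions, not the code in `run_external.rs`: `should_run_via_cmd` and `EXCLUDED_EXTENSIONS` are hypothetical names, the comparison is assumed to be case-insensitive, and a file with no extension is assumed to fall through to the existing logic.

```rust
use std::path::Path;

/// Extensions excluded from the generic path: the first four run directly,
/// and PS1 is handled as a special case elsewhere.
const EXCLUDED_EXTENSIONS: [&str; 5] = ["COM", "EXE", "BAT", "CMD", "PS1"];

/// Hypothetical helper: true when the resolved file should be launched via `cmd.exe`.
fn should_run_via_cmd(path: &Path) -> bool {
    match path.extension().and_then(|ext| ext.to_str()) {
        // Assumption: compare case-insensitively, since Windows file names are.
        Some(ext) => !EXCLUDED_EXTENSIONS
            .iter()
            .any(|excluded| excluded.eq_ignore_ascii_case(ext)),
        // Assumption: a file with no extension is left to the existing logic.
        None => false,
    }
}
```

Because the path has already been resolved through `which`, which respects PATHEXT, the helper only needs the deny-list; anything else with an extension (`.nu`, `.py`, and so on) is handed to `cmd.exe`, which applies the filetype association.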

There are some small formatting changes to `run_external.rs` in the PR

as a result of running `cargo fmt` that are not entirely related to the

code I modified. I can back out those changes if that is desired.

# User-Facing Changes


Behavior for `.nu` scripts will not change. Users will still need to

ensure they have PATHEXT and filetype associations set correctly for

them to work, but this will now also apply to scripts of other types.

Fixes #14660

# Description

Fixed an issue where tables with empty values were incorrectly replaced
with `[table X row]` when converted to Markdown using the `to md`
command.

Empty values are now replaced with whitespaces to preserve the original

table structure.

Additionally, fixed a missing newline (\n) between tables when using

--per-element in a list.

Removed (\n) from 2 examples for consistency.

Example:

```
For the list
let list = [ {name: bob, age: 21} {name: jim, age: 20} {name: sarah}]

Running "$list | to md --pretty" outputs:

| name  | age |
| ----- | --- |
| bob   | 21  |
| jim   | 20  |
| sarah |     |

------------------------------------------------------------------------------------------------

For the list
let list = [ {name: bob, age: 21} {name: jim, age: 20} {name: sarah} {name: timothy, age: 50} {name: paul} ]

Running "$list | to md --per-element --pretty" outputs:

| name    | age |
| ------- | --- |
| bob     | 21  |
| jim     | 20  |
| timothy | 50  |

| name  |
| ----- |
| sarah |
| paul  |
```
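The idea of padding a missing cell with whitespace so the row keeps its shape can be sketched in Rust. This is a simplified, hypothetical helper (`to_md_pretty` and `cell_text` are names made up for this sketch), not the `to md` implementation; it handles a single table with a fixed header list and represents an empty value as `None` or a short row.

```rust
/// Text of a cell, or "" when the value is missing (hypothetical helper).
fn cell_text<'a>(row: &[Option<&'a str>], index: usize) -> &'a str {
    row.get(index).copied().flatten().unwrap_or("")
}

/// Render rows as a padded Markdown table; a missing cell becomes spaces.
fn to_md_pretty(headers: &[&str], rows: &[Vec<Option<&str>>]) -> String {
    // Each column is as wide as its header or its widest cell.
    let widths: Vec<usize> = headers
        .iter()
        .enumerate()
        .map(|(i, header)| {
            rows.iter()
                .map(|row| cell_text(row, i).chars().count())
                .fold(header.chars().count(), usize::max)
        })
        .collect();

    // Pad every cell to its column width and wrap the row in pipes.
    let render = |cells: Vec<&str>| -> String {
        let padded: Vec<String> = cells
            .iter()
            .zip(&widths)
            .map(|(text, width)| format!("{:<width$}", text, width = *width))
            .collect();
        format!("| {} |", padded.join(" | "))
    };

    let mut lines = vec![render(headers.to_vec())];
    let dashes: Vec<String> = widths.iter().map(|w| "-".repeat(*w)).collect();
    lines.push(render(dashes.iter().map(String::as_str).collect()));
    for row in rows {
        lines.push(render((0..headers.len()).map(|i| cell_text(row, i)).collect()));
    }
    lines.join("\n")
}
```

Called with the headers `name` and `age` and the three rows from the first example, where the `sarah` row has no `age` entry, the last line comes out as `| sarah |     |`, so the row is kept and the empty cell is filled with spaces.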

# User-Facing Changes

The `to md` command now behaves as expected when piping in a table that
contains empty values: all rows are shown, and the empty items are
replaced with whitespace.

# Tests + Formatting

Added 2 test cases to cover both issues.

fmt + clippy OK.

# After Submitting

The command documentation needs to be updated with an example for when

you want to "separate list into markdown tables"

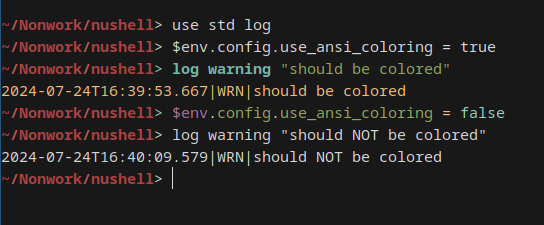

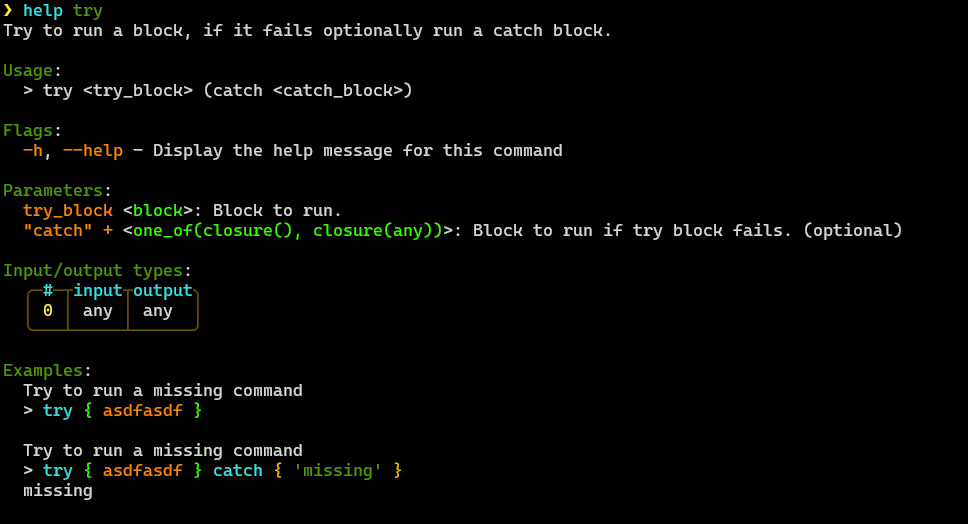

# Description

I was playing around with the `debug` command and wanted to add this

information to it but since most of it already existed in `describe` I

wanted to try and add it here. It adds a few more details that are

hopefully helpful. It mainly tries to add the value type, rust datatype,

and value. I'm not sure all of this is wanted or needed but I thought it

was an interesting introspection idea.

### Before

### After

# User-Facing Changes


# Tests + Formatting


# After Submitting


# Description

Tries to fix https://github.com/nushell/nushell/issues/15326 in another
way.

The main point of this change is to avoid duplicate `write` and `close`
operations on a redirected file. During compilation, if the compiler
knows that the current element is a sub-expression (as determined by the
private `is_subexpression` function), it no longer invokes
`finish_redirection`.

This avoids a duplicate `finish_redirection`.

# User-Facing Changes

`(^echo aa) o> /tmp/aaa` will no longer raise an error.

Here is the IR after the pr:

```
# 3 registers, 12 instructions, 11 bytes of data
# 1 file used for redirection
 0: load-literal %1, string("aaa")
 1: open-file file(0), %1, append = false
 2: load-literal %1, glob-pattern("echo", no_expand = false)
 3: load-literal %2, glob-pattern("true", no_expand = false)
 4: push-positional %1
 5: push-positional %2
 6: redirect-out file(0)
 7: redirect-err caller
 8: call decl 135 "run-external", %0
 9: write-file file(0), %0
10: close-file file(0)
11: return %0
```

# Tests + Formatting

Added 3 tests.

# After Submitting

Maybe need to update doc

https://github.com/nushell/nushell.github.io/pull/1876

---------

Co-authored-by: Stefan Holderbach <sholderbach@users.noreply.github.com>

Fixes #15559

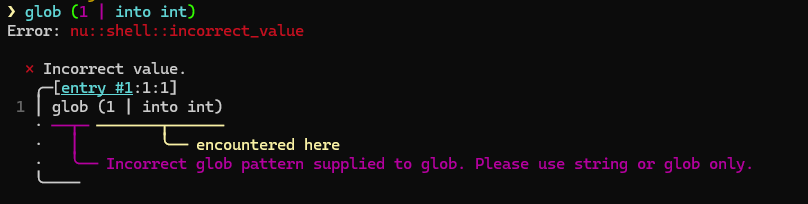

# Description

The glob command wasn't working correctly with symlinks in the /sys

filesystem. This commit adds a new flag that allows users to explicitly

control whether symlinks should be followed, with special handling for

the /sys directory.

The issue was that the glob command didn't follow symbolic links when

traversing the /sys filesystem, resulting in an empty list even though

paths should be found. This implementation adds a new

`--follow-symlinks` flag that explicitly enables following symlinks. By

default, it now follows symlinks in most paths but has special handling

for /sys paths where the flag is required.

Example:

```nushell
# Before: This would return an empty list on Linux systems
glob /sys/devices/system/cpu/cpu*/cpufreq/scaling_governor

# Now: This works as expected with the new flag
glob /sys/devices/system/cpu/cpu*/cpufreq/scaling_governor --follow-symlinks
```

# User-Facing Changes

1. Added the --follow-symlinks (-l) flag to the glob command that allows

users to explicitly control whether symbolic links should be followed

2. Added a new example to the glob command help text demonstrating the

use of this flag

# Tests + Formatting

1. Added a test for the new --follow-symlinks flag


Closes #15610.

# Description


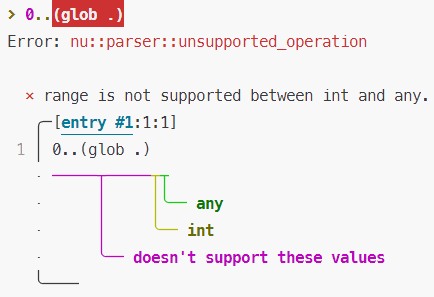

This PR attempts to improve the performance of `std/log *` by making the

following changes:

1. use explicit piping instead of `reduce` for constructing the log

message

2. constify `log-level`, `log-ansi`, `log-types` etc.

3. use `.` instead of `get` to access `$env` fields

# User-Facing Changes


Nothing.

# Tests + Formatting


# After Submitting


---------

Co-authored-by: Ben Yang <ben@ya.ng>

Co-authored-by: suimong <suimong@users.noreply.github.com>


# Description


Contrary to the underlying implementation in polars rust/python,
`polars pivot` throws an error if the user tries to pivot on multiple
columns of different types. This PR seeks to remove this type-check. See
comparison below.

```nushell
# Current implementation: throws error when pivoting on multiple values of different types.
> [[name subject date test_1 test_2 grade_1 grade_2]; [Cady maths 2025-04-01 98 100 A A] [Cady physics 2025-04-01 99 100 A A] [Karen maths 2025-04-02 61 60 D D] [Karen physics 2025-04-02 58 60 D D]] | polars into-df | polars pivot --on [subject] --index [name] --values [test_1 grade_1]
Error: × Merge error
   ╭─[entry #291:1:271]
 1 │ [[name subject date test_1 test_2 grade_1 grade_2]; [Cady maths 2025-04-01 98 100 A A] [Cady physics 2025-04-01 99 100 A A] [Karen maths 2025-04-02 61 60 D D] [Karen physics 2025-04-02 58 60 D D]] | polars into-df | polars pivot --on [subject] --index [name] --values [test_1 grade_1]
   · ───────┬──────
   · ╰── found different column types in list
   ╰────
  help: datatypes i64 and str are incompatible

# Proposed implementation
> [[name subject date test_1 test_2 grade_1 grade_2]; [Cady maths 2025-04-01 98 100 A A] [Cady physics 2025-04-01 99 100 A A] [Karen maths 2025-04-02 61 60 D D] [Karen physics 2025-04-02 58 60 D D]] | polars into-df | polars pivot --on [subject] --index [name] --values [test_1 grade_1]
╭───┬───────┬──────────────┬────────────────┬───────────────┬─────────────────╮
│ # │ name  │ test_1_maths │ test_1_physics │ grade_1_maths │ grade_1_physics │
├───┼───────┼──────────────┼────────────────┼───────────────┼─────────────────┤
│ 0 │ Cady  │           98 │             99 │ A             │ A               │
│ 1 │ Karen │           61 │             58 │ D             │ D               │
╰───┴───────┴──────────────┴────────────────┴───────────────┴─────────────────╯
```

Additionally, this PR ports over the `separator` parameter in `pivot`,

which allows the user to specify how to delimit multiple `values` column

names:

```nushell

> [[name subject date test_1 test_2 grade_1 grade_2]; [Cady maths 2025-04-01 98 100 A A] [Cady physics 2025-04-01 99 100 A A] [Karen maths 2025-04-02 61 60 D D] [Karen physics 2025-04-02 58 60 D D]] | polars into-df | polars pivot --on [subject] --index [name] --values [test_1 grade_1] --separator /

╭───┬───────┬──────────────┬────────────────┬───────────────┬─────────────────╮

│ # │ name │ test_1/maths │ test_1/physics │ grade_1/maths │ grade_1/physics │

├───┼───────┼──────────────┼────────────────┼───────────────┼─────────────────┤

│ 0 │ Cady │ 98 │ 99 │ A │ A │

│ 1 │ Karen │ 61 │ 58 │ D │ D │

╰───┴───────┴──────────────┴────────────────┴───────────────┴─────────────────╯

```
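The column naming above can be sketched outside of nushell. This is a minimal illustration in plain Python, not the polars implementation: the `pivot` helper is hypothetical, it performs no aggregation (a later row simply overwrites an earlier one), and it only covers the case of multiple `values` columns.

```python
def pivot(rows, on, index, values, separator="_"):
    """Pivot a list of dicts (hypothetical helper, not the polars API).

    Each output column is named <value><separator><on value>.
    Columns may hold different types, since nothing is type-checked.
    """
    out = {}
    for row in rows:
        key = row[index]
        record = out.setdefault(key, {index: key})
        for value in values:
            record[f"{value}{separator}{row[on]}"] = row[value]
    return list(out.values())


rows = [
    {"name": "Cady", "subject": "maths", "test_1": 98, "grade_1": "A"},
    {"name": "Cady", "subject": "physics", "test_1": 99, "grade_1": "A"},
    {"name": "Karen", "subject": "maths", "test_1": 61, "grade_1": "D"},
    {"name": "Karen", "subject": "physics", "test_1": 58, "grade_1": "D"},
]
pivoted = pivot(rows, on="subject", index="name",
                values=["test_1", "grade_1"], separator="/")
```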

# User-Facing Changes


Soft breaking change: where a user may have previously expected an error

(pivoting on multiple columns with different types), no error is thrown.

# Tests + Formatting


Examples were added to `polars pivot`.

# After Submitting


Fixes #15528

# Description

Fixed `kv set` passing the pipeline input to the closure instead of the

value stored in that key.

# User-Facing Changes

Now `kv set` will pass the value in that key to the closure.
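
As a rough illustration of the intended semantics (not the actual `kv` implementation), the sketch below uses a plain dict as a stand-in store; `kv_set` and its parameters are hypothetical names. The update function receives the value currently stored under the key, not any outside input.

```python
def kv_set(store, key, value_or_update):
    """Set a key; if given a callable, call it with the stored value."""
    if callable(value_or_update):
        # The closure sees the value stored under `key`.
        store[key] = value_or_update(store.get(key))
    else:
        store[key] = value_or_update
    return store[key]


store = {}
kv_set(store, "count", 1)
kv_set(store, "count", lambda current: current + 1)
```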

# Tests + Formatting

# After Submitting

When combined with [the Cookbook

update](https://github.com/nushell/nushell.github.io/pull/1878), this

resolves #15452

# Description

When we removed the startup `ENV_CONVERSIONS` for path, as noted in the
issue above, we removed the ability for users to access this closure for
other purposes. This PR adds the PATH closures back as a `std` command
that outputs a record of closures (similar to `ENV_CONVERSIONS`).

# User-Facing Changes

Doc will be updated and users can once again easily access `direnv`

# Tests + Formatting

- 🟢 `toolkit fmt`

- 🟢 `toolkit clippy`

- 🟢 `toolkit test`

- 🟢 `toolkit test stdlib`

# After Submitting

Doc PR to be merged when released in 0.104

Fixes #13546

# Description

Previously, outer joins would remove rows without join columns, since

the "did not match" logic only executed when the row had the join

column.

To solve this, missing join columns are now treated the same as "exists

but did not match" cases. The logic now executes both when the join

column doesn't exist and when it exists but doesn't match, ensuring rows

without join columns are preserved. If the join column is not defined at

all, the previous behavior remains unchanged.

Example:

```

For the tables:

let left_side = [{a: a1 ref: 1} {a: a2 ref: 2} {a: a3}]

let right_side = [[b ref]; [b1 1] [b2 2] [b3 3]]

Running "$left_side | join -l $right_side ref" now outputs:

╭───┬────┬─────┬────╮

│ # │ a │ ref │ b │

├───┼────┼─────┼────┤

│ 0 │ a1 │ 1 │ b1 │

│ 1 │ a2 │ 2 │ b2 │

│ 2 │ a3 │ │ │

╰───┴────┴─────┴────╯

```
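
The rule described above (a missing join column is handled like "exists but did not match") can be sketched in plain Python. This is an illustration under assumptions, not the code in the `join` command: `left_join` is a hypothetical helper and empty cells are represented as `None`.

```python
def left_join(left, right, key):
    """Left outer join over lists of dicts (hypothetical helper)."""
    right_columns = [c for c in right[0] if c != key] if right else []
    result = []
    for lrow in left:
        # A row without the join column simply has no matches.
        matches = [r for r in right if key in lrow and r.get(key) == lrow[key]]
        if matches:
            for r in matches:
                result.append({**lrow, **r})
        else:
            # Same path for "missing join column" and "no match":
            # keep the left row and leave the right-hand columns empty.
            filler = {c: None for c in right_columns}
            result.append({key: None, **lrow, **filler})
    return result


left_side = [{"a": "a1", "ref": 1}, {"a": "a2", "ref": 2}, {"a": "a3"}]
right_side = [{"b": "b1", "ref": 1}, {"b": "b2", "ref": 2}, {"b": "b3", "ref": 3}]
joined = left_join(left_side, right_side, "ref")
```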

# User-Facing Changes

The `join` command will behave more similarly to SQL-style joins. In

this case, rows that lack the join column are preserved.

# Tests + Formatting

Added 2 test cases.

fmt + clippy OK.

# After Submitting

I don't believe anything is necessary.

# Description

Fixes: #14048

The issue happened when re-using a ***module file***: if the overlay had
already saved `PWD`, nushell restored that saved `PWD` after activating it.

This PR fixes it by restoring `PWD` after re-using a module file.

# User-Facing Changes

`overlay use spam.nu` will always keep `PWD`, if `spam.nu` itself

doesn't change `PWD` while activating.

# Tests + Formatting

Added 2 tests.

# After Submitting

N/A

# Description

This PR implements job tagging through a new `job tag` command and a
`--tag` flag for `job spawn`.

Closes #15354

# User-Facing Changes

- New `job tag` command

- Job list may now have an additional `tag` column for the tag of jobs

(rows representing jobs without tags do not have this column filled)

- New `--tag` flag for `job spawn`

# Tests + Formatting

Integration tests are provided to test the newly implemented features

# After Submitting

Possibly document job tagging in the jobs documentation

# Description

Enable the `socks-proxy` feature in ureq.

This allows use of the SOCKS protocol in proxy environment variables when
using the nushell HTTP client.

For example, to use a SOCKS5 proxy on localhost:

```

ALL_PROXY=socks5://localhost:8080 http get ...

```

# User-Facing Changes

None

# Tests + Formatting

# After Submitting

Closes #15543

# Description

1. Simplify code in ``datetime.rs`` based on a suggestion in my last PR

on "datetime from record"

1. Make ``into duration`` work with durations inside a record, provided

as a cell path

1. Make ``into duration`` work with durations given as a record

# User-Facing Changes

```nushell

# Happy paths

~> {d: '1hr'} | into duration d

╭───┬─────╮

│ d │ 1hr │

╰───┴─────╯

~> {week: 10, day: 2, sign: '+'} | into duration

10wk 2day

# Error paths and invalid usage

~> {week: 10, day: 2, sign: 'x'} | into duration

Error: nu::shell::incorrect_value

× Incorrect value.

╭─[entry #4:1:26]

1 │ {week: 10, day: 2, sign: 'x'} | into duration

· ─┬─ ──────┬──────

· │ ╰── encountered here

· ╰── Invalid sign. Allowed signs are +, -

╰────

~> {week: 10, day: -2, sign: '+'} | into duration

Error: nu::shell::incorrect_value

× Incorrect value.

╭─[entry #5:1:17]

1 │ {week: 10, day: -2, sign: '+'} | into duration

· ─┬ ──────┬──────

· │ ╰── encountered here

· ╰── number should be positive

╰────

~> {week: 10, day: '2', sign: '+'} | into duration

Error: nu::shell::only_supports_this_input_type

× Input type not supported.

╭─[entry #6:1:17]

1 │ {week: 10, day: '2', sign: '+'} | into duration

· ─┬─ ──────┬──────

· │ ╰── only int input data is supported

· ╰── input type: string

╰────

~> {week: 10, unknown: 1} | into duration

Error: nu::shell::unsupported_input

× Unsupported input

╭─[entry #7:1:1]

1 │ {week: 10, unknown: 1} | into duration

· ───────────┬────────── ──────┬──────

· │ ╰── Column 'unknown' is not valid for a structured duration. Allowed columns are: week, day, hour, minute, second, millisecond, microsecond, nanosecond, sign

· ╰── value originates from here

╰────

~> {week: 10, day: 2, sign: '+'} | into duration --unit sec

Error: nu::shell::incompatible_parameters

× Incompatible parameters.

╭─[entry #2:1:33]

1 │ {week: 10, day: 2, sign: '+'} | into duration --unit sec

· ──────┬────── ─────┬────

· │ ╰── the units should be included in the record

· ╰── got a record as input

╰────

```
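
The validation rules visible in the examples above can be summarized in a small Python sketch. It is only an approximation of the behavior shown, not the Rust code in this PR: `record_to_duration_ns` is a hypothetical name, and treating a missing `sign` as `+` is an assumption.

```python
NS_PER_UNIT = {
    "week": 7 * 24 * 3600 * 10**9,
    "day": 24 * 3600 * 10**9,
    "hour": 3600 * 10**9,
    "minute": 60 * 10**9,
    "second": 10**9,
    "millisecond": 10**6,
    "microsecond": 10**3,
    "nanosecond": 1,
}


def record_to_duration_ns(record):
    """Convert a structured duration record to signed nanoseconds."""
    sign = record.get("sign", "+")  # assumption: sign defaults to '+'
    if sign not in ("+", "-"):
        raise ValueError("Invalid sign. Allowed signs are +, -")
    total = 0
    for column, value in record.items():
        if column == "sign":
            continue
        if column not in NS_PER_UNIT:
            raise ValueError(f"Column '{column}' is not valid for a structured duration")
        if isinstance(value, bool) or not isinstance(value, int):
            raise TypeError("only int input data is supported")
        if value < 0:
            raise ValueError("number should be positive")
        total += value * NS_PER_UNIT[column]
    return total if sign == "+" else -total


ten_weeks_two_days = record_to_duration_ns({"week": 10, "day": 2, "sign": "+"})
```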

# Tests + Formatting

- Add examples and integration tests for ``into duration``

- Add one test for ``into duration``

# After Submitting

If this is merged in time, I'll update my PR on the "datetime handling

highlights" for the release notes.

Closes #12858

# Description

As explained in the ticket, this is easy to reproduce. Example: 1.07 minutes
is 1.07 × 60 = 64.2 seconds.

```nushell

# before - wrong

> 1.07min

1min 4sec

# now - right

> 1.07min

1min 4sec 200ms

```
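
The arithmetic behind the expected value can be checked with a short Python sketch. It uses `Decimal` to avoid float rounding and is only a worked example of the conversion, not the parser code changed in this PR; the helper names are hypothetical.

```python
from decimal import Decimal

NS_PER_MIN = 60 * 10**9


def minutes_to_ns(text):
    """Convert a decimal number of minutes to whole nanoseconds."""
    return int(Decimal(text) * NS_PER_MIN)


def format_duration(ns):
    """Render nanoseconds as min/sec/ms parts, skipping zero parts."""
    parts = []
    for label, size in (("min", NS_PER_MIN), ("sec", 10**9), ("ms", 10**6)):
        count, ns = divmod(ns, size)
        if count:
            parts.append(f"{count}{label}")
    return " ".join(parts)


total_ns = minutes_to_ns("1.07")      # 64.2 seconds
formatted = format_duration(total_ns)
```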

# User-Facing Changes

Bug is fixed when using ``into duration``.

# Tests + Formatting

Added a test for ``into duration``

Fixed ``parse_long_duration`` test: we gained precision 😄

# After Submitting

Release notes? Or blog is enough? Let me know

# Description

Fixes a regression caused by #15567, where I switched the space detection
in command names from `get_span_content` to `get_decl().name()`, which is
slightly faster but doesn't work in some cases:

e.g.

```nushell

use std/assert

assert equal

```

Reverted in this PR.

# User-Facing Changes

None

# Tests + Formatting

Refined

# After Submitting


# Description


This PR is a follow-up to the previous PR #15557 and part of a wider

campaign to enable certain polars commands that only operated on the

entire dataframe to also operate on expressions. Here, we enable two

commands `polars as-date` and `polars as-datetime` to receive

expressions as inputs so that they may be used on specific columns in a

dataframe with multiple columns of different types. See examples below.

```nushell

> [[a b]; ["2025-04-01" 1] ["2025-04-02" 2] ["2025-04-03" 3]] | polars into-df | polars select (polars col a | polars as-date %Y-%m-%d) b | polars collect

╭───┬───────────────────────┬───╮

│ # │ a │ b │

├───┼───────────────────────┼───┤

│ 0 │ 04/01/2025 12:00:00AM │ 1 │

│ 1 │ 04/02/2025 12:00:00AM │ 2 │

│ 2 │ 04/03/2025 12:00:00AM │ 3 │

╰───┴───────────────────────┴───╯

> seq date -b 2025-04-01 --periods 4 --increment 25min -o "%Y-%m-%d %H:%M:%S" | polars into-df | polars select (polars col 0 | polars as-datetime "%Y-%m-%d %H:%M:%S") | polars collect

╭───┬───────────────────────╮

│ # │ 0 │

├───┼───────────────────────┤

│ 0 │ 04/01/2025 12:00:00AM │

│ 1 │ 04/01/2025 12:25:00AM │

│ 2 │ 04/01/2025 12:50:00AM │

│ 3 │ 04/01/2025 01:15:00AM │

╰───┴───────────────────────╯

```

# User-Facing Changes


No breaking changes. Users have the additional option to use `polars

as-date` and `polars as-datetime` in expressions that operate on

specific columns.

# Tests + Formatting


Examples have been added to `polars as-date` and `polars as-datetime`.

# After Submitting


# Description


This PR fixes an issue where, for custom values, the `//` operator was

incorrectly mapped to `Math::Divide` instead of `Math::FloorDivide`.

This PR also fixes the same mis-mapping in the `polars` plugin.

```nushell

> [[a b c]; [x 1 1.1] [y 2 2.2] [z 3 3.3]] | polars into-df | polars select {div: ((polars col c) / (polars col b)), floor_div: ((polars col c) // (polars col b))} | polars collect

╭───┬───────┬───────────╮

│ # │ div │ floor_div │

├───┼───────┼───────────┤

│ 0 │ 1.100 │ 1.000 │

│ 1 │ 1.100 │ 1.000 │

│ 2 │ 1.100 │ 1.000 │

╰───┴───────┴───────────╯

```
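
For comparison, the same two operators on the sample data in plain Python. This only illustrates why every row above shows 1.100 versus 1.000 (each `c / b` is about 1.1); it says nothing about how the plugin dispatches the operators.

```python
b = [1, 2, 3]
c = [1.1, 2.2, 3.3]

# True division keeps the fractional part (rounded here for display).
div = [round(x / y, 3) for x, y in zip(c, b)]

# Floor division rounds the quotient down to a whole number.
floor_div = [x // y for x, y in zip(c, b)]
```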

**Note:** the number of line changes in this PR is inflated because of

auto-formatting in `nu_plugin_polars/Cargo.toml`. Substantively, I've

only added the `round_series` feature to the polars dependency list.

# User-Facing Changes


Breaking change: users who expected the operator `//` to function the

same as `/` for custom values will not get the expected result.

# Tests + Formatting


No tests have been added yet, but let me know if we should put something
into one of the polars examples.

# After Submitting


# Description


This PR adds the exponent operator ("**") to polars expressions.

```nushell

> [[a b]; [6 2] [4 2] [2 2]] | polars into-df | polars select a b {c: ((polars col a) ** 2)}

╭───┬───┬───┬────╮

│ # │ a │ b │ c │

├───┼───┼───┼────┤

│ 0 │ 6 │ 2 │ 36 │

│ 1 │ 4 │ 2 │ 16 │

│ 2 │ 2 │ 2 │ 4 │

╰───┴───┴───┴────╯

```
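
Conceptually, supporting `**` means the operator builds a new expression node that is evaluated later against the dataframe. The toy Python classes below (`Col`, `Pow`) are hypothetical and only sketch that idea; they are not the plugin's types.

```python
class Col:
    """A reference to a named column (toy expression node)."""

    def __init__(self, name):
        self.name = name

    def __pow__(self, exponent):
        # `Col("a") ** 2` builds a Pow node instead of computing anything.
        return Pow(self, exponent)

    def evaluate(self, frame):
        return frame[self.name]


class Pow:
    """Raise every value of the base expression to a fixed exponent."""

    def __init__(self, base, exponent):
        self.base = base
        self.exponent = exponent

    def evaluate(self, frame):
        return [v ** self.exponent for v in self.base.evaluate(frame)]


frame = {"a": [6, 4, 2], "b": [2, 2, 2]}
c = (Col("a") ** 2).evaluate(frame)
```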

# User-Facing Changes


No breaking changes. Users can now use the `**` operator in polars
expressions.

# Tests + Formatting


An example in `polars select` was modified to showcase the `**`

operator.

# After Submitting


# Description

This adds a new option `--raw-value`/`-v` to the `debug` command that
returns only the debug string part of the nushell value, because sometimes
you don't need the span or nushell datatype and you just want the val part.

You can see the difference between `debug -r` and `debug -v` here.

It should work on all datatypes except Value::Error and Value::Closure.

# User-Facing Changes


# Tests + Formatting


# After Submitting


# Description


This PR seeks to expand `polars col` functionality to allow selecting

multiple columns and columns by type, which is particularly useful when

piping to subsequent expressions that should be applied to each column

selected (e.g., `polars col int --type | polars sum` as a shorthand for

`[(polars col a | polars sum), (polars col b | polars sum)]`). See

examples below.

```nushell

# Select multiple columns (cannot be used with asterisk wildcard)

> [[a b c]; [x 1 1.1] [y 2 2.2] [z 3 3.3]] | polars into-df

| polars select (polars col b c | polars sum) | polars collect

╭───┬───┬──────╮

│ # │ b │ c │

├───┼───┼──────┤

│ 0 │ 6 │ 6.60 │

╰───┴───┴──────╯

# Select multiple columns by types (cannot be used with asterisk wildcard)

> [[a b c]; [x o 1.1] [y p 2.2] [z q 3.3]] | polars into-df

| polars select (polars col str f64 --type | polars max) | polars collect

╭───┬───┬───┬──────╮

│ # │ a │ b │ c │

├───┼───┼───┼──────┤

│ 0 │ z │ q │ 3.30 │

╰───┴───┴───┴──────╯

```
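
The "select several columns, then apply the next expression to each" behavior can be mimicked in plain Python. This is a sketch under assumptions: `select_columns` and `apply_each` are hypothetical helpers, and the polars type names `str` and `f64` are mapped to the Python types `str` and `float`.

```python
def select_columns(frame, names=None, types=None):
    """Pick columns by name, or by the Python type of their values."""
    if names is not None:
        return {n: frame[n] for n in names}
    wanted = tuple(types)
    return {n: col for n, col in frame.items()
            if col and isinstance(col[0], wanted)}


def apply_each(columns, aggregate):
    """Apply one aggregation to every selected column."""
    return {name: aggregate(col) for name, col in columns.items()}


by_name = {"a": ["x", "y", "z"], "b": [1, 2, 3], "c": [1.1, 2.2, 3.3]}
sums = apply_each(select_columns(by_name, names=["b", "c"]), sum)

by_type = {"a": ["x", "y", "z"], "b": ["o", "p", "q"], "c": [1.1, 2.2, 3.3]}
maxes = apply_each(select_columns(by_type, types=[str, float]), max)
```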

# User-Facing Changes


No breaking changes. Users have the additional capability to select

multiple columns in `polars col`.

# Tests + Formatting


Examples have been added to `polars col`.

# After Submitting


# Description


In this PR I added the flag `--plugins` to the `testing.nu` file inside

of `crates/nu-std`. This allows running tests with active plugins. While

I did not use it here in this repo, it allows testing in

[nushell/plugin-examples](https://github.com/nushell/plugin-examples)

with plugins.

# User-Facing Changes


None, just the additional flag.

# Tests + Formatting


- 🟢 `toolkit fmt`

- 🟢 `toolkit clippy`

- 🟢 `toolkit test`

- 🟢 `toolkit test stdlib`

(nothing broke \o/)

# After Submitting


# Description


This PR lifts the constraint that expressions in the `polars group-by`
command must be of type `Expr::Column`. The underlying polars crate allows
most `Expr` types, so this change enables grouping by more complex
expressions.

In the example below, we group by even or odd days of column `a`. While

we can reach the same result by creating and grouping by a new column in

two separate steps, integrating these steps in a single group-by allows

for better delegation to the polars optimizer.

```nushell

# Group by an expression and perform an aggregation

> [[a b]; [2025-04-01 1] [2025-04-02 2] [2025-04-03 3] [2025-04-04 4]]

| polars into-lazy

| polars group-by (polars col a | polars get-day | $in mod 2)

| polars agg [

(polars col b | polars min | polars as "b_min")

(polars col b | polars max | polars as "b_max")

(polars col b | polars sum | polars as "b_sum")

]

| polars collect

| polars sort-by a

╭───┬───┬───────┬───────┬───────╮

│ # │ a │ b_min │ b_max │ b_sum │

├───┼───┼───────┼───────┼───────┤

│ 0 │ 0 │ 2 │ 4 │ 6 │

│ 1 │ 1 │ 1 │ 3 │ 4 │

╰───┴───┴───────┴───────┴───────╯

```
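
The grouping logic in the example (key computed per row, then min/max/sum per group) looks like this in plain Python. It is a sketch for checking the expected numbers only; `group_by_agg` is a hypothetical helper and no lazy evaluation or optimizer is involved.

```python
from datetime import date


def group_by_agg(rows, key_fn, value_fn):
    """Group rows by a computed key and aggregate one value per group."""
    groups = {}
    for row in rows:
        groups.setdefault(key_fn(row), []).append(value_fn(row))
    return [
        {"key": key, "b_min": min(vals), "b_max": max(vals), "b_sum": sum(vals)}
        for key, vals in sorted(groups.items())
    ]


rows = [{"a": date(2025, 4, day), "b": day} for day in range(1, 5)]

# Key is the parity of the day of month: 0 for even days, 1 for odd days.
result = group_by_agg(rows, lambda r: r["a"].day % 2, lambda r: r["b"])
```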

# User-Facing Changes


No breaking changes. The user is empowered to use more complex

expressions in `polars group-by`

# Tests + Formatting


An example is added to `polars group-by`.

# After Submitting


# Description


This PR directly ports the polars function `polars.Expr.dt.truncate`

(https://docs.pola.rs/api/python/stable/reference/expressions/api/polars.Expr.dt.truncate.html),

which rounds a datetime to an arbitrarily specified period length. This

function is particularly useful when rounding to variable period lengths

such as months or quarters. See below for examples.

```nushell

# Truncate a series of dates by period length

> seq date -b 2025-01-01 --periods 4 --increment 6wk -o "%Y-%m-%d %H:%M:%S" | polars into-df | polars as-datetime "%F %H:%M:%S" --naive | polars select datetime (polars col datetime | polars truncate 5d37m | polars as truncated) | polars collect

╭───┬───────────────────────┬───────────────────────╮

│ # │ datetime │ truncated │

├───┼───────────────────────┼───────────────────────┤

│ 0 │ 01/01/2025 12:00:00AM │ 12/30/2024 04:49:00PM │

│ 1 │ 02/12/2025 12:00:00AM │ 02/08/2025 09:45:00PM │

│ 2 │ 03/26/2025 12:00:00AM │ 03/21/2025 02:41:00AM │

│ 3 │ 05/07/2025 12:00:00AM │ 05/05/2025 08:14:00AM │

╰───┴───────────────────────┴───────────────────────╯

# Truncate based on period length measured in quarters and months

> seq date -b 2025-01-01 --periods 4 --increment 6wk -o "%Y-%m-%d %H:%M:%S" | polars into-df | polars as-datetime "%F %H:%M:%S" --naive | polars select datetime (polars col datetime | polars truncate 1q5mo | polars as truncated) | polars collect

╭───┬───────────────────────┬───────────────────────╮

│ # │ datetime │ truncated │

├───┼───────────────────────┼───────────────────────┤

│ 0 │ 01/01/2025 12:00:00AM │ 09/01/2024 12:00:00AM │

│ 1 │ 02/12/2025 12:00:00AM │ 09/01/2024 12:00:00AM │

│ 2 │ 03/26/2025 12:00:00AM │ 09/01/2024 12:00:00AM │

│ 3 │ 05/07/2025 12:00:00AM │ 05/01/2025 12:00:00AM │

╰───┴───────────────────────┴───────────────────────╯

```
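
The outputs above are consistent with flooring each datetime to the start of a window counted from the Unix epoch. The Python sketch below reproduces the sample rows under that assumption; it is not the polars implementation, the helper names are hypothetical, and `1q5mo` is treated as 8 calendar months.

```python
from datetime import datetime, timedelta

EPOCH = datetime(1970, 1, 1)


def truncate_fixed(dt, period):
    """Floor a naive datetime to a fixed-length window anchored at the epoch."""
    return dt - (dt - EPOCH) % period


def truncate_months(dt, months):
    """Floor a naive datetime to a window of whole calendar months."""
    index = (dt.year - 1970) * 12 + (dt.month - 1)  # months since epoch
    index -= index % months
    return datetime(1970 + index // 12, index % 12 + 1, 1)


dates = [datetime(2025, 1, 1) + timedelta(weeks=6 * i) for i in range(4)]
fixed = [truncate_fixed(d, timedelta(days=5, minutes=37)) for d in dates]
monthly = [truncate_months(d, 8) for d in dates]
```

Calendar-based units need the separate month arithmetic because months and quarters do not have a fixed length in seconds.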

# User-Facing Changes


No breaking changes. This PR introduces a new command `polars truncate`

# Tests + Formatting


Example test was added.

# After Submitting


Bumps [rust-embed](https://github.com/pyros2097/rust-embed) from 8.6.0

to 8.7.0.

<details>

<summary>Changelog</summary>

<p><em>Sourced from <a

href="https://github.com/pyrossh/rust-embed/blob/master/changelog.md">rust-embed's

changelog</a>.</em></p>

<blockquote>

<h2>[8.7.0] - 2025-04-10</h2>

<ul>

<li>add deterministic timestamps flag for deterministic builds <a

href="https://redirect.github.com/pyrossh/rust-embed/pull/259">#259</a>.

Thanks to <a href="https://github.com/daywalker90">daywalker90</a></li>

</ul>

</blockquote>

</details>

<details>

<summary>Commits</summary>

<ul>

<li>See full diff in <a

href="https://github.com/pyros2097/rust-embed/commits">compare

view</a></li>

</ul>

</details>

<br />


Dependabot will resolve any conflicts with this PR as long as you don't

alter it yourself. You can also trigger a rebase manually by commenting

`@dependabot rebase`.

[//]: # (dependabot-automerge-start)

[//]: # (dependabot-automerge-end)

---

<details>

<summary>Dependabot commands and options</summary>

<br />

You can trigger Dependabot actions by commenting on this PR:

- `@dependabot rebase` will rebase this PR

- `@dependabot recreate` will recreate this PR, overwriting any edits

that have been made to it

- `@dependabot merge` will merge this PR after your CI passes on it

- `@dependabot squash and merge` will squash and merge this PR after

your CI passes on it

- `@dependabot cancel merge` will cancel a previously requested merge

and block automerging

- `@dependabot reopen` will reopen this PR if it is closed

- `@dependabot close` will close this PR and stop Dependabot recreating

it. You can achieve the same result by closing it manually

- `@dependabot show <dependency name> ignore conditions` will show all

of the ignore conditions of the specified dependency

- `@dependabot ignore this major version` will close this PR and stop

Dependabot creating any more for this major version (unless you reopen

the PR or upgrade to it yourself)

- `@dependabot ignore this minor version` will close this PR and stop

Dependabot creating any more for this minor version (unless you reopen

the PR or upgrade to it yourself)

- `@dependabot ignore this dependency` will close this PR and stop

Dependabot creating any more for this dependency (unless you reopen the

PR or upgrade to it yourself)

</details>

Signed-off-by: dependabot[bot] <support@github.com>

Co-authored-by: dependabot[bot] <49699333+dependabot[bot]@users.noreply.github.com>

Bumps [data-encoding](https://github.com/ia0/data-encoding) from 2.8.0

to 2.9.0.

<details>

<summary>Commits</summary>

<ul>

<li><a

href="4fce77c46b"><code>4fce77c</code></a>

Release 2.9.0 (<a

href="https://redirect.github.com/ia0/data-encoding/issues/138">#138</a>)</li>

<li><a

href="d81616352a"><code>d816163</code></a>

Add encode_mut_str to guarantee UTF-8 for safe callers (<a

href="https://redirect.github.com/ia0/data-encoding/issues/137">#137</a>)</li>

<li><a

href="ec53217669"><code>ec53217</code></a>

Update doc badge in README.md (<a

href="https://redirect.github.com/ia0/data-encoding/issues/135">#135</a>)</li>

<li>See full diff in <a

href="https://github.com/ia0/data-encoding/compare/v2.8.0...v2.9.0">compare

view</a></li>

</ul>

</details>


Signed-off-by: dependabot[bot] <support@github.com>

Co-authored-by: dependabot[bot] <49699333+dependabot[bot]@users.noreply.github.com>

Fixes a bug caused by #15536

Sorry about that, @fdncred

# Description

I've made the panic reproducible in the test case.

TL;DR: the completer sometimes returns new `decl_id`s that fall outside the
range of the `engine_state` passed in.
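
The failure mode can be illustrated with a small, self-contained sketch. Everything here is hypothetical (the `get_decl` function and the permanent/delta split are stand-ins, not nushell's actual types or the fix in this PR): an ID that is valid for a state extended with new declarations points past the end of the original table, so a plain index would panic while a checked lookup returns `None`.

```rust
/// Hypothetical lookup: `permanent` models the declarations of the engine
/// state that was passed in, `delta` models declarations added afterwards.
/// IDs continue counting where `permanent` ends.
fn get_decl<'a>(permanent: &'a [String], delta: &'a [String], id: usize) -> Option<&'a str> {
    match id.checked_sub(permanent.len()) {
        // `id` is smaller than `permanent.len()`: it refers to the original table.
        None => permanent.get(id).map(String::as_str),
        // `id` is past the original table: look it up in the newer entries.
        Some(offset) => delta.get(offset).map(String::as_str),
    }
}
```

In this sketch, asking for ID 2 against a two-entry `permanent` table and an empty `delta` yields `None`, whereas `permanent[2]` would panic.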

# User-Facing Changes

bug fix

# Tests + Formatting

+1

# After Submitting

# Description

Running `polars collect` on an eager dataframe should be a no-op.
However, when it is used in a pipeline without saving the result to a
variable, a cache error occurs. This PR fixes that cache error.

# Description

This updates `string_expand()` in nu-table's `util.rs` to use
`std::iter::repeat_n()`, which was suggested as a more readable
replacement for the existing `repeat().take()` implementation.
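
As a rough illustration of the change, the sketch below pads a string both ways. The `pad_right_*` helpers are hypothetical and are not the body of `string_expand()`. `std::iter::repeat_n` needs Rust 1.82 or newer.

```rust
use std::iter;

/// Pad `text` on the right with spaces using `repeat().take()`.
fn pad_right_old(text: &str, width: usize) -> String {
    let missing = width.saturating_sub(text.chars().count());
    let mut out = String::from(text);
    out.extend(iter::repeat(' ').take(missing));
    out
}

/// Same behavior, with the count stated directly via `repeat_n()`.
fn pad_right_new(text: &str, width: usize) -> String {
    let missing = width.saturating_sub(text.chars().count());
    let mut out = String::from(text);
    out.extend(iter::repeat_n(' ', missing));
    out
}
```

Both helpers produce the same string for any input; the second simply names the repetition count up front.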

# User-Facing Changes

Should have no user-facing changes.

# Tests + Formatting

All green circles!

```

- 🟢 `toolkit fmt`

- 🟢 `toolkit clippy`

- 🟢 `toolkit test`

- 🟢 `toolkit test stdlib`

```

<!--

if this PR closes one or more issues, you can automatically link the PR

with